With today’s rapid pace of technological evolution, it can be difficult to identify which advances are here to stay, and which are simply fleeting trends. Nowhere is this more true than with displays.

In the last ten years, we’ve watched gimmicks including 3D, HFR (High Frame Rate), and the mindless grab for ever-greater Ks (16K TV anyone?) dominate our horizons, only to quietly fade away.

But if there’s one advance in display technology that’s here to stay, it’s HDR (High Dynamic Range). Content mastered for HDR displays may still be in the minority today, but its share is growing rapidly and looks set to keep growing in the coming years. Soon it will be the rule rather than the exception.

How can we be so sure of this?

Perhaps it’s because the move to HDR is as significant as any shift in the history of motion imaging. And that includes evolutions such as the shift from 18 frames per second to 24, black and white to color, and standard definition to high definition.

But there’s another reason we can be confident HDR is here to stay, which we’ll be returning to throughout this article: HDR isn’t simply about a more impressive image. Instead, it represents a fundamental improvement to the way we approach and talk about image mastering, one that better reflects the native language of light and photography.

This means that we don’t need to be delivering HDR in order to benefit from it. In fact, no post-production professional can ignore it. Today we’re going to look at my top five reasons why you should care about HDR even if you’re not yet delivering for it.

Before we dive in, let’s go over the basics.

What is HDR?

By now, most of us know that HDR stands for high dynamic range. But what does this mean in specific terms? At the simplest level, HDR standards are defined by two properties: dynamic range and color gamut.

Dynamic Range

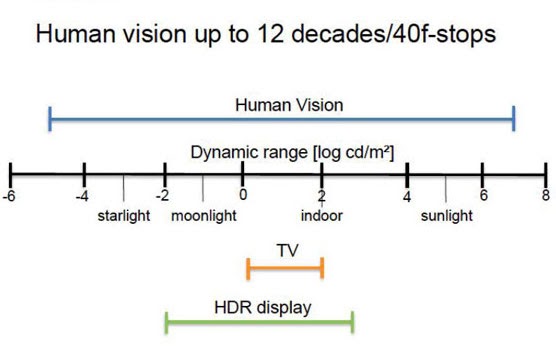

Dynamic range refers to the distance between the deepest shadow and the brightest highlight an imaging system is capable of capturing or reproducing — be it our eyes, a camera, or a display.

Our eyes perceive upwards of 30 f-stops of dynamic range. And in recent years we’ve seen high-end digital camera sensors get ever closer to matching this performance on the capture side. HDR simply applies this same concept to image reproduction, delivering imagery with a higher contrast ratio than traditional SDR (standard dynamic range) displays.

This is a long-overdue improvement, as the dynamic range of traditional displays and projection (4-6 stops) has lagged far behind that of our eyes and cameras for the bulk of imaging history.
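To put those stop counts in perspective, here is a minimal Python sketch of the arithmetic. It relies only on the photographic convention that each stop is a doubling of light, so N stops correspond to a contrast ratio of 2^N:1. The function names are my own, and the stop figures are simply the ones quoted above.

```python
import math


def stops_to_contrast_ratio(stops: float) -> float:
    """Each stop doubles the light, so N stops span a 2**N : 1 ratio."""
    return 2.0 ** stops


def contrast_ratio_to_stops(ratio: float) -> float:
    """Inverse conversion: how many doublings fit inside a contrast ratio."""
    return math.log2(ratio)


# Stop counts quoted in the text above (illustrative, not measurements).
examples = [
    ("traditional display, low end", 4),
    ("traditional display, high end", 6),
    ("human vision, as quoted above", 30),
]

for label, stops in examples:
    ratio = stops_to_contrast_ratio(stops)
    print(f"{label}: {stops} stops is about {ratio:,.0f}:1")
```

Because the scale is exponential, a gap of a few stops is a far bigger difference in contrast than the small numbers suggest.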

Color Gamut

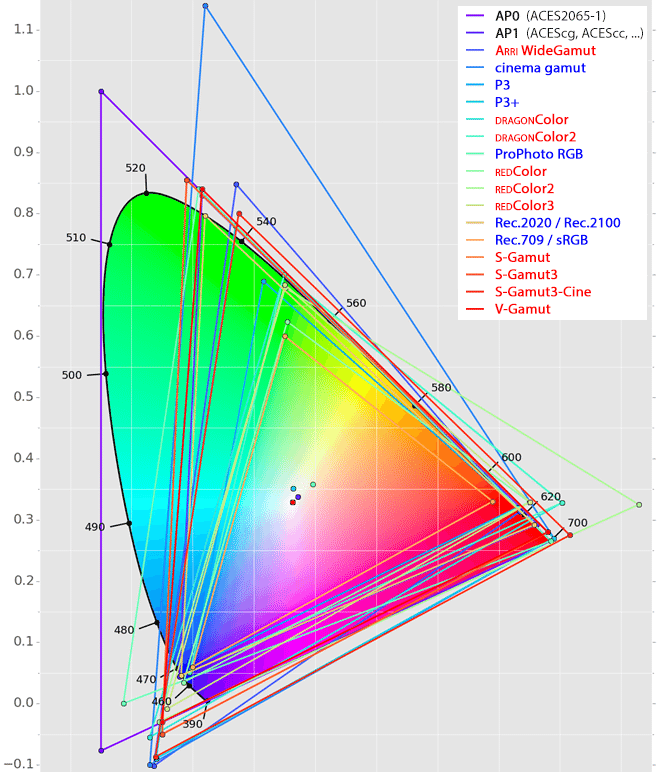

Color gamut refers to how much of the visible color spectrum an imaging system is capable of capturing or reproducing.

As with dynamic range, the effective color gamut of our image capture systems (cameras) has rapidly increased in recent years, while that of our image reproduction systems (displays) has made much slower progress.

Color gamuts such as DCI-P3 or Rec. 2020 offer a much greater range of possible colors for our images than traditional SDR gamuts, like Rec. 709. If we use these larger gamuts in combination with added dynamic range, we can make much more vivid, lifelike images.
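One rough way to compare these gamuts is to measure the triangle each one’s primaries form on the CIE 1931 xy chromaticity diagram. The sketch below does this in Python using the published red, green, and blue primaries for each standard. Treat the numbers as a ballpark only: area on the xy diagram is not perceptually uniform, and the helper names are assumptions made for illustration.

```python
# CIE 1931 xy chromaticities of the red, green, and blue primaries.
PRIMARIES = {
    "Rec. 709": [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "DCI-P3": [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "Rec. 2020": [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(points):
    """Area of the triangle spanned by three (x, y) points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


reference = triangle_area(PRIMARIES["Rec. 709"])

for name, points in PRIMARIES.items():
    area = triangle_area(points)
    print(f"{name}: xy area {area:.4f}, {area / reference:.2f}x Rec. 709")
```

By this crude measure, DCI-P3 covers roughly 1.36 times the area of Rec. 709, and Rec. 2020 roughly 1.9 times.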

Key Points

Technical details aside, the most important thing to understand about HDR is that it doesn’t represent an enhancement as much as the removal of an artificial limitation. In the realm of human vision and physical light, high dynamic range is a default condition, not an added gimmick.

It’s also worth noting that the point of delineation between HDR and SDR is fairly arbitrary, and can vary quite a bit depending on the standards and circumstances in question. But if we take the above “HDR-as-default-condition” concept as our foundation, we can instead think of dynamic range and color gamut in a more nuanced way, starting with the full dynamic range and color spectrum of human vision, and assessing where and how we’re truncating things in the process of capturing, mastering, and reproducing our images.

In an ideal imaging workflow, we would be able to capture, master, and reproduce images with a dynamic range and color spectrum equal to or greater than those of our eyes. Since no imaging pipeline currently lives up to that ideal, we simply want to evaluate where and how we’re falling short of it.

With these core concepts in place, we’re ready to answer our big question: Why should I care about working in HDR if I’m not delivering for it? The answer comes in five easy pieces.

1. Greater Control

Now that we’ve begun to think about HDR as our image’s default state, we can rightly place the burden of proof on any process that clamps or truncates our original dynamic range or color gamut.

The reality is that working with images in anything but HDR represents a needless compromise. For example, you might use Arri’s LogC to Rec. 709 LUT early on in your grading pipeline to normalize Alexa footage, or debayer R3D material into RedGamma4/DragonColor2.

In both cases, you’re moving from full dynamic range to limited dynamic range at the wrong moment, and with a method over which you have no control. No matter how great your skill, or how sharp your eye, every creative move you make from this point forward will be affected by this choice.

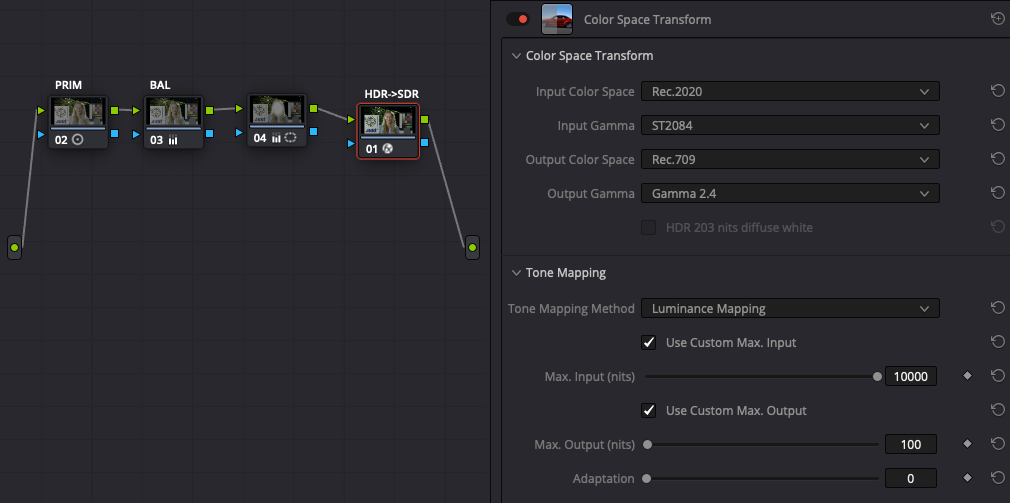

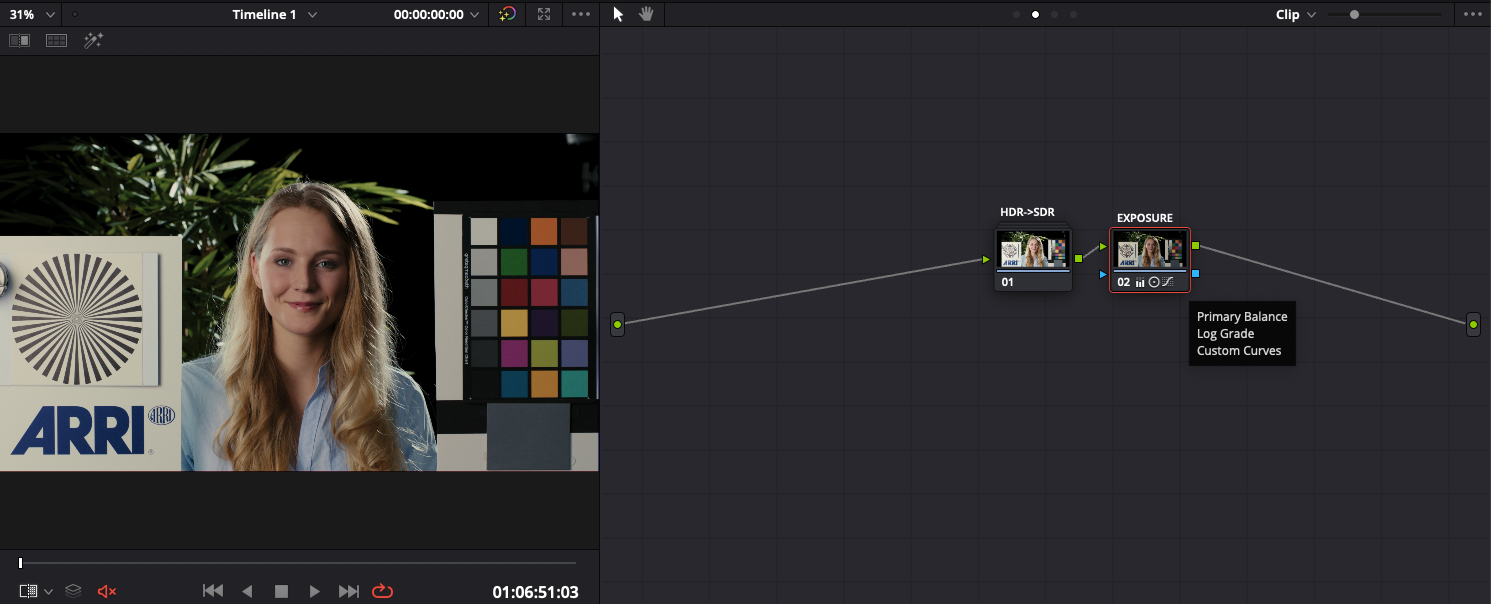

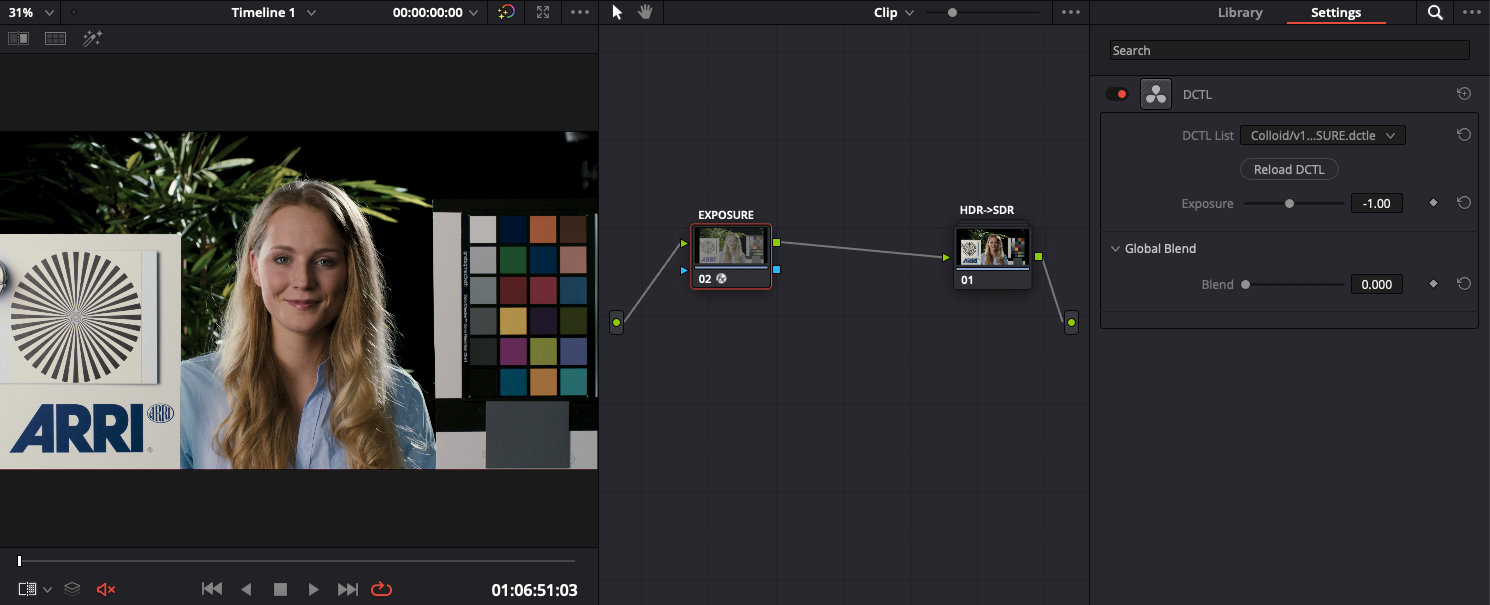

The far better alternative is to retain your image’s full dynamic range for the entirety of your grade, and to map down to your display’s dynamic range as the final step in your image pipeline, using a method you can fully understand and control, such as with Resolve’s Color Space Transform tool.

Why is this better? Because we’re explicitly controlling the entire journey of our image from sensor to screen, retaining full authorship by avoiding needless or ill-timed manipulations along the way.
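The ordering argument can be shown with a toy example. The Python sketch below is not how Resolve or any real LUT works: `to_display` is a deliberately crude stand-in that hard-clamps scene-linear values to a 0 to 1 display range, whereas real display transforms roll highlights off more gracefully. The function names and sample values are assumptions made for illustration.

```python
def to_display(linear_values, peak=1.0):
    """Crude stand-in for an SDR display transform: clamp to [0, peak]."""
    return [min(max(v, 0.0), peak) for v in linear_values]


def expose(values, stops):
    """Exposure change in linear light: each stop is a factor of two."""
    return [v * 2.0 ** stops for v in values]


# Scene-linear samples: middle grey plus three distinct highlight levels.
scene = [0.18, 1.0, 2.0, 4.0]

# Transform first, grade second: highlights are clamped before we touch them.
early = expose(to_display(scene), -2)

# Grade first, transform last: highlights are pulled into range, then mapped.
late = to_display(expose(scene, -2))

print("transform early:", early)  # [0.045, 0.25, 0.25, 0.25]
print("transform late: ", late)   # [0.045, 0.25, 0.5, 1.0]
```

In the first case the three highlight levels collapse into a single value before the grade ever sees them. In the second, the same two-stop pull keeps them distinct.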

2. Workflow Simplicity

Maintaining high dynamic range for as long as possible isn’t just about retaining control and authorship — it also allows for a simpler process with more intuitive tools and techniques.

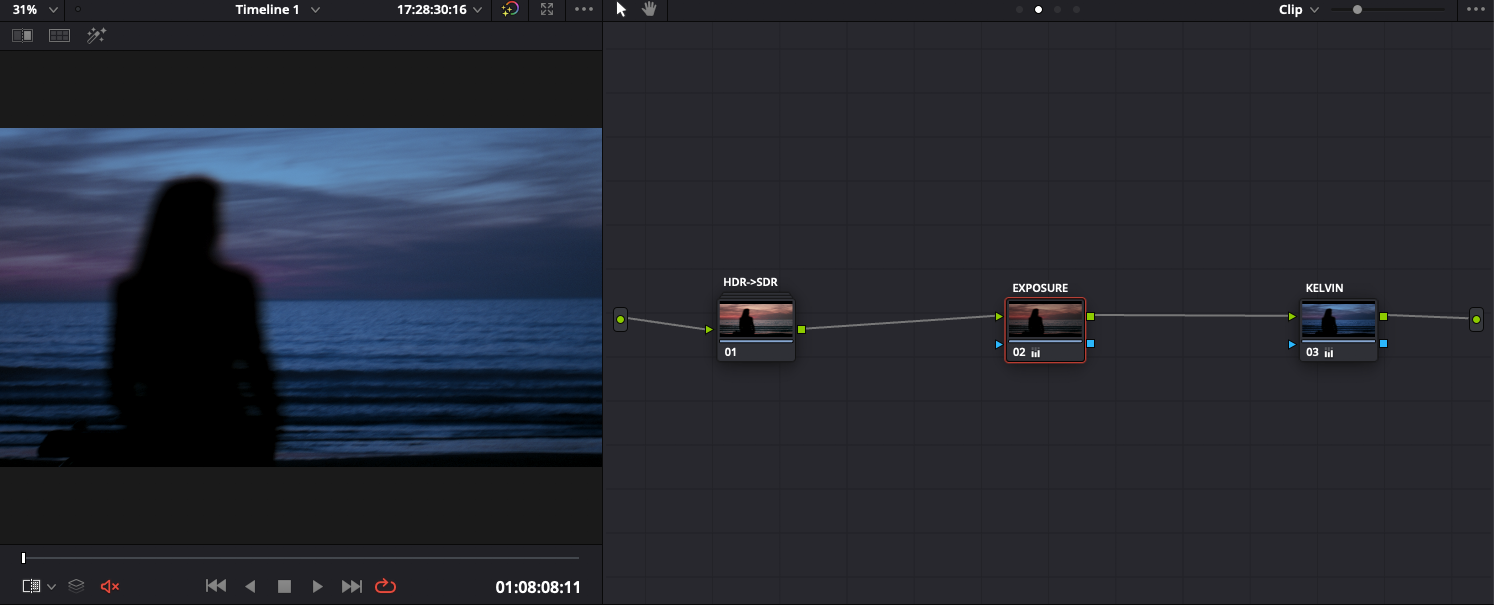

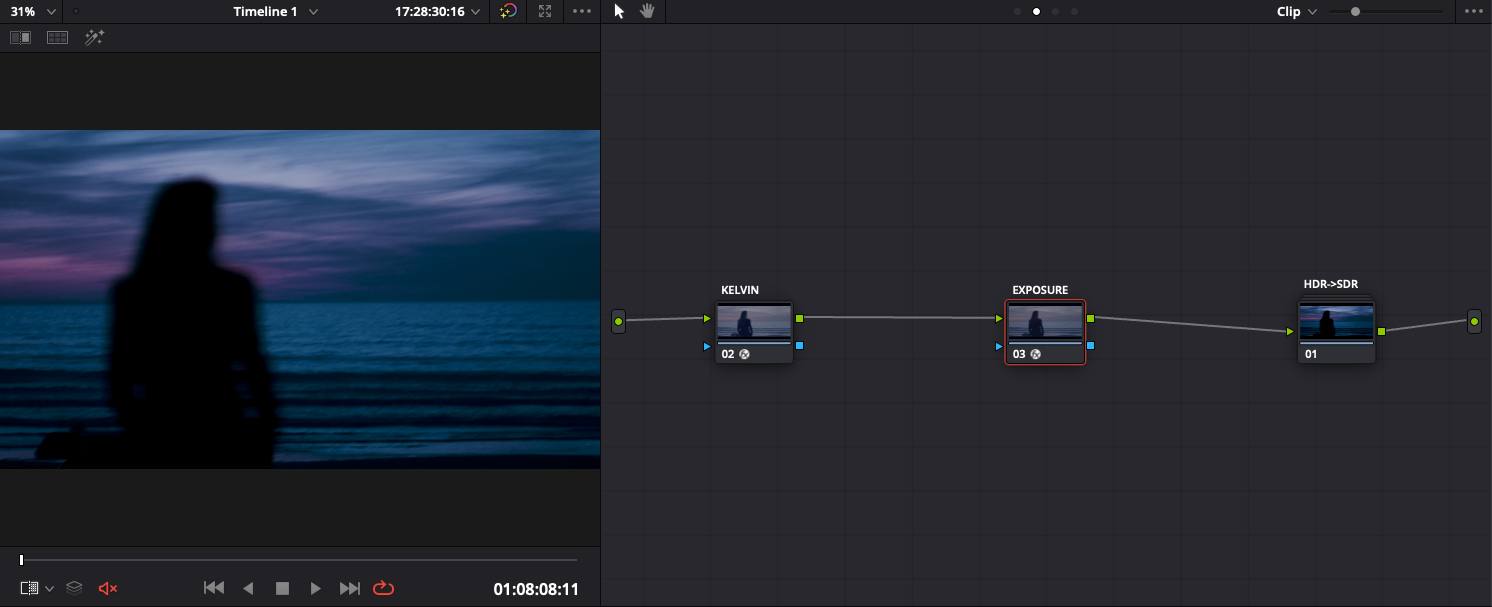

For example, in the scenario we just described, where we prematurely apply an Arri LogC to Rec. 709 LUT at the outset of our grade, making a simple one-stop exposure adjustment requires a subjective combination of lift, gamma, gain, and/or curves manipulations. By contrast, making that same change upstream of our HDR to SDR transform allows us to do it with a single offset adjustment.

This same single-tool approach can be applied to all the foundational elements of a good grade: balancing color casts, shifting color temperature, and tuning contrast and saturation. This is no coincidence: by working with our image closer to its original state, we can take a photographic, rather than a graphic approach to shaping it. Furthermore, we eliminate many would-be problems before they ever even appear, because we’re meeting the image on its own terms, rather than imposing artificial limitations which create the need for complicated workarounds.
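The one-stop exposure example can be sketched numerically. The Python below uses a hypothetical pure-log encoding (it is not Arri’s actual LogC formula, and the constants are assumptions chosen for round numbers) alongside a simple power curve standing in for a display-referred SDR signal. It measures how far the code value has to move to brighten different tones by one stop in each encoding.

```python
import math

MID_GREY = 0.18        # scene-linear reference for middle grey
STOPS_PER_UNIT = 16.0  # hypothetical: the 0..1 code range spans 16 stops
ONE_STOP_OFFSET = 1.0 / STOPS_PER_UNIT


def encode_log(linear: float) -> float:
    """Hypothetical pure-log encoding that places middle grey at 0.5."""
    return 0.5 + math.log2(linear / MID_GREY) / STOPS_PER_UNIT


def decode_log(code: float) -> float:
    """Inverse of encode_log."""
    return MID_GREY * 2.0 ** ((code - 0.5) * STOPS_PER_UNIT)


def encode_gamma(linear: float, gamma: float = 2.4) -> float:
    """Simple display-style power curve, standing in for an SDR signal."""
    return linear ** (1.0 / gamma)


for linear in (0.045, 0.18, 0.72):
    # Code-value change needed to double the light in each encoding.
    log_delta = encode_log(2 * linear) - encode_log(linear)
    gamma_delta = encode_gamma(2 * linear) - encode_gamma(linear)
    # Applying the single log offset and decoding gives twice the light.
    brighter = decode_log(encode_log(linear) + ONE_STOP_OFFSET)
    print(f"linear {linear:5.3f}: log delta {log_delta:.4f}, "
          f"gamma delta {gamma_delta:.4f}, after offset {brighter:.3f}")
```

In the log encoding the required change is the same constant for shadows, midtones, and highlights, which is why a single offset does the job. In the power-curve encoding the required change differs at every tone, which is where the juggling of lift, gamma, and gain comes from.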

For example, the image below is overexposed by about a stop, and as a result, we’re seeing some unpleasant blown-out highlights. If we attempt to tackle this issue downstream of our HDR to SDR transform, we have no choice but to use narrow and complex tonal adjustments. Instead, we can opt to adjust the base exposure prior to our SDR transform, employing a method more akin to adjusting the aperture of our camera lens.

3. Smoother Collaboration

Another benefit of working in a photographic, rather than a graphic context is that it provides a common language for collaborators.

As a colorist, I’ve worked with many filmmakers who are highly astute about the traditional SDR-oriented tools of color grading (lift, gamma, gain, and the like), and with many others who find these terms foreign and intimidating.

By contrast, virtually all filmmakers understand the medium’s native language of photography and physical light.

Depending on the filmmaker’s background, they may or may not be able to suggest a “subtle push of cyan into the lower mids,” but we all understand the concept of “cooling color temperature by a few hundred degrees.”

Because HDR is closer to the image’s original photographic state, it’s far more compatible with such thinking and methods. This provides a far more efficient and fruitful foundation for creative collaboration and experimentation.

4. Superior Quality

Using HDR workflows isn’t just about improving our process: it also leads to tangibly better results.

Adjustments made to images in an HDR state tend to feel cleaner and more organic, and to remain consistent across multiple shots and scenes. Again, this is no coincidence, because we’re working with the image closer to its native state. Think of it like baking a cake: will you have better luck perfecting the recipe before or after it comes out of the oven?

For example, let’s say we want to create a cool, underexposed look for the image below. In the first frame, we’ll make our adjustment on the image in an SDR state, and in the second we’ll make the adjustment while still in HDR. Even though both are ultimately being transformed into SDR, the latter version is far more vibrant and lifelike.

5. Improved Flexibility

There was a time not long ago when you could reasonably assume that a piece of content was bound for a single outlet — usually either broadcast TV or a theatrical film print.

But today, even if you’re not yet delivering for HDR, the chances that your final masters will only ever go to one type of screen are virtually zero.

From mobile devices to TVs to theatrical projectors, our content is being viewed on a greater variety of screens than ever, and a key part of our job as post professionals is to ensure as consistent a viewing experience as possible across all of these formats.

So what’s the best way to do this? You guessed it: work in a unified HDR grading space from which we can accurately map to whatever display format our deliverable demands.

Taking this approach puts us in an ideal position to efficiently create additional masters with a minimum of subjective eye-matching — even for display standards that have yet to be invented! This allows post-production professionals to preserve their margins, as well as their creative intent.
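As a rough illustration of the idea, the Python sketch below maps one graded master to several display targets by changing a single parameter. The rolloff curve is a simple Reinhard-style formula chosen for brevity, not the mapping used by any particular standard or grading tool, and the target names and peak luminance values are illustrative assumptions.

```python
def map_to_display(master_nits: float, display_peak_nits: float) -> float:
    """Reinhard-style rolloff: output approaches, but never exceeds, the peak."""
    return master_nits / (1.0 + master_nits / display_peak_nits)


# Illustrative targets, described only by their peak luminance in nits.
TARGETS = {
    "SDR, 100 nits": 100.0,
    "HDR, 1000 nits": 1000.0,
    "hypothetical future display, 4000 nits": 4000.0,
}

# One graded master, expressed as scene luminance samples in nits.
graded_master = [1.0, 50.0, 500.0, 5000.0]

# Every deliverable comes from the same master and the same function.
deliverables = {
    name: [map_to_display(value, peak) for value in graded_master]
    for name, peak in TARGETS.items()
}

for name, values in deliverables.items():
    print(name, [round(v, 1) for v in values])
```

The creative decisions live in `graded_master`, and each deliverable is derived from it, so adding a new target means adding one entry to `TARGETS` rather than regrading by eye.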

HDR’s Bright Future

I hope I’ve left you with a compelling argument for incorporating HDR methodologies into your post workflows. Ultimately, I’m not here to convince you that HDR is a trend worth betting on, but rather that it offers a framework for more organic, collaborative, and efficient mastering of our images. Remember: HDR isn’t about an enhancement, but the removal of a limitation.

When we look back in years to come at the rise of HDR, a huge part of its legacy will be that it created a demand for post professionals able to think and work like filmmakers, rather than graphic artists. Those of us able to answer this call have an exciting future ahead, and those who refuse will eventually be left behind, regardless of what they’re delivering.

Oh, and in case you hadn’t already heard, Frame.io 3.7 supports HDR.