As filmmakers in the digital age, our data is our most valuable asset. If you lose your data, you lose your work, and possibly your professional reputation. And yet, for some reason, many editors and filmmakers don’t keep robust backups. The culprit is a simple psychological concept: backing up properly requires immediate, clear sacrifices (time and money), in order to protect against an uncertain future scenario. It’s easy to get lazy about backups.

Hard drives fail. All the time. If you haven’t had a hard drive die on you yet, you probably haven’t been in the business for very long. This article isn’t going to teach you a comprehensive strategy for how to handle your backups, but I will cover what I feel are the most important concepts, listed in priority order. First, go watch this video. It’s quite entertaining, and it also shows you how real this danger is.

Alright, here are the big mistakes that you need to avoid:

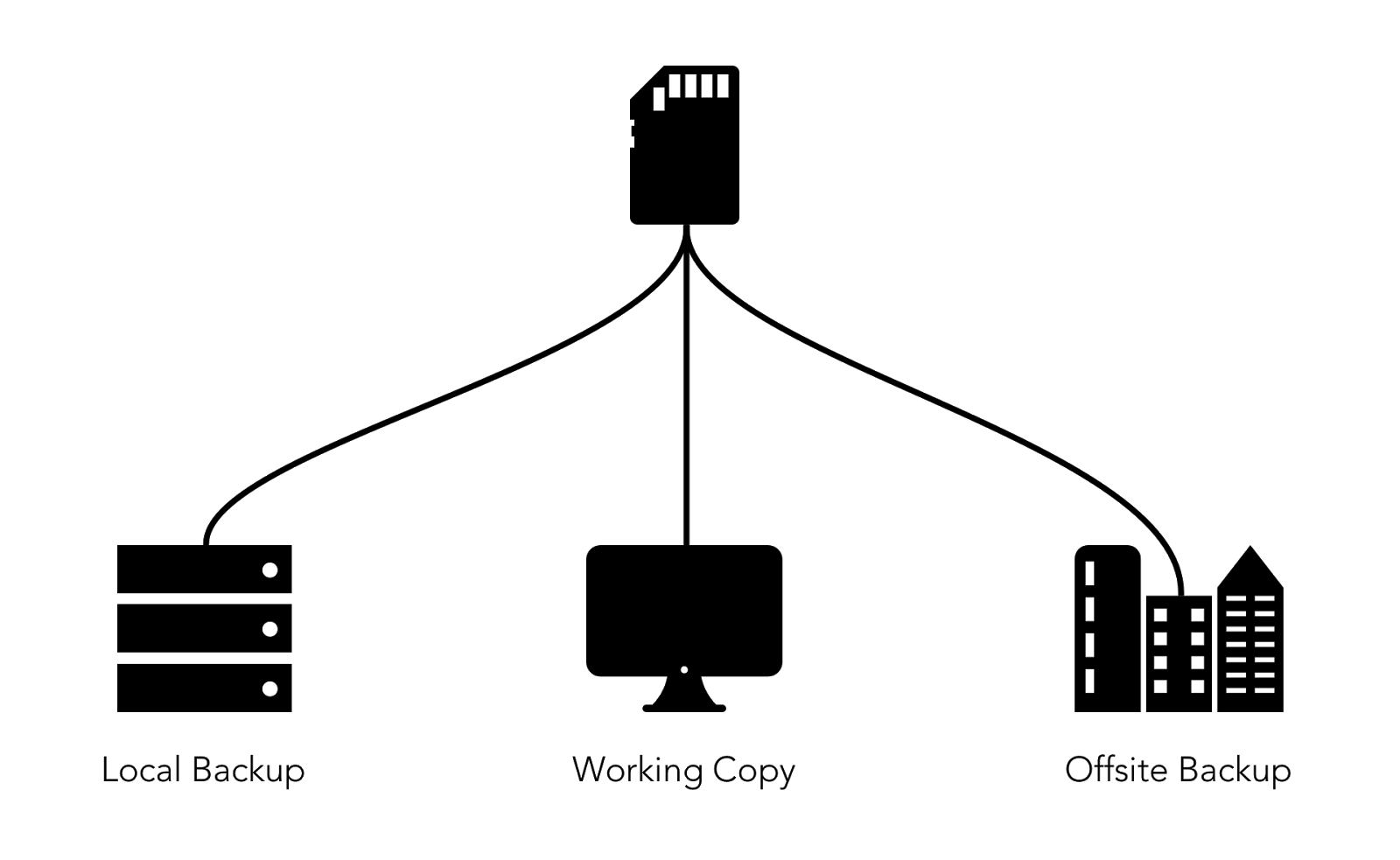

1. You only have one backup

Did you watch the Pixar video? They nearly lost all of Toy Story 2 (which would have killed Pixar) when both their main system and their backup were lost at the same time. Actually, they didn’t fail at exactly the same time. The backup failed at some unknown point in time, and they only discovered the issue when their main system failed. If your backups are offline, you won’t know if they fail “silently.” Simultaneous failure happens. Always keep multiple backups.

What are the chances that two drives will fail?

It’s nearly impossible to calculate the exact likelihood that you will have two drives fail simultaneously because each hard drive model is different, but it definitely happens. The Toy Story example is perhaps the most famous one, but there are hundreds of stories of people losing data because they only had two copies. It’s always a trade-off between the security of your data and the inconvenience or cost of safety precautions, and the trade-off will depend on the value of your data. If I’m just playing around, shooting a project for fun that I will probably never publish, then I don’t worry so much. If I am doing work for a paying client, however, I will follow all of the principles in this article. You get to make your own decisions, but after reading this article, you will at least be making informed decisions.

2. Your backups are connected

If you have two copies of a file, and they are connected to the same machine (even via a network), you should not consider one of them to be a backup of the other because a single issue could erase them both. A virus could delete all of the files that your computer has access to, or a strong power surge could fry all of the devices you have connected to the same electrical system.

Takeaway: Each of your backups should be separate from the others with no cables connecting them.

3. Your backups are all in one place

At my last company, a gang of very professional burglars cleaned out our entire office: laptops, monitors, and (you guessed it) hard drives. Tens of thousands of dollars worth of equipment. They took everything that looked expensive (and most of it was), and we never got any of it back. Luckily, we had off-site or cloud backups of all of our most important data. In spite of all of the warnings around the internet, I still see people keeping all of their backups in the same building. No matter how many copies of your footage you have, if they are all in the same physical location, your data is very vulnerable. A fire, a flood, an earthquake, even a powerful magnet can destroy all of your backups at once.

Takeaway: Always keep off-site backups.

4. Your RAID is your local backup

RAIDs are wonderful things. They give you extra transfer speeds, they allow you to combine multiple drives to simplify your setup, and they can also help protect against drive failure. It can be tempting to think that storing your data on your RAID gives you an automatic backup. The problem is that a RAID only protects against drive failure, which is only one of many ways that you can lose data. You could knock it over, or spill a cup of coffee into it. The logic board could fail, corrupting the data on both drives. Most RAIDs don’t even have the ability to recover from file deletion. If you click “delete”, your RAID will delete the file from both drives simultaneously. Some RAID configurations (like RAID 1 and RAID 10) provide robust protection against hard drive failure, which is wonderful, but consider that an added bonus. A RAID is still one device, and so it can fail all at once.

Takeaway: A RAID is great, but you still need two other copies of your data.

5. You’re using RAID 5

RAID 5 is a particularly volatile RAID configuration that can trick you into a false sense of security. Because of how RAID 5 works, double failures (where two disks have errors simultaneously) are easy to miss until it’s too late and your data is gone. RAID 5 sounds amazing on paper, but in practice, it doesn’t even offer the promised protection against a single drive failure. Essentially, what happens is that one file on one disk gets corrupted silently. Neither you nor the system realizes this, because the rest of the files on that disk look just fine. You carry on happily. Then, a different disk fails. You stick in a new disk and then the system discovers the previous failure (which may have happened months ago), and then your entire rebuild process fails. In the best case, you lose that one file, and the rest of your data is fine. In the worst case, you lose all of your data.

Takeaway: Don’t use RAID 5 unless you are comfortable losing the entire RAID.

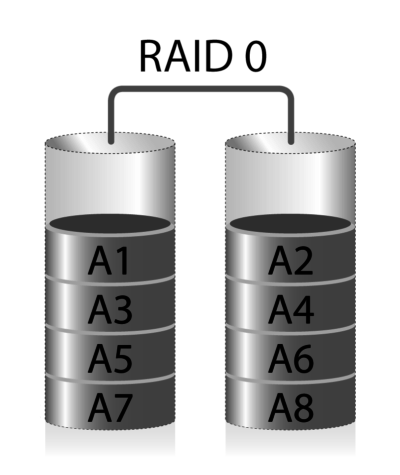

6. You’re using RAID 0

RAID 0 offers wonderful speed and simplicity: with just two drives, you can double your read and write speeds (RAID 1, on the other hand, only doubles your read speeds, not your write speeds). A RAID 0 is a striped RAID, which means that every file is broken up into small pieces which are written alternately to each hard drive.

This means that a RAID 0 with two drives gives you double the read and write speed of a single drive, but it also gives you roughly double the chance of failure. If either drive fails, then you lose ALL of your data. A RAID 0 can serve a legitimate use case, but most people don’t realize that RAID 0 is extra-volatile. Not only does a RAID 0 not give you data protection, but it is roughly twice as likely to fail as a single hard drive is. RAID 10 is an excellent alternative to RAID 0. It gives you fast reads and writes, but it also gives you the ability to recover from a drive failure. The downside is that it requires twice as many drives as RAID 0. That’s why RAID 0 is so tempting. It can be a great idea to use a RAID 0 because of its speed and simplicity, but if you do, you have to adjust your backup strategy accordingly, to compensate for the higher likelihood of a RAID 0 failure.

Takeaway: Don’t use RAID 0 unless you have a robust backup situation to recover from the higher likelihood of failure.
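To put a rough number on "twice as likely," here is a minimal Python sketch. It assumes each drive fails independently with the same probability over some period, which is a simplification (drives from the same batch often fail together). The 2% figure and the function name are made up for illustration, not measured values.

```python
def striped_array_failure_probability(p_drive: float, n_drives: int) -> float:
    """Chance of losing a striped (RAID 0) array, where ANY single drive
    failure destroys everything. Assumes independent, identical drives."""
    if not 0.0 <= p_drive <= 1.0:
        raise ValueError("p_drive must be between 0 and 1")
    if n_drives < 1:
        raise ValueError("n_drives must be at least 1")
    # The array survives only if every drive survives.
    return 1.0 - (1.0 - p_drive) ** n_drives


# Hypothetical example: a 2% chance that one drive fails in a given year.
single = striped_array_failure_probability(0.02, 1)
two_drive_raid0 = striped_array_failure_probability(0.02, 2)
print(f"single drive: {single:.4f}, two-drive RAID 0: {two_drive_raid0:.4f}")
```

With small failure probabilities the result is very close to double the single-drive risk, and it keeps climbing as you add more drives to the stripe.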

7. You don’t know which backups are where

You’re pretty sure that you have backups—lots of them. In fact, pretty much everything is backed up somewhere. You think. Maybe?

If you don’t know where your backups are, that can create all kinds of problems. You could accidentally overwrite a backup that you need to keep. Your memory could be wrong, and you might not even have the backups that you think you have. You may have misplaced the drive, without realizing it. You could end up with two backups on the same drive (I have done this…). Keeping track of your backups is very easy—there’s no need to get complicated. Just keep a list of each project and the locations of its backups. I highly recommend giving each hard drive a unique name or number and writing it on the drive itself. That gives you a quick and simple mapping system.

Takeaway: Keep track of your backups.
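A spreadsheet or a notebook is plenty, but if you prefer something scriptable, the list could look like this minimal Python sketch. The project names, drive labels, and the two-location minimum are all hypothetical; adjust them to your own rules.

```python
from typing import Dict, List

# Hypothetical catalog: project name -> labels of the drives (or services)
# that hold a backup. The labels match what is written on each drive.
catalog: Dict[str, List[str]] = {
    "project_a": ["DRIVE-01", "DRIVE-02", "CLOUD"],
    "project_b": ["DRIVE-03", "DRIVE-03"],  # two "backups" on one drive
    "project_c": ["DRIVE-02"],
}


def audit(catalog: Dict[str, List[str]], min_locations: int = 2) -> Dict[str, List[str]]:
    """Return the projects whose backups look risky, with the reasons."""
    problems: Dict[str, List[str]] = {}
    for project, locations in catalog.items():
        issues = []
        distinct = set(locations)
        if len(distinct) < len(locations):
            issues.append("same location listed more than once")
        if len(distinct) < min_locations:
            issues.append(f"only {len(distinct)} distinct location(s)")
        if issues:
            problems[project] = issues
    return problems


for project, issues in audit(catalog).items():
    print(project, "->", "; ".join(issues))
```

The check for repeated labels catches exactly the mistake I described above: two backups that turn out to live on the same drive.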

8. You’re not doing continuous project-file backups

Project files (the ones generated by your NLE) are so small nowadays that you’d be foolish not to keep running backups. If you use Dropbox or Google Drive (or another cloud backup service that uses watch folders), tell your NLE to put its auto-saves inside the backed-up folder. You can keep your main project file wherever you like, but your backups will be synced to the cloud. If you spill coffee on your computer, you still have all of your work saved online.

In Premiere: You can change the autosave location in Project Settings under Scratch Disks. In FCP X: Go to Library Properties, under Storage Locations, click Modify Settings and then choose the backups folder. Media Composer and Resolve don’t allow the user to specify the location of autosaves; they are kept in a particular folder that can’t be changed. You could, however, keep the main project file itself in Dropbox, and it would upload a new version every time you saved the project. Or you could use a scripting tool like AppleScript (or PowerShell on Windows machines) to regularly copy the auto-backups from the default folder into your Dropbox/Drive folder.

Takeaway: Point your auto-saves to Dropbox, Drive, or another cloud backup.
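For NLEs that won’t let you move the autosave folder, the copy step could be sketched like this. I’m using Python here instead of AppleScript or PowerShell simply because it runs on both Mac and Windows. The function name is my own, and you would need to look up your NLE’s actual autosave folder and pass your own synced folder; nothing here is specific to any one program.

```python
import shutil
from pathlib import Path
from typing import List


def copy_new_autosaves(autosave_dir: Path, synced_dir: Path) -> List[Path]:
    """Copy autosave files that are missing from, or newer than, the copies
    in the cloud-synced folder. Returns the list of files that were copied."""
    synced_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for src in sorted(autosave_dir.iterdir()):
        if not src.is_file():
            continue  # this sketch ignores subfolders
        dst = synced_dir / src.name
        if not dst.exists() or src.stat().st_mtime > dst.stat().st_mtime:
            shutil.copy2(src, dst)  # copy2 also preserves the modified time
            copied.append(dst)
    return copied


# Example call (both paths are placeholders, not real defaults):
# copy_new_autosaves(Path("/path/to/nle/autosaves"), Path.home() / "Dropbox" / "autosaves")
```

To make it "regular," you would run the script on a schedule with whatever your system offers, such as a scheduled task. The script only ever copies into the synced folder and never deletes anything, so an old autosave that your NLE prunes will still exist in the cloud.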

9. You don’t mount your memory cards in read-only mode

This tends to be a problem more for shooters and camera people than for editors, which is why it’s not at the top of this list, but I’m including it here because many editors also shoot and have to juggle memory cards. When you take the memory card out of your camera, you are in a very vulnerable state from a data perspective. Unless your camera can simultaneously record to two different memory cards, you only have one copy of your data in existence. Your first action should be to make a backup of that card, and when you do so, you should always mount it as read-only, if possible.

This is one of the reasons why I love the SD card format. The convenient switch on the side of the card allows you to lock the card to read-only mode as soon as you remove it from the camera. Read-only mode protects you from errors that could damage the files on your card if you suddenly lost power or accidentally pulled the card out of your computer. It also protects you from accidentally deleting files from your memory card when you thought you were deleting files from a different folder on your computer.

If you’re savvy with the terminal, you can mount any hard drive or memory card in read-only mode with these commands on a Mac. First, figure out which disk you’ve just plugged in.

```shell
diskutil list
```

And then unmount it and remount it with the readOnly flag.

```shell
diskutil unmount /dev/yourdisk
diskutil mount readOnly /dev/yourdisk
```

It’s also possible to mount a drive as read-only on Windows, but it’s a bit more complicated.

Takeaway: Mount your memory cards as read-only if possible.

10. Your memory card is your backup

It can be very tempting to think of the memory card from your camera as a backup of your footage, and technically it is. For now. The problem is that most people need to keep using those memory cards, which means that they have to keep erasing them. If you have enough memory cards that you can afford to set them aside for long periods of time, and if you have a robust system for keeping track of exactly what footage is currently on each of your cards, then it can be okay to consider your memory card to be a backup of your footage. But in my experience, that is a rare situation and occurs mainly on higher-budget shoots.

Takeaway: Your memory card should be a temporary backup only until you have time to copy to hard drives.

11. Your backups are in the cloud, and the download speed is slow

There are some excellent cloud services that allow you to back up very large amounts of data, and some people use them instead of backing up to hard drives. That can be a good strategy in some cases, but it’s important to consider the time it would take to download your files if your local copies fail. You might be able to store your 2 TB of project data in the cloud, but if you can only download that data at 10 Mbps, it will take you more than 18 days to download it all! (Use these formulas to calculate the download time.) If you’re on a tight schedule, that could cause huge problems.

Takeaway: If you can’t download your files quickly, only use cloud backups for long-term archiving.
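If you want to check the download-time arithmetic for your own numbers, here is a small Python sketch. It assumes decimal units (1 TB = 10^12 bytes, 1 Mbps = 10^6 bits per second) and a connection that sustains its full speed the whole time, which real connections rarely do, so treat the result as a best case. The function name is my own.

```python
def download_days(size_terabytes: float, speed_mbps: float) -> float:
    """Best-case number of days needed to download `size_terabytes`
    over a link running at `speed_mbps` megabits per second."""
    if speed_mbps <= 0:
        raise ValueError("speed_mbps must be positive")
    bits = size_terabytes * 1e12 * 8        # terabytes -> bytes -> bits
    seconds = bits / (speed_mbps * 1e6)     # megabits per second -> bits per second
    return seconds / 86_400                 # seconds in a day


# The example from this section: 2 TB at 10 Mbps.
print(f"{download_days(2, 10):.1f} days")
```

For 2 TB at 10 Mbps this comes out to about 18.5 days. At 100 Mbps the same restore takes under two days, which is why it’s worth running the numbers before you rely on the cloud as your only second copy.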

Roll your own

At the end of the day, unfortunately, many people only start implementing robust backup practices once they’ve lost important data. I personally have had hard drives fail on 5 different occasions, and that doesn’t count the number of times that I’ve accidentally deleted files that I needed. These are recommendations, not rules. You can break them if you need to, but consider carefully before you do. Consider the value of the data that you are protecting. If your professional reputation is on the line, then handle your files with care.