In the early days of digital cinematography, it seemed like 2K might be enough for digital cinema and HD would be a decades-long standard for broadcast.

But soon enough, Hollywood studios started finishing at 4K. And streamers like Netflix and Amazon Prime made 4K a new delivery standard for the era of peak TV. As DPs learned how 4K cameras could improve the quality of 2K deliverables, the benefits of shooting for higher resolution delivery started to become clear.

And then RED Digital Cinema introduced 5K, 6K and 8K sensors, Sony launched the 6K Venice, and ARRI stepped up with the 4.5K Alexa LF and the 6.5K Alexa 65.

To some degree, it makes perfect sense: more pixels means more detail, which means better pictures. Right?

But then Blackmagic Design forced the question last year with the introduction of the URSA Mini Pro 12K: how many Ks do we really need?

To explore the question, we interviewed working cinematographers to find out how they’re already using 12K as a tool, and we sat down with Blackmagic Design to hear their rationale for pushing capture resolutions even higher.

What is it good for?

“The idea of 12K isn’t that everyone should shoot 12K,” says Bob Caniglia, Blackmagic’s director of sales operations in North America.

“The idea is you should use 12K for the situations that require it.”

So what kind of situations need almost ten times the resolution of UHD?

Think about the 20-foot-high LED walls that are used for Mandalorian-style productions, where multiple shots are stitched together to create an ultra-high-resolution wraparound virtual set. Or massive outdoor screens that require similarly huge amounts of detail to make an impact.

Even if you only expect to deliver at lower HD or UHD resolutions, more pixels give you more options.

If you shoot at 12K with a handheld camera, the extra resolution gives you plenty of extra margin in post for stabilization and reframing, yielding a final result that mimics a well-composed Steadicam shot.

And if you shoot a large scene with a single 12K camera—something like a wide shot of a landscape or football field—you can zoom into specific areas of interest and extract great-looking frames that still have full 4K resolution.

In essence, shooting 12K is like having multiple cameras on location for the price of one (though, of course, lens choice will impact the creative possibilities of the shot tremendously).
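To put rough numbers on that reframing and stabilization headroom, here is a minimal Python sketch. It assumes a 12K frame of 12288 x 6480 pixels and a 4K DCI delivery frame of 4096 x 2160; neither figure is stated in this article, and the function names are purely illustrative.

```python
from typing import NamedTuple


class Frame(NamedTuple):
    width: int
    height: int


# Assumed frame sizes (not stated in the article).
CAPTURE_12K = Frame(12288, 6480)
DELIVERY_4K = Frame(4096, 2160)


def punch_in_tiles(capture: Frame, delivery: Frame) -> int:
    """Count non-overlapping delivery-size windows that fit in the capture frame."""
    return (capture.width // delivery.width) * (capture.height // delivery.height)


def centered_margin(capture: Frame, delivery: Frame) -> Frame:
    """Pixels of slack on each side of a centered, unscaled delivery-size crop."""
    return Frame(
        (capture.width - delivery.width) // 2,
        (capture.height - delivery.height) // 2,
    )


if __name__ == "__main__":
    print("4K windows in one 12K frame:", punch_in_tiles(CAPTURE_12K, DELIVERY_4K))
    print("Margin around a centered 4K crop:", centered_margin(CAPTURE_12K, DELIVERY_4K))
```

Under those assumptions, nine separate 4K windows fit inside a single 12K frame, and a centered 4K crop has a full 4K frame's worth of slack on every side for a stabilizer to work with. Real shots rarely use a strict 1:1 crop, so treat this as an upper bound on headroom rather than a workflow recipe.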

“It’s also an 80-megapixel still camera,” notes Blackmagic lead camera specialist Tor Johansen. “You can do very large prints, up to 42 inches, and still be super clean and sharp.”

But even if 12K is technically advantageous, smart DPs are always wary of workflow requirements. You won’t save much time shooting if the 12K files require way more storage than you’ve budgeted for, or if the processing requirements slow post-production to a crawl.

As it turns out, a 12K format can be manageable and introduces some compelling new creative capabilities.

Beyond the Bayer filter

Let’s start by taking a look under the hood of the URSA Mini Pro 12K.

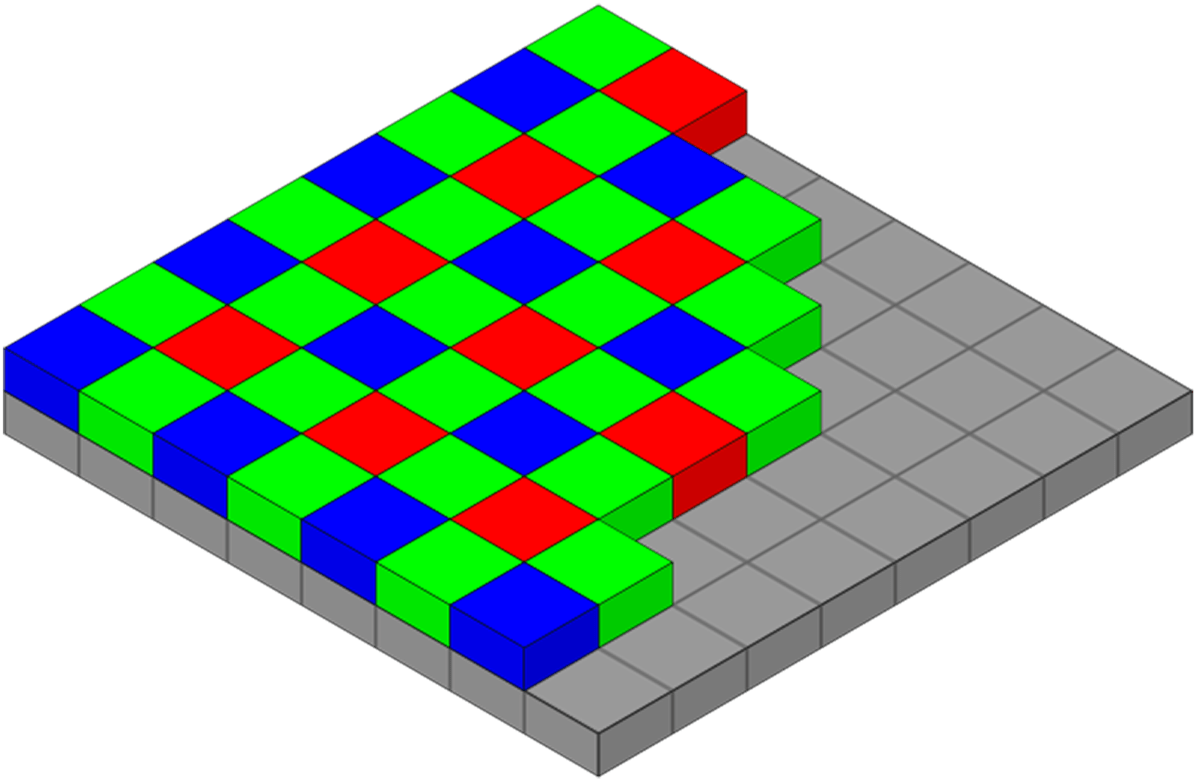

Blackmagic Design chose a brand-new sensor design for the URSA Mini Pro 12K that, uniquely, doesn’t use the Bayer pattern filter.

What does that mean? The new sensor captures equal amounts of red, green and blue light.

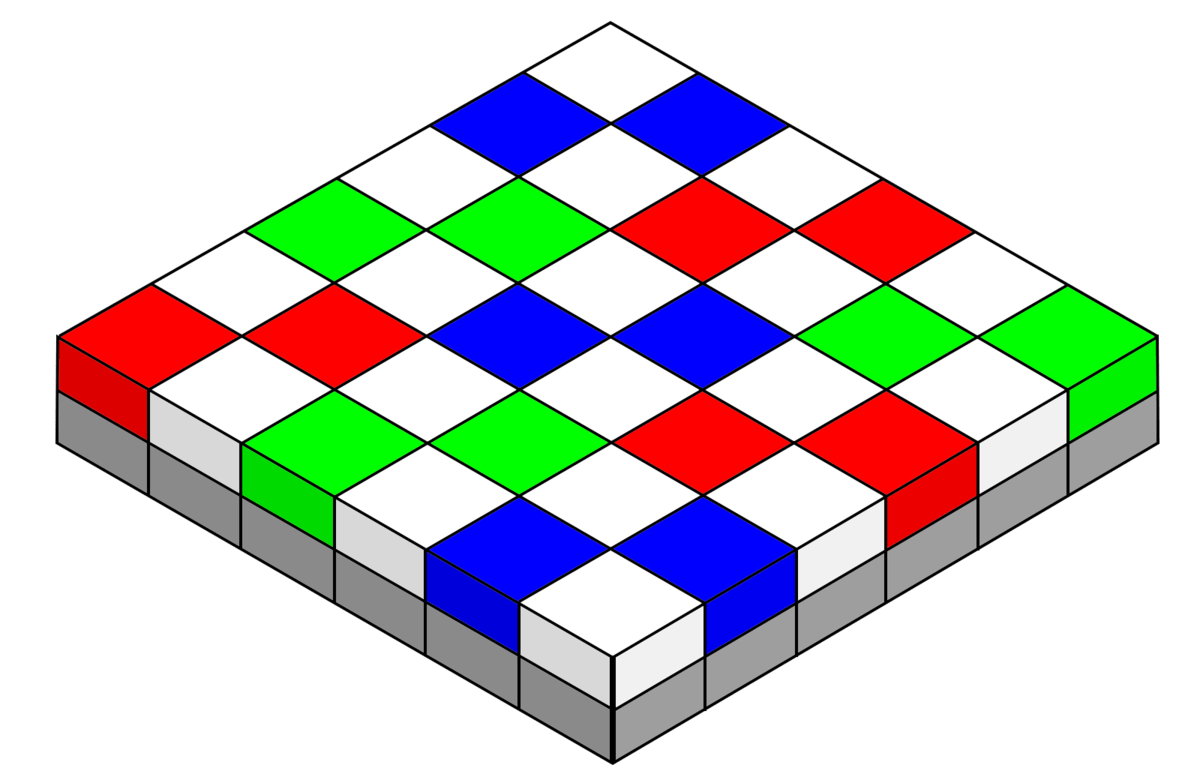

The Bayer color pattern (used by most digital cameras on the market) was devised to match the physiology of the human visual system. Simply put, our eyes are more sensitive to green light than other wavelengths, so the Bayer pattern has two green-sensitive photosites for every one red or blue photosite.

But BMD’s 12K sensor uses a different color filter array (CFA) that captures “symmetrical” color information, which increases color resolution. It also simplifies the mathematics involved to the point where images can be scaled on the sensor itself.

So what does that mean in the most practical sense?

It means that, whether you decide to shoot in 12K, 8K, or 4K, the image will be sampled from the sensor’s full capture area, rather than from a smaller, windowed area. You’ll get exactly the field of view you’d expect from a given piece of glass and a Super 35 sensor, no matter which capture resolution you choose.

Anyone who’s ever suddenly realized on set that their widest lens isn’t wide enough to capture a scene in a camera’s cropped or windowed mode will appreciate just how useful this is. It allows the camera to be more usable for a wider range of projects.

At the same time, scaling the image on the sensor itself reduces the amount of data coming off the sensor, meaning the camera can record at higher frame rates across the board: 60fps at 12K and 120fps at 8K.

(It’s worth noting that there is a Super 16 mode that lets you record at up to 220fps from a cropped 4K portion of the 12K sensor. This might come in handy if you need those high frame rates and don’t mind the narrower field of view of the windowed image.)

Small photosites, big dynamic range

One more thing about that new color filter.

While larger photosites generally allow the collection of more light (which means more sensitivity and more dynamic range), squeezing higher resolutions onto sensors means you need smaller, more tightly packed pixels. And that’s a significant trade-off.

This inherent compromise is one of the stated reasons ARRI held out so long before releasing cameras that record at 4K and higher resolutions: the Alexa’s 3.4K sensor had reached a sweet spot between sharpness and sensitivity, trading sheer resolution for great low-light performance and low noise.

To combat that effect, the URSA Mini Pro 12K’s CFA includes not just red, green and blue photosites but also “white” or transparent photosites, as many as all of the color-filtered photosites combined, that allow it to capture additional information about light intensity.

BMD doesn’t disclose all the details about the exact color configuration, but let’s think of it as a 6×6 grid with six red, six green, and six blue photosites along with 18 white photosites that collect more light than their color-filtered counterparts.
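As a quick illustration of what that simplified 6×6 description implies, the sketch below compares the share of photosites devoted to each channel with a standard 2×2 Bayer tile. It uses only the counts given above; the real arrangement of the photosites is not public, so nothing here models an actual layout, and the names are illustrative.

```python
from fractions import Fraction
from typing import Dict

# Photosite counts per 6x6 tile, following the simplified description in the text.
RGBW_TILE: Dict[str, int] = {"red": 6, "green": 6, "blue": 6, "white": 18}

# A standard Bayer 2x2 tile: one red, two green, one blue photosite.
BAYER_TILE: Dict[str, int] = {"red": 1, "green": 2, "blue": 1}


def channel_shares(tile: Dict[str, int]) -> Dict[str, Fraction]:
    """Return each channel's fraction of the photosites in one tile."""
    total = sum(tile.values())
    return {name: Fraction(count, total) for name, count in tile.items()}


if __name__ == "__main__":
    for label, tile in (("RGBW 6x6", RGBW_TILE), ("Bayer 2x2", BAYER_TILE)):
        shares = channel_shares(tile)
        print(label, {name: str(share) for name, share in shares.items()})
```

On these counts, half of the photosites are unfiltered and the three colors get an equal one-sixth each, whereas Bayer gives green half of the photosites and red and blue a quarter each.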

It’s important to note here that the “RGBW” grid isn’t a new idea. But Blackmagic’s use of the tech might be.

They claim that combining the luma-only photosites with color data from the RGB photosites helps maintain a full dynamic range while keeping photosites as small as possible. And with a pixel pitch of just 2.2 microns and 14 stops of dynamic range on the new sensor, we’re inclined to believe them (for contrast, the URSA Mini Pro 4.6K’s sensor has a pixel pitch of 5.5 microns with 15 stops).
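The gap between those two pixel pitches is easier to appreciate as an area. The following sketch works it out from the quoted pitches, treating photosites as simple squares. It also estimates the physical size of the 12K sensor, assuming 12288 x 6480 photosites, a figure not given in this article.

```python
# Pixel pitches quoted in the text, in microns.
PITCH_12K_UM = 2.2
PITCH_4_6K_UM = 5.5

# Assumed photosite counts for the 12K sensor (not stated in the article).
ASSUMED_12K_WIDTH_PX = 12288
ASSUMED_12K_HEIGHT_PX = 6480


def photosite_area_ratio(larger_pitch_um: float, smaller_pitch_um: float) -> float:
    """How many times more area a square photosite of the larger pitch covers."""
    return (larger_pitch_um / smaller_pitch_um) ** 2


def sensor_span_mm(pixel_count: int, pitch_um: float) -> float:
    """Physical span of a row or column of photosites, in millimeters."""
    return pixel_count * pitch_um / 1000.0


if __name__ == "__main__":
    ratio = photosite_area_ratio(PITCH_4_6K_UM, PITCH_12K_UM)
    width = sensor_span_mm(ASSUMED_12K_WIDTH_PX, PITCH_12K_UM)
    height = sensor_span_mm(ASSUMED_12K_HEIGHT_PX, PITCH_12K_UM)
    print(f"Area ratio: {ratio:.2f}x")
    print(f"Estimated 12K sensor size: {width:.1f} mm x {height:.1f} mm")
```

By this estimate, each photosite on the 4.6K sensor covers about 6.25 times the area of one on the 12K sensor, which makes a one-stop difference in quoted dynamic range look modest. The estimated sensor size of roughly 27 mm by 14.3 mm is in the neighborhood of the Super 35 format mentioned earlier.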

Of course Blackmagic isn’t using actual magic in their hardware design. Other companies are already experimenting with different CFA tech.

For example, Sony’s “Quad Bayer” filter arranges pixels in 2×2 arrays of a single color, rather than alternating colors photosite by photosite. Samsung calls the same technology “Tetracell.” And these rival technologies have already been on the market for several years.

But rather than detracting from BMD’s claims, this competition helps validate them. There seems to be a growing recognition of the Bayer pattern’s limits, and a willingness by manufacturers to experiment with new types of CFAs that provide specific technical advantages.

In the case of BMD’s new 12K sensor, that means better color fidelity and greater dynamic range at even higher resolutions.

Making it manageable: Blackmagic RAW

So despite the increased overall resolution, BMD’s 12K sensor captures an image that maintains a good amount of dynamic range. But how do you handle that amount of data?

That’s where Blackmagic RAW comes into play.

This new codec is designed to relieve some of the pressure on post-production workflows by handling part of the raw processing inside the camera itself.

Now if that statement sets off any alarm bells in your mind, pause and take a step back for a second. While it’s true that Blackmagic RAW isn’t a completely raw format, this approach provides some considerable benefits.

First, because some of the heavy computational lifting is handled at the time of capture, many post workflows can adopt 12K without massive IT upgrades. The footage can be played back on more standard computers, rather than being limited to beefy workstations.

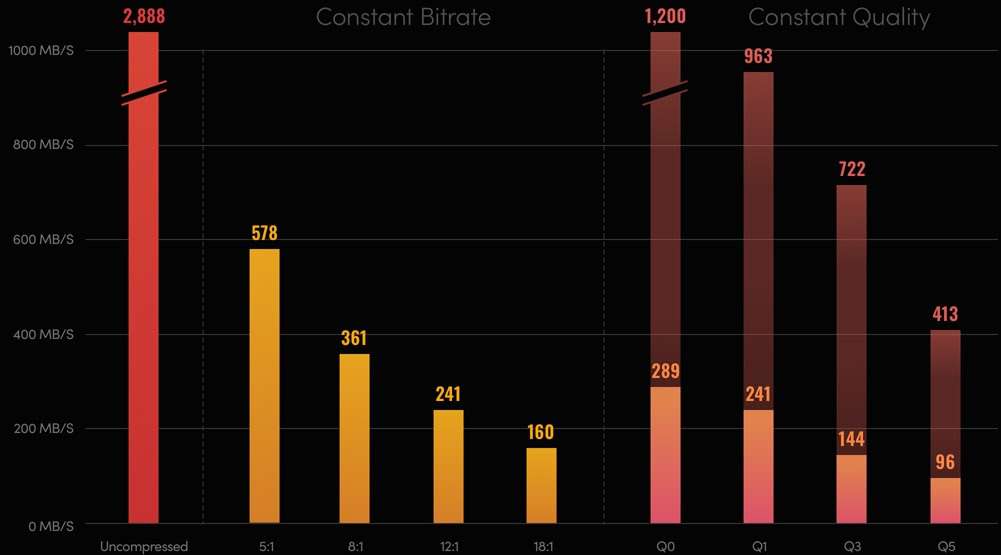

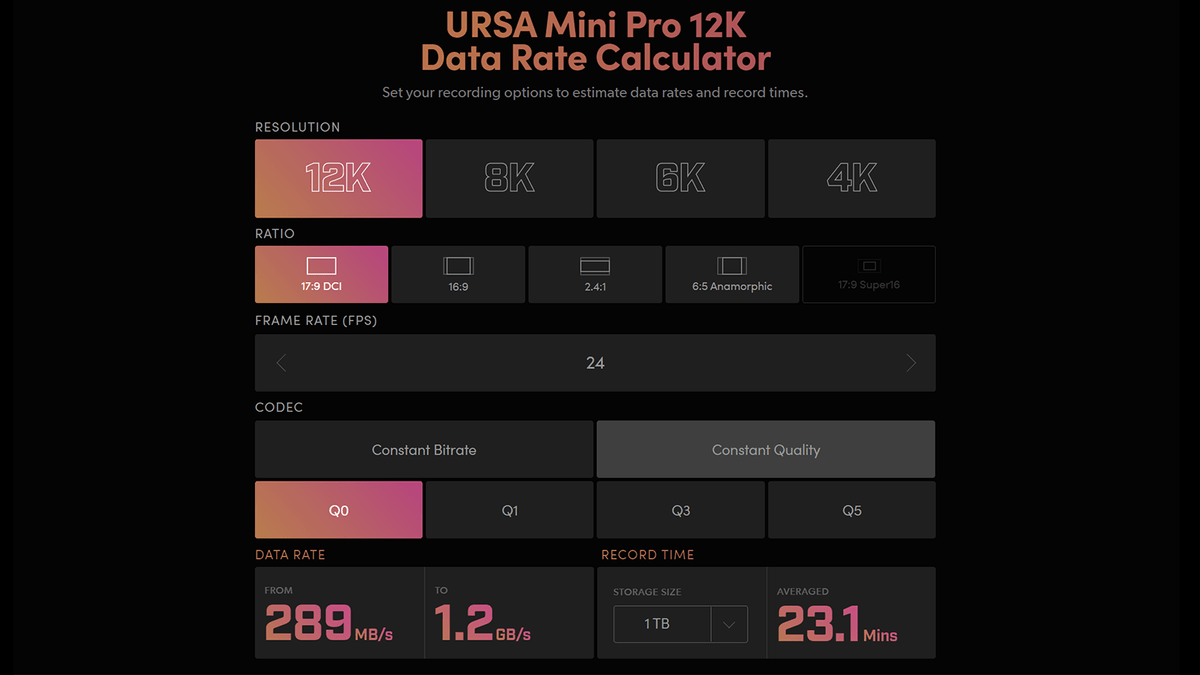

Second, Blackmagic RAW significantly reduces the storage requirements for capture media and on-set backups (relative to other codecs). So you can start shooting with one or more 12K cameras without huge amounts of expensive, ultra-fast flash storage, and without waiting obscene amounts of time just to transfer and back up files.

“I’ve experimented with 18:1 [compression], and I’m really shocked at how clean it is,” says Johansen.

“I’d say most users are doing 8:1. A lot of people want the lowest compression for the best quality, but we’re not seeing the difference. 5:1 compression is 578 MB/sec, but 8:1 is roughly half of that [361 MB/sec], and the picture quality is just as good.”
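To see what those data rates mean for storage, here is a small back-of-the-envelope sketch using only the two figures Johansen quotes. It assumes decimal units (1 TB = 1,000,000 MB) and continuous recording; the frame rate behind the quoted figures isn't stated here, so the results apply only to whatever settings those figures describe.

```python
# Data rates quoted above, keyed by compression ratio (values in MB/sec).
QUOTED_RATES_MB_S = {5: 578, 8: 361}


def terabytes_per_hour(mb_per_sec: float) -> float:
    """Storage consumed by one hour of continuous recording (decimal units)."""
    return mb_per_sec * 3600 / 1_000_000


def implied_uncompressed_mb_s(ratio: int, mb_per_sec: float) -> float:
    """Back out the uncompressed rate that a quoted ratio and data rate imply."""
    return ratio * mb_per_sec


if __name__ == "__main__":
    for ratio, rate in QUOTED_RATES_MB_S.items():
        print(
            f"{ratio}:1 -> {rate} MB/s, "
            f"{terabytes_per_hour(rate):.2f} TB/hour, "
            f"implied source rate {implied_uncompressed_mb_s(ratio, rate):.0f} MB/s"
        )
```

With these figures, 5:1 works out to a little over 2 TB per hour and 8:1 to about 1.3 TB per hour. The 8:1 rate is closer to five-eighths of the 5:1 rate than to half, which is what the ratios themselves would predict, and both figures imply a source rate of just under 2.9 GB/sec.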

So the Blackmagic RAW codec is the backbone that enables an ecosystem that includes the URSA Mini Pro 12K hardware, the company’s color science, and its Resolve color-grading software.

Or, as Caniglia puts it, “When you have full control over each of those components, you have a lot more control over how it’s going to look at the end.”

Practical applications

12K capture is already shaking up the camera world, with DPs gravitating to the high-res format for both technical and aesthetic reasons.

VFX cinematographers are among the early adopters, since increased resolution translates directly to higher-quality imagery, especially when creating blue-screen or green-screen composites.

David Stump, the chairman of the ASC’s camera committee and author of Digital Cinematography: Fundamentals, Tools, Techniques, and Workflows, cites Nyquist theory, which has long dictated certain design decisions in digital cameras.

In a nutshell, the Nyquist limit defines the minimum number of pixels required for a digital sensor to properly sample detail in an image.

Because it takes two pixels to create a visible line pair, or unit of detail, in an image, Nyquist tells us that a high-definition sensor with 1920 x 1080 pixels is able to resolve no more than 960 line pairs or “cycles” horizontally and 540 vertically.

Professional cameras incorporate an optical low-pass filter that’s calibrated specifically to eliminate any detail above the sensor’s Nyquist limit to avoid unnatural artifacts such as aliasing and moiré.

A camera with a much higher resolution sensor can allow much finer levels of detail through before the filtration kicks in. And sampling at a higher frequency—with a higher horizontal and vertical sensor resolution—yields greater accuracy.
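The two-pixels-per-line-pair rule is simple enough to express directly. This minimal sketch reproduces the HD figures above and applies the same rule to a 12K frame, assumed here to be 12288 x 6480 pixels (a figure not stated in this article). It ignores optical low-pass filtering, lens performance and color-filter effects, all of which lower real-world resolving power.

```python
from typing import Tuple


def nyquist_line_pairs(pixels: int) -> int:
    """Maximum line pairs (cycles) that a run of pixels can sample: two pixels each."""
    return pixels // 2


def frame_limits(width: int, height: int) -> Tuple[int, int]:
    """Horizontal and vertical Nyquist limits for a frame, in line pairs."""
    return nyquist_line_pairs(width), nyquist_line_pairs(height)


if __name__ == "__main__":
    print("HD:", frame_limits(1920, 1080))
    # 12K dimensions are an assumption, not taken from the article.
    print("12K (assumed):", frame_limits(12288, 6480))
```

For HD this gives the 960 and 540 line pairs cited above. For the assumed 12K frame it gives 6144 and 3240, which is 6.4 times the horizontal sampling limit of HD.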

“The more slices you cut a picture into, the more accurately you can reproduce it,” Stump says.

“If I have higher-resolution images, I have cleaner mattes from blue-screen or green-screen composites. More sampling means finer matte edges when you’re cutting images apart and reassembling them as composites.”

Another big benefit is the 12K frame’s ability to contain a jittery image while leaving plenty of margin on all sides for digital stabilization.

“If you’re driving down a rocky road shooting background plates for an image and the cameras are bouncing all over the place, you can punch into the image and stabilize it,” Stump explains.

“You haven’t decimated the resolution of the image because you have so much to spare. Similarly, you have tremendous flexibility to reframe and resize things to fix any operating errors that are made on stage.”

At Stargate Digital, where DP Sam Nicholson, ASC, was an early advocate of the “virtual backlot,” it’s not so much a question of whether they shoot at 12K as it is how many 12K cameras they use on a shoot.

“I think my record is shooting with eight of them at once,” Nicholson says. “Generally, four of them is a pretty standard lift right now.”

One recent shoot saw four 12K cameras on a stabilized driving rig capturing a 360-degree environment for virtual production. Another project involved a fleet of fifteen 12K and 8K cameras capturing environments from a traveling subway train. Each take was 30 minutes and generated around 150 TB of footage.

Nicholson’s philosophy is to shoot with the most resolution and dynamic range possible so that the footage can be pushed and manipulated aggressively, as needed, in post-production.

But you don’t have to be a VFX DP to get a decent ROI on 12K. Kevin Garcia, video partner and film director with MixOne Sound, used the URSA Mini Pro 12K to shoot a 2021 Grammys pre-show performance.

“It’s been pretty freaking incredible,” he says. He’s equally likely to shoot a standard band interview in 12K, which saves considerable time and effort compared to a multi-camera set-up by allowing him to punch in to different 4K sections in the larger 12K frame.

“I did an interview with a five-piece band,” he says. “I didn’t want to set up three cameras, so I decided, ‘I’m not going to.’ I set up one [12K] camera and punched-in to 4K, and it was still vivid. It didn’t look upscaled or downscaled, and it turned a one-camera shoot into five.”

For Garcia, the main argument against shooting everything in 12K is the storage requirements. Recording live performances that run for an hour or two without a break at high resolution can fill discs quickly. But even 8K offers compelling advantages for HD and 4K deliveries.

For Phoenix Sessions, a streaming concert series featuring performances by Jimmy Eat World, he shot 8K footage with four URSA Mini Pro 12Ks rolling alongside two URSA Mini Pro 4.6K G2s. The higher-res footage gave him opportunities to reframe shots or even crop the image to create new angles in the edit. Even just downscaling the image to 4K yields excellent results.

When more is better

So to recap, how many Ks do we really need?

Well, it depends on what you’re trying to do.

If you need to create a high-resolution backdrop for virtual production or a convincing 360-degree VR or AR environment, you need more pixels. If you need exceptional clarity and color resolution for an HD or UHD deliverable, you need better pixels. And since you need to turn everything around on time and on budget, you need an efficient post-production pipeline.

As BMD has shown us, you can have more and better pixels without destroying your workflow. Thanks to hyper-efficient codecs like Blackmagic RAW, 12K brings filmmakers more options without grinding post-production to a halt.

While Blackmagic may be the first company to release a 12K camera, other manufacturers are constantly working behind the scenes to improve color resolution, reduce noise, and extend dynamic range.

The new Exmor R sensor in Sony’s PXW-FX9 cinema camera, for example, downsamples from the sensor’s native 6K resolution (in this case, 6008 x 3168 pixels) for 4K output, yielding more accurate color reproduction than a native 4K sensor.

It’s impossible to imagine that Sony and other camera vendors aren’t already developing their own hardware and software to deliver manageable workflows at ultra-high resolutions that match or exceed BMD’s 12K offering.

But what about delivery standards? Can we expect that streaming and broadcast resolutions have plateaued at 4K? Even if there is a pause, don’t expect it to last long. Japanese director Kiyoshi Kurosawa’s latest drama already broadcasts at 8K, and NHK has committed to 8K live broadcasts for the Tokyo Olympics in the summer of 2021.

As filmmakers, we should demand the highest fidelity possible at every step of the creative process. We owe it to ourselves, to our clients, and to our audiences to deliver the best possible images—the ones that most clearly and completely capture the stories unfolding in front of our cameras—using the most versatile tools at our disposal.

Imagine if Hollywood had decided that 35mm film resolution was “enough.” The cinema and filmmaking landscape of today would be radically different (and likely less creative).

Not to mention the profound impact new mediums and tools have on cinematic storytelling.

The rich color, lush textures, and immersive panoramas captured in epics like Lawrence of Arabia, Ben-Hur, and Kenneth Branagh’s gorgeous take on Hamlet were all enabled by 70mm celluloid.

And then there are the indomitable filmmakers who reach for IMAX, to create some of the most awe-inspiring experiences in cinema. Think of the vertiginous sweep of the Burj Khalifa set piece in Mission: Impossible – Ghost Protocol, or the visceral handheld panoramas of Dunkirk.

Each of these incredible cinematic moments was possible because visionary filmmakers insisted on using new tools and techniques that enabled the very highest standards of imaging quality available to them.

So while you might not need to jump to 12K capture right away, don’t get too comfortable with 4K. Because there will be more Ks in our future—even more than 12K.

And that’s a good thing for filmmakers.