What a year! The billion-dollar blockbusters are back! Media giants continue to conglomerate! Streaming services scramble for subscribers! And an indie movie went to eleven (Oscar nominations, with seven wins) using Adobe Creative Cloud and Frame.io!

We’re back with the annual Frame.io Oscars Workflow Roundup, covering the Best Picture and Best Editing nominees—who this year will at least get their moment in the spotlight during the broadcast.

You complained, they listened, order was restored. (Now, who can we complain to about going back to Daylight Saving time on Oscars Sunday? Glam squad…where’s my concealer?!)

If this is your first time reading our Oscars roundup, our goal is to give you a digest that highlights the arduous process of creating an award-worthy movie. If you’ve read it previously, we thank you for joining us for the sixth consecutive installment.

Either way, we hope you’ll find something in it to awe, inspire, or inform you—because even while researching and writing it, we experience all three.

Contents

And the nominees are…

- All Quiet on the Western Front

- Avatar: The Way of Water

- The Banshees of Inisherin

- Elvis

- Everything Everywhere All at Once

- The Fabelmans

- TÁR

- Top Gun: Maverick

- Triangle of Sadness

- Women Talking

By the numbers

Obviously, this year everything from production costs and crew sizes to box-office receipts has varied wildly. We’ll dig a little deeper further on, but let’s just say that the industry is healthy and thriving, despite the headlines surrounding consolidations, layoffs, red ink, and studio-executive changes.

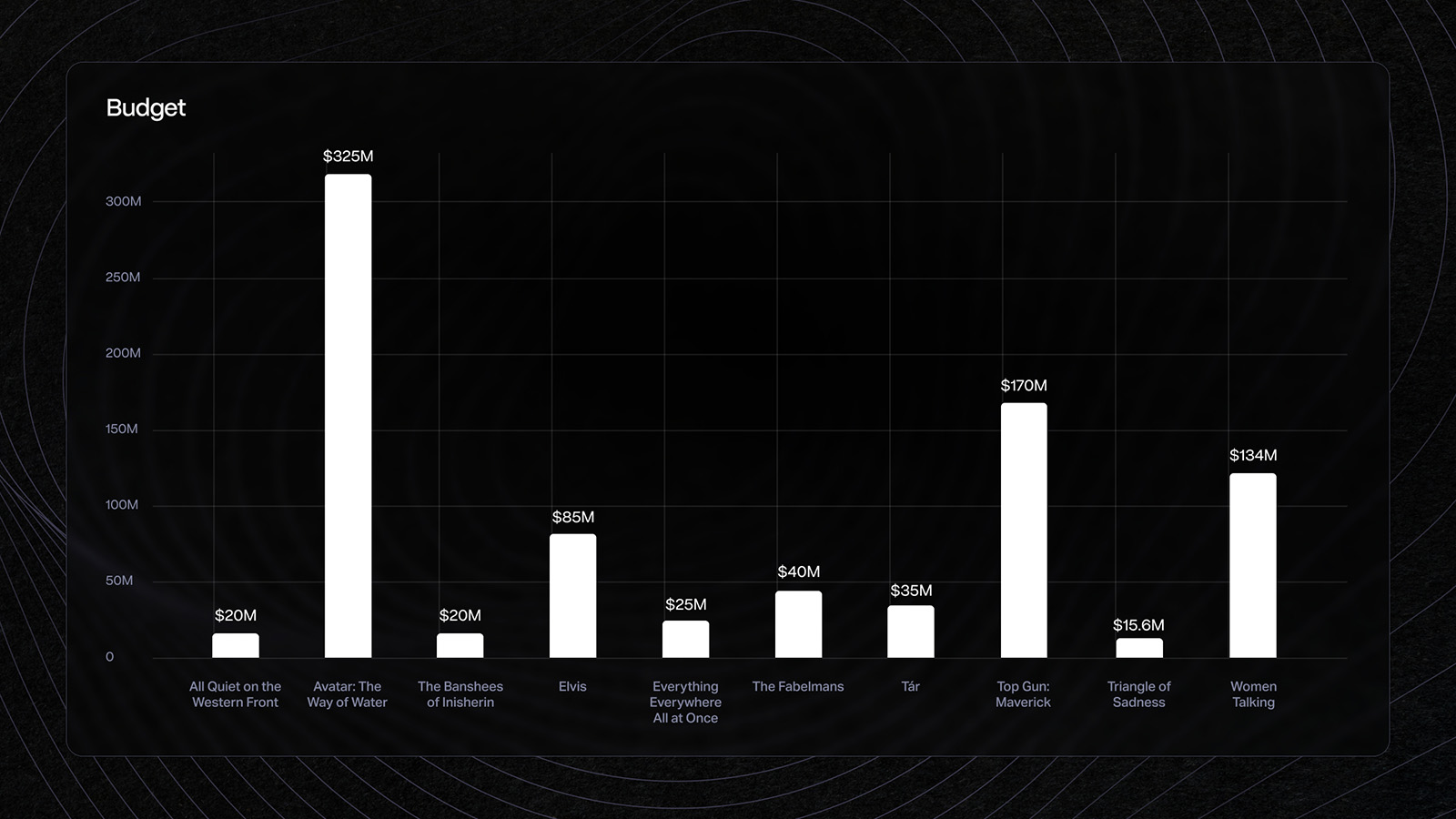

Budgets

Not a news flash, but Avatar: The Way of Water cost nearly twice that of last year’s most expensive film, Dune—and more than quintupled its box office receipts. Meanwhile, Everything Everywhere All at Once proved that a heartfelt story, clever and cost-cutting practical effects, and a scrappy approach can yield both critical and box office success.

If you compare this year to 2021, in which the largest budgets topped out at $35 million and two films cost well under this year’s low of $15 million, it seems the pendulum has swung as we emerge from COVID. It’s also noteworthy that among the streamers, last year Netflix had three Best Picture-nominated films (The Power of the Dog, Don’t Look Up, and Tick, Tick…Boom!) and Apple TV+ took home the Oscar for CODA. This year, Netflix is the only streamer with a Best Picture nominee, All Quiet on the Western Front. We’ll talk more about the streaming services later on.

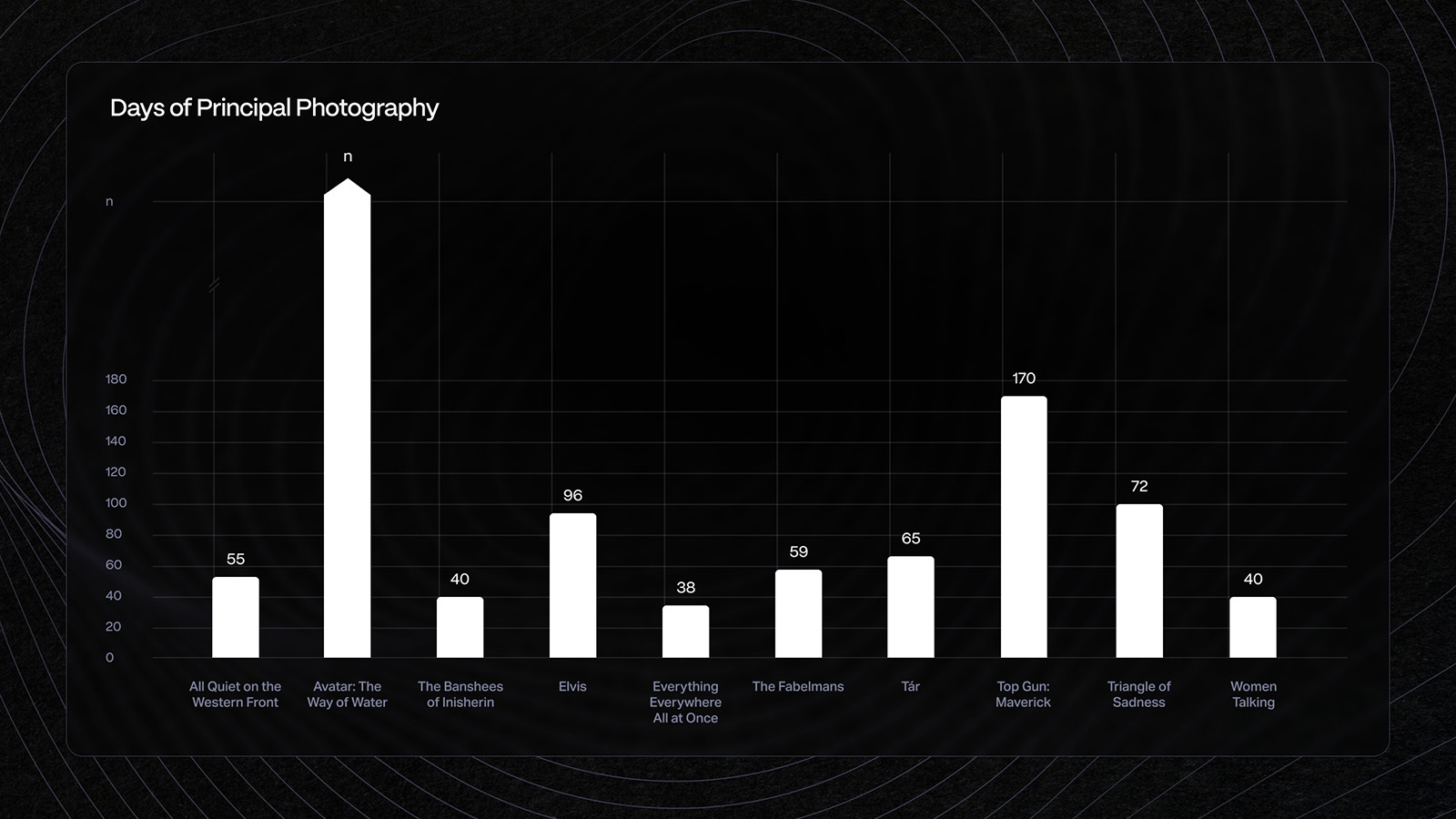

Days of principal photography

As you’d imagine, Everything Everywhere All at Once was the most economical when it came to days spent in principal photography. At a mere 38 days (and considering how many practical effects they did), you have to salute them. What’s also of note is that the least-expensive movie of this year’s bunch, Triangle of Sadness, managed to pack in 72 shoot days. Director Ruben Östlund was determined to get as much in-camera as possible, which he did by creating elaborate sets and capturing multiple takes.

Only one film was shot on actual film this year: The Fabelmans, which used 8mm, 16mm, and 35mm, with the smaller gauges chosen to more authentically replicate the movies the young Spielberg created.

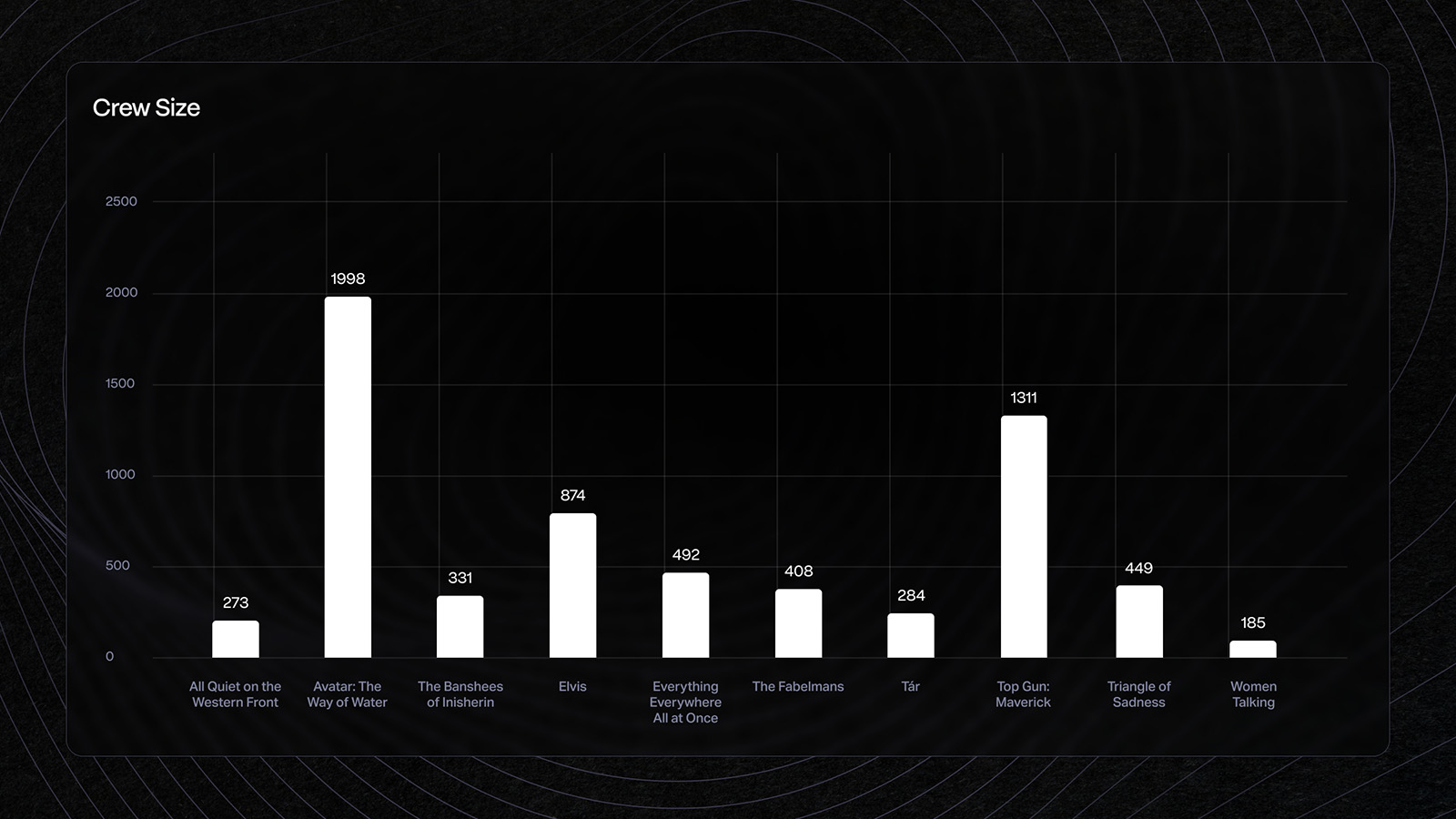

Crew size

Last year, Dune topped the chart with a team of 1,190. But this year, Avatar closed in on 2,000 people. And Top Gun, even (or maybe especially) with all the practical effects, exceeded Dune by a couple hundred.

Compare Everything Everywhere All at Once with a crew that’s roughly a third the size of Top Gun’s, and it drives home the point that it’s possible to do a lot—with a lot less.

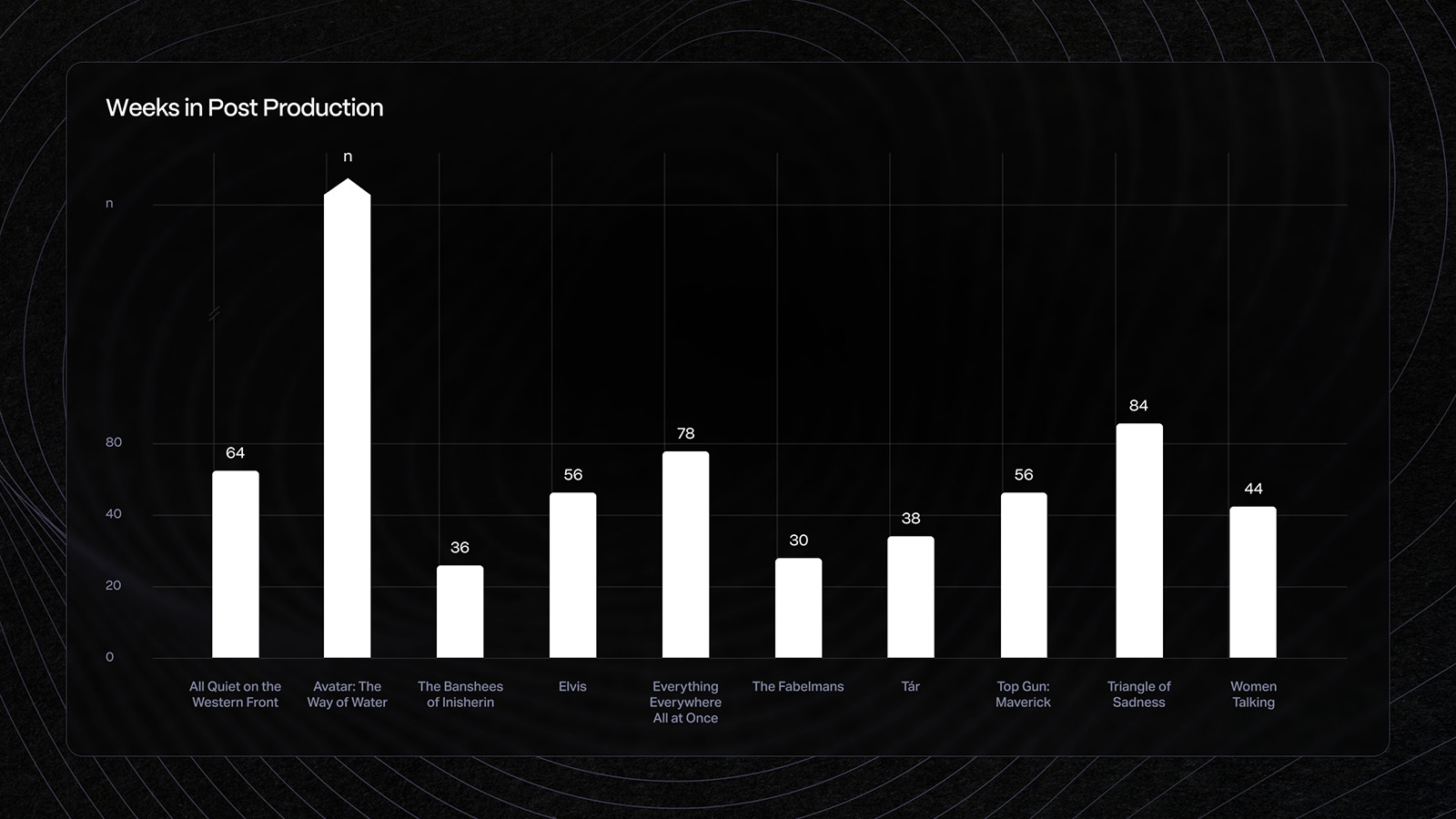

Weeks in post-production

COVID-19 continued to play a part in the post-production process for the films released in 2022, with several teams reporting that they began by working from home and then transitioned to in-person work later (or vice versa).

As was the case last year, some of the films scheduled for release in 2021—notably Top Gun: Maverick—were pushed to 2022. As was the case with Dune last year, the extra time that several teams spent in post was an unexpected gift. On the other hand, the team from TÁR found themselves in isolation and away from their families, while the team from The Banshees of Inisherin grappled with technical challenges.

Common denominators

Given the unpredictability of the industry (it is, after all, affected by the world at large), it’s been even more interesting to spot the common threads that emerge. If there’s one overarching theme, it’s perhaps that this year saw a raft of films that took years (or even decades) to bring to the screen.

From the Avatar and Top Gun sequels, the return of Todd Field to feature directing, the reunion of Martin McDonagh with actors Brendan Gleeson and Colin Farrell, and the nearly two-decade push to make All Quiet on the Western Front, to the lifetime it took Steven Spielberg to make a film about his family, it’s clear that making an award-worthy movie is truly a labor of love and a testament to tenacity.

Sequels rule

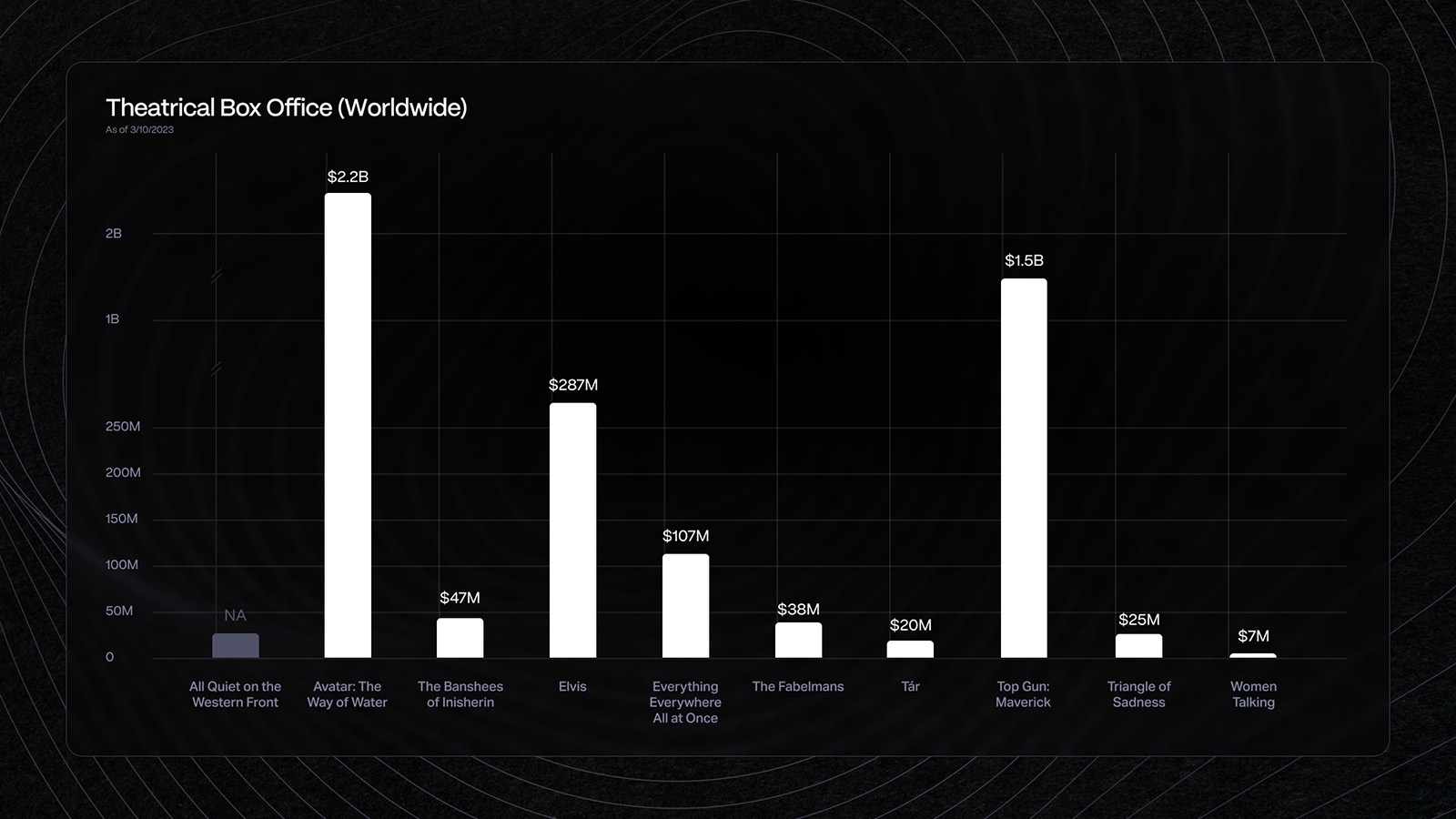

As you might have guessed, the two top-grossing films of the year were the sequels to Avatar ($2.2 billion and counting) and Top Gun ($1.5 billion). In fact, of the ten top-grossing films, only one wasn’t a sequel (China’s Moon Man).

The takeaway? The studios know their audiences and are giving the people what they want. The 2020 reports that movie theaters were dead now seem laughably premature, as audiences have flocked back for the kind of in-person tentpole events they’d missed in 2020-2021. More movies are being displayed with 3D and 4DX experiences, enhanced by environment-appropriate aromas, seats that pitch and yaw, and misting jets to make viewers feel like they’re in the water (fortunately, if you don’t care to be damp while watching a three-hour movie, you can turn your sprayer off).

There are reports that the Black Panther and Avatar sequels may be responsible for pushing IMAX earnings in 2023 to pre-pandemic levels. Even the Cinerama Dome, which closed its doors in 2021, is slated for a comeback this year, while AMC Theatres will begin scaling ticket prices based on line-of-sight to the screen for movies and live-event screenings.

Indies survive…and thrive

Unfortunately, getting people back to theaters for the non-spectacle films has proven to be a greater challenge. Indie darlings Sarah Polley and Todd Field returned to directing after lengthy hiatuses (10 years and 16 years, respectively). But even with the accolades from viewers and critics, Polley’s Women Talking has thus far grossed only $7 million while Field’s TÁR has pulled in $20 million on a reported $35 million production budget. Even Steven Spielberg’s The Fabelmans has only seen a reported $38 million at the box office, despite his blockbuster track record.

But even as A24’s The Whale underperformed at the box office (despite Brendan Fraser’s Oscar win), Everything Everywhere All at Once has already earned more than four times its production budget at the box office, making it the A24 poster child for indie success, and the darling of the Oscars with 11 nominations and seven wins.

Other art-house success stories include The Banshees of Inisherin, which earned nearly twice its reported $20 million budget, and Triangle of Sadness, whose $15 million investment earned $20 million globally.

The movies got longer

Did it seem like there were a lot of long movies this year? Of the ten Best Picture nominees, only two came in at under two hours (Women Talking and The Banshees of Inisherin). The rest? Long—or longer.

Take the sequels. The original Top Gun ran 109 minutes, but its sequel was 130 minutes. Avatar, for which James Cameron once famously ejected a studio exec from his office for questioning its 162-minute TRT, returned with a sequel that’s 30 minutes longer than the original.

Last year, the average length of the nominated movies was 138 minutes (which was 20 minutes longer than in 2021). This year, the average length was 144 minutes—and that’s among only the nominated movies. Other famously long 2022 movies include Babylon, weighing in at 189 minutes, and The Batman at 176 minutes.

Is it that studios speculate that audiences, who are now paying somewhere in the $15-$30 range for premium viewing experiences (3D, IMAX, 4DX), expect to get their money’s worth? Is it indicative of a continuing trend that audiences in recent years welcome lengthier diversions? Or, is it that filmmakers, especially at the top of their game, have more clout?

Because when you look at the audience ratings of the nominated movies, length seems to have no correlation to audience enjoyment. This year, Top Gun: Maverick sat at the top with a 99 percent audience rating on Rotten Tomatoes, with Elvis at 94 percent and Avatar: The Way of Water at 92 percent. Maybe more is more.

Technology trends

While last year three of the Best Picture-nominated movies were shot on film, this year all but one were captured digitally. By far the most popular cameras were ARRI ALEXAs, followed by Sony VENICE cameras (notably for both the Avatar and Top Gun sequels), along with a smattering of Panavision DXLs, REDs, and Blackmagic cameras added to the mix.

IMAX films were also back in a big way. Jordan Peele’s Nope was the first horror flick to shoot with IMAX cameras, and Top Gun: Maverick, Avatar: The Way of Water, Doctor Strange in the Multiverse of Madness, Thor: Love and Thunder, and many more were captured using either IMAX cameras or IMAX-certified cameras.

As for editorial and post, AVID Media Composer was again the NLE of choice. But this year, Adobe Creative Cloud and Frame.io powered the post-production of Everything Everywhere All at Once, and Premiere Pro and/or Frame.io were used on three of the five films nominated for Best Documentary Feature (All That Breathes, Fire of Love, and the winning film, Navalny).

Worth noting is the increasing popularity of Adobe’s Substance 3D platform which, in addition to garnering an Oscar for Technical Achievement, has been used on blockbusters including Doctor Strange in the Multiverse of Madness. And Adobe After Effects, which figured prominently in the workflow of Everything Everywhere…, was also used in the VFX pipeline for Black Panther: Wakanda Forever and for the design of the HUDs in Top Gun: Maverick.

Making it real

You can’t talk about filmmaking technology without highlighting the lengths (and depths and heights) some of the productions went to in the pursuit of realistic and immersive experiences. In particular, the Avatar and Top Gun sequels could not have been made until the technology had evolved far enough to achieve the directors’ visions.

The evolution became a kind of technological revolution, in which both films created breakthrough techniques and tools that will change the way films are made in the future.

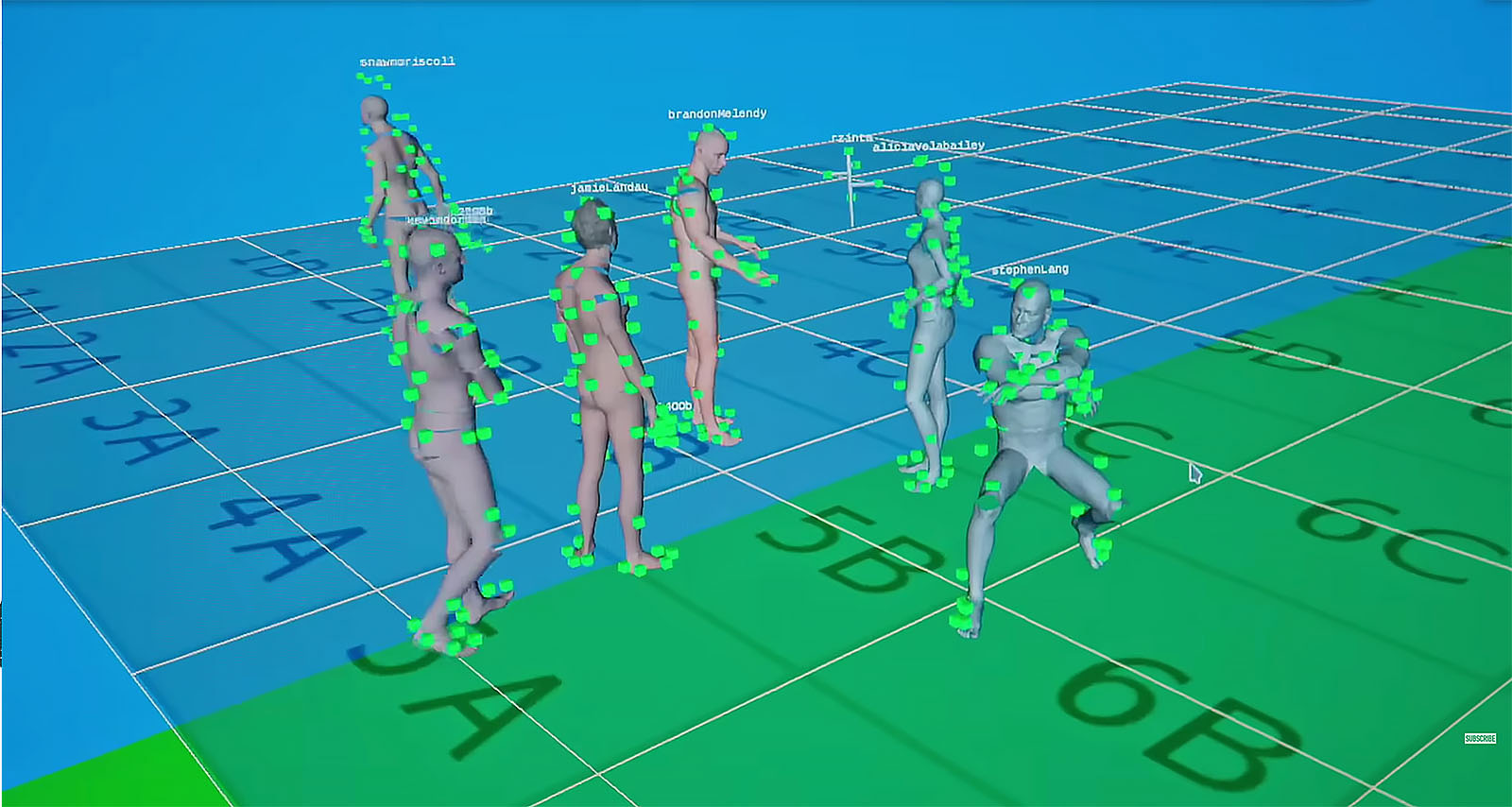

By now, most cinephiles have read about the elaborate performance-capture and virtual-camera production/post-production workflow on Avatar 2. Articles and videos about the lengthy process and complex setups abound, but briefly: the VFX team began developing the technology for the sequel in 2016 to create the elaborate water environments. For more than five years, the editors worked along with the production crew, putting together the live-action performances that would be turned over to Wētā FX to create the CG characters based on them.

Although the actors could have been suspended from wires to mimic swimming underwater (as they were in the first film), James Cameron decided that they needed to actually be submerged in order to ensure that the characters moved realistically. A 900,000-gallon tank was built and the team used a newly developed 3D beam-splitter system that allowed them to shoot underwater without lens distortion.

And the actors? Professional free divers were hired to train them to hold their breath for lengthy durations so they could comfortably perform their scenes without needing scuba tanks—which would create unwanted bubbles and interrupt the action. Kate Winslet even famously broke Tom Cruise’s record by holding her breath for 7 minutes and 14 seconds.

Speaking of Tom Cruise: while the Avatar team was holding their breath underwater, he and his Top Gun: Maverick team took to the skies in actual Boeing F/A-18 Super Hornet military jets. Because of the way the cameras are mounted, and because you can see the effects of G-forces on the actors’ faces, the audience feels as if the actors are piloting the planes (they’re not; these F/A-18s have two seats in the cockpit, so the actors sat behind the pilots).

Cruise, who reportedly wanted to fly the jets himself, put his cast members through a three-month training boot camp to become comfortable with the high G-forces—and also to learn how to turn the cameras on and off. The production yielded just over 800 hours of footage for Eddie Hamilton and team to cull through—a kind of boot camp of its own.

And now, sit down with a cup of coffee, a bowl of popcorn, or an adult beverage. It’s showtime!

The billion-dollar babies

Let’s face it: there’s absolutely nothing easy about making any movie. But making a billion-dollar blockbuster? It’s almost beyond imagination to understand what the armies of artists and craftspeople go through, for literally years, to bring previously impossible stories to life. Ingenuity, endurance, and talent are all essential qualities for anyone who dares to sign up for a production of this magnitude—whether in front of or behind the camera.

Avatar: The Way of Water

Massive doesn’t even begin to describe the undertaking that is Avatar: The Way of Water. Just the numbers alone are staggering: a $325 million budget, 2,000 crew, more than 3,000 VFX shots, 18 petabytes of data…and a reported 800 pages of single-spaced notes that director James Cameron handed to his writers. Oh, and an Oscar for Best Visual Effects.

Avatar arrived in theaters in 2009, which means it took 13 years to complete the first sequel. A Variety article dating back to October 2010 announced the plan for James Cameron to direct two more films, with the intention of shooting them back-to-back and finishing them for 2014 and 2015 releases.

So what happened?

Well, first, you have to remember that this is Cameron, and if he’s going to make a movie, you can bet he’s going to be pushing some kind of new technological envelope. When the first Avatar came out, he was using performance capture in a way that had really never been seen. Sure, Robert Zemeckis was doing films like The Polar Express, Beowulf, and A Christmas Carol. Those films were intended to look more realistic, as if creating a kind of CG rotoscoping of the actors. Unfortunately, the approach often had the opposite effect, taking the viewer on a trip to the Uncanny Valley.

Avatar, however, avoided that problem by using real actors to play real humans and reserving the performance capture to create the giant blue aliens. The result was an immersive experience that transported audiences to fantastical places they had never imagined. Which brings us to Avatar 2.

This time, Cameron wanted to take audiences to an underwater world. Not unlike the team behind Top Gun: Maverick needing to see the effects of G-forces on the actors’ faces, Cameron wanted the audience to see the way their skin and hair and costumes would move in actual water. That meant shooting his actors in the giant tank “wet for wet.”

One essential technological breakthrough was the underwater beam splitter system developed by cinematographer Pawel Achtel, ACS. According to his website, DeepX 3D is the world’s first and only submersible 3D beam splitter, offering underwater 3D IMAX imagery without distortion, aberrations, and artifacts. Fitted with vintage Nikon Nikonos submersible lenses, DeepX 3D is small and lightweight (under 30 kg ready to dive) and can be handled by a single person. If you really want to nerd out over the tech, you can see what the 8K Association has to say.

Cameron and his frequent collaborator Russell Carpenter, ASC used two Sony VENICE cameras rigged to a specially made 3D stereoscopic beam splitter system, utilizing the Rialto extension unit (which Sony designed especially for Avatar 2). The complete system is called Sony CineAlta VENICE 3D. When you look back at what it took to shoot in 3D a short 30 years ago (as Cameron did for Terminator 2) this new digital 3D capture system is nothing short of revolutionary. In addition to those systems, there were also the double face cameras to capture the movements of the actors’ faces more faithfully, and especially the movements of their eyes.

We’d typically report how many days were spent in principal photography, but with this production it’s a tall order. The best we can do is say that according to IMDb Pro, performance-capture photography began in September 2017 (with both the second and third film shooting concurrently) and went until March 2018. Live-action filming, which began in 2019 but was shut down during COVID, resumed shooting in June 2020 and was completed in September 2020.

Then there were all the 3D tools and technologies necessary to create all the phenomena associated with being under, or on, the water. In an interview with The New York Times, Wētā FX VFX supervisor Eric Saindon explains that of the 3,240 shots they created, 2,224 involved water, along with 57 new species of sea creatures that had to move realistically within it.

In order to accomplish this, they had to become experts not only in hydrodynamics, but also in how to render water realistically. “The proper flow of waves on the ocean, waves interacting with characters, waves interacting with environments, the thin film of water that runs down the skin, the way hair behaves when it’s wet, the index of refraction of light underwater. We wanted to make it all physically accurate.”

One of the biggest challenges was the facial animation, according to VFX supervisor Joe Letteri. The actors, when acting underwater, tended to squint. “The Metkayina characters, which by design are more impervious to being in the water, are more natural underwater,” he says.

The actors were captured by eight cameras out of the water, going through their lines and performances. After years of research and development, Wētā FX came up with a new facial animation tool called APFS (Anatomically Plausible Facial System). It is based on 178 muscle fiber curves, or “strain” curves, that can contract or relax to provide fine-grained, high-fidelity human facial expressions, which let the team faithfully replicate the nuances of the human performances.

Unity now owns the tools division of what was Wētā Digital, and some of the tools created especially for the film will soon become available to the industry, including products to aid in hair/grooming simulations, water effects, plant manipulation, and simulating gaseous phenomena.

What about the editorial process? It’s complicated, because the team worked iteratively throughout production. Cameron broke it down for IndieWire: “We had four editors who were run of show for five years, two other editors who were in for a year or a couple of years, and then a staff of about a dozen assistants split between Los Angeles and New Zealand. It’s very edit intensive, and the reason is you basically edit the whole movie twice.”

The four main editors included Cameron himself, Stephen Rivkin, ACE; John Refoua, ACE; and Oscar-winning editor David Brenner, who tragically passed away shortly before Avatar 2 was released. Both Rivkin and Refoua had worked with Cameron on Avatar, for which they earned Oscar nominations.

Stephen Rivkin explained that it began with the dailies from the actors’ performance capture in “the volume” (which this time included one on the ground as well as one in that giant tank). Cameron would select the performances that he was interested in and the editors would assemble those first. Once they were approved, those went to their internal lab where the files were populated with the digital assets (environments, wardrobe, and characters) such that the original performances were driving virtual characters in digital environments.

The next step was to use the virtual cameras, based on those performances, to create the shots and framing that were constructed into scenes for the final movie. Once those were approved, they went to Wētā FX to be fully rendered. They also had to make a template for the live-action characters who appeared in the virtual shots to act as a blueprint for shooting the live-action.

Rivkin describes it as “possibly the most complicated process of moviemaking ever.” Which is still probably an understatement.

Even the number of deliverables that were created is astonishing. We’ve heard that Cameron shot in both 24fps and 48fps (for improved resolution of the 3D during action sequences). In this article in Mixing Light, colorist Tashi Trieu explains the process of creating the 11 theatrical versions for Avatar 2. There’s also a part two, so if you’re deeply interested in the entire grading and finishing process, you’ll want to check out both articles.

Trieu had previously worked on Alita: Battle Angel, produced by Cameron and Jon Landau, which he describes as a “dress rehearsal” for Avatar 2. Working out of Park Road Post in New Zealand, Trieu spent three months finishing Avatar 2 using Resolve plus NukeX. He chose Resolve because “the database performance improvements made simple save/load operations faster than on previous projects. We started on Resolve 17 and migrated up to 18 during the project because I wanted to take advantage of large-project database optimizations in 18.”

“We ultimately finished on 18.0.2. Now, we can maintain multiple timelines within a single project without much overhead. Versioning and maintenance between derivative grades is a lot easier and requires a lot less media management and manual work from DI editorial. We leveraged Resolve’s Python API heavily, and I wrote several scripts that accelerated our shot ingest and version checking so we could rapidly apply reel updates as soon as new shots were ready.”

“This is critical on a big VFX film, particularly one where virtually every shot is VFX and tracked accordingly. I wrote scripts for automatically loading in shots from EDLs, comparing current EDLs with new shots on the filesystem, and producing different EDLs for rapid change cuts and shot updates.”
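The kind of version check Trieu describes can be sketched in a few lines of plain Python. This is not his script and it does not touch Resolve’s API; the shot-naming pattern (`<shot>_v<NNN>`), the sample EDL text, the file names, and the function names are all assumptions made for illustration.

```python
import re

# Hypothetical naming convention: shot code, then "_v" and a 3-digit version.
SHOT_RE = re.compile(r"(?P<shot>\w+?)_v(?P<ver>\d{3})")


def versions_in_edl(edl_text):
    """Map shot code -> version in the cut, read from the
    '* FROM CLIP NAME:' comment lines of a CMX3600-style EDL."""
    in_cut = {}
    for line in edl_text.splitlines():
        if not line.startswith("* FROM CLIP NAME:"):
            continue
        match = SHOT_RE.search(line.split(":", 1)[1])
        if match:
            in_cut[match["shot"]] = int(match["ver"])
    return in_cut


def latest_on_disk(filenames):
    """Map shot code -> highest version found among delivered files."""
    latest = {}
    for name in filenames:
        match = SHOT_RE.search(name)
        if match:
            shot, ver = match["shot"], int(match["ver"])
            latest[shot] = max(ver, latest.get(shot, 0))
    return latest


def shots_to_update(edl_text, filenames):
    """Shots whose newest delivered version is ahead of the cut,
    as {shot: (version_in_cut, newest_version)}."""
    in_cut = versions_in_edl(edl_text)
    on_disk = latest_on_disk(filenames)
    return {
        shot: (cut_ver, on_disk[shot])
        for shot, cut_ver in sorted(in_cut.items())
        if on_disk.get(shot, 0) > cut_ver
    }


# Made-up sample data, not from the production.
SAMPLE_EDL = """\
TITLE: REEL_1
001  AX  V  C  00:00:00:00 00:00:04:00 01:00:00:00 01:00:04:00
* FROM CLIP NAME: RF_0100_v003
002  AX  V  C  00:00:00:00 00:00:02:12 01:00:04:00 01:00:06:12
* FROM CLIP NAME: RF_0110_v007
"""
DELIVERED = ["RF_0100_v003.exr", "RF_0100_v004.exr", "RF_0110_v007.exr"]

updates = shots_to_update(SAMPLE_EDL, DELIVERED)
print(updates)
```

With the sample data, only `RF_0100` is flagged, because a v004 has landed on disk while the cut still points at v003. A production script would then write a change EDL or drive the grading application from that result.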

The final list:

- DolbyVision 3D 14fL 48fps 1.85

- DolbyVision 3D 14fL 48fps 2.39

- DolbyVision 2D 31.5fL 48fps 2.39

- IMAX 3D 9fL 48fps 1.85

- Digital Cinema 2D 14fL 48fps 2.39

- Digital Cinema 3D 14fL 48fps 1.85

- Digital Cinema 3D 14fL 48fps 2.39

- Digital Cinema 3D 6fL 48fps 1.85

- Digital Cinema 3D 6fL 48fps 2.39

- Digital Cinema 3D 3.5fL 48fps 1.85

- Digital Cinema 3D 3.5fL 48fps 2.39
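To see how those 11 versions break down, here is a small Python sketch that parses the list above into fields. The `Deliverable` class and `parse` helper are illustrative names of our own, not part of any tool the team used.

```python
from collections import Counter
from dataclasses import dataclass

DELIVERABLES = """\
DolbyVision 3D 14fL 48fps 1.85
DolbyVision 3D 14fL 48fps 2.39
DolbyVision 2D 31.5fL 48fps 2.39
IMAX 3D 9fL 48fps 1.85
Digital Cinema 2D 14fL 48fps 2.39
Digital Cinema 3D 14fL 48fps 1.85
Digital Cinema 3D 14fL 48fps 2.39
Digital Cinema 3D 6fL 48fps 1.85
Digital Cinema 3D 6fL 48fps 2.39
Digital Cinema 3D 3.5fL 48fps 1.85
Digital Cinema 3D 3.5fL 48fps 2.39
"""


@dataclass(frozen=True)
class Deliverable:
    system: str           # e.g. "Digital Cinema"
    stereo: str           # "2D" or "3D"
    foot_lamberts: float  # luminance figure from the list (fL)
    fps: int
    aspect: float


def parse(line):
    # The last four tokens are fixed fields; whatever precedes them
    # is the (possibly multi-word) system name.
    *system, stereo, lum, fps, aspect = line.split()
    return Deliverable(
        " ".join(system),
        stereo,
        float(lum.replace("fL", "")),
        int(fps.replace("fps", "")),
        float(aspect),
    )


versions = [parse(line) for line in DELIVERABLES.splitlines() if line.strip()]
by_stereo = Counter(v.stereo for v in versions)
luminances = sorted({v.foot_lamberts for v in versions})
print(len(versions), dict(by_stereo), luminances)
```

Run as written, it counts nine 3D and two 2D versions across five luminance levels, every one of them at 48fps.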

Trieu also mentions that they graded in 3D the entire time using the glasses and that the final 3D HDR experience is, in his opinion, “the best 3D that’s ever been made.”

If the first Avatar was designed to transport us to a different world, Cameron and his team took the next step with Avatar 2 to transport us to a different world photorealistically. With Avatar 3 currently set for release in 2024, we’ll just have to be patient to see what he has in store for us next!

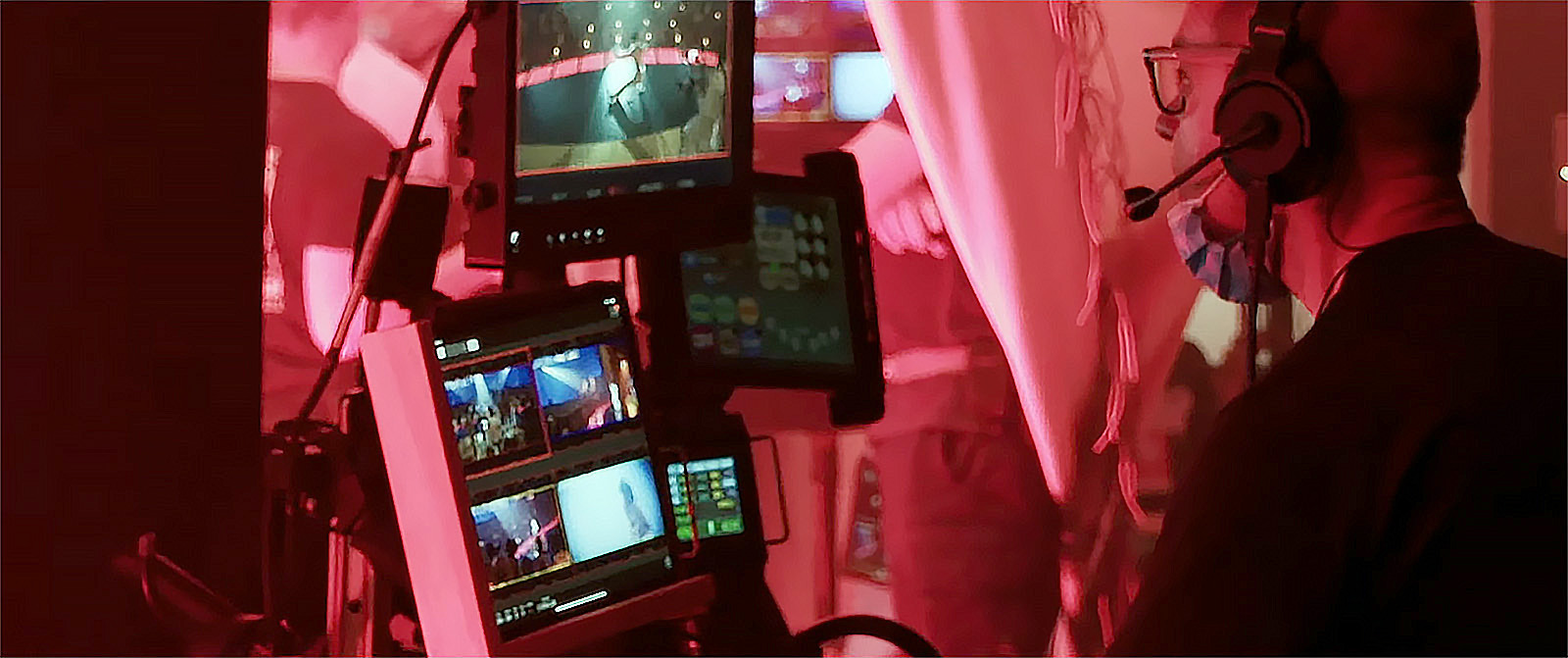

Top Gun: Maverick

Oscar-nominated Eddie Hamilton, ACE, is in many ways the TOPGUN pilot of editors. Not only does he constantly push the envelope technologically, he is also incredibly hardworking, dedicated, and has put in tens of thousands of hours honing his craft. It’s why he’s become Tom Cruise’s editor of choice, having worked with him on two Mission: Impossible films, as well as on the two upcoming movies. The work ethic that Eddie and Cruise share pervades his entire team, a veritable squadron of professionals whom he graciously credits for keeping post-production on course as he focuses on cutting.

Eddie’s LA-based first assistant, Matt Sweat, explained the complex workflow. With challenges ranging from 814 hours of footage to COVID lockdown, you could say that bringing this film to the screen is no less a feat than flying a jet at 1200 mph and not passing out from the G-force.

First, you have to talk about the timeline of a highly anticipated sequel that arrived in theaters 36 years after the original, and beloved, Top Gun. Production began in May of 2018 and the film opened on Memorial Day weekend 2022. While it’s true that COVID delayed the release, it might also have contributed to Maverick’s success by allowing a drumbeat of anticipation to build as the world came to terms with “opening up.” By the time Maverick landed, audiences worldwide were beyond ready and excited to experience all that it promised and delivered, to the tune of $1.5 billion at the global box office (even without a theatrical run in China).

So what did they do during those years—and how did they do it?

Oscar-winning cinematographer Claudio Miranda, who had previously worked with both Cruise and director Joseph Kosinski (on 2013’s Oblivion), had a massive task preparing for, and executing, 170 days of principal photography. The production shot on the ground, in the air, and at sea aboard the USS Abraham Lincoln and USS Theodore Roosevelt, with locations including Los Angeles, Lake Tahoe, and San Diego, and naval bases in Fallon, NV, Lemoore, CA, and Whidbey Island, WA. Preparation was, of course, lengthy, critical, and detailed.

Because the film was slated for an IMAX release, the crew used Sony CineAlta VENICE IMAX-certified cameras. For the cockpit scenes, they had three standard Sony VENICE units and three equipped with the Rialto system. The “typical” on-the-ground setups would run between two and four cameras. However, there’s nothing typical about a film like this. On one particular day, where four jets were in the air at once and two units were filming on the ground, they had an astonishing 27 cameras.

The list of lenses Miranda chose: Sigma FF High Speed PL mount primes, Master Primes from 65mm and longer to cover Full Frame, Voigtlander and ZEISS Loxia E-mount primes in the cockpit; 28-100 FUJINON Premista FF zooms; FUJINON Premier 18-85, 24-180, and 75-400 zooms with IB/E Optics Extenders; Canon 150-600 (FF modified still lens). The aerial and Shotover unit used 20-120, 85-300, and 25-300 FUJINON Cabrios.

Footage was captured at 24fps in 6K full-frame 17:9 resolution (6054 x 3192) and at 4K (4096 x 2160) in X-OCN ST. Stefan Sonnenfeld at Company 3 graded, and final delivery was at 4K in 2.39:1 and 1.9:1 (for IMAX).
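Those numbers are easy to sanity-check. The sketch below computes the aspect ratio of each capture format and the picture height each delivery ratio would occupy in a 4096-pixel-wide frame. The assumption that both deliverables are 4096 pixels wide, and the rounding to whole pixels, are ours rather than anything reported by the production.

```python
CAPTURE = {"6K 17:9": (6054, 3192), "4K": (4096, 2160)}
DELIVERY_RATIOS = {"scope": 2.39, "IMAX": 1.90}
DELIVERY_WIDTH = 4096  # assumed container width in pixels


def aspect(width, height):
    """Width-to-height ratio of a frame."""
    return width / height


def active_height(width, ratio):
    """Rows of picture needed to fill `width` at the given aspect ratio,
    rounded to the nearest whole pixel."""
    return round(width / ratio)


for name, (w, h) in CAPTURE.items():
    print(f"{name}: {w}x{h} -> {aspect(w, h):.3f}:1")

heights = {
    name: active_height(DELIVERY_WIDTH, ratio)
    for name, ratio in DELIVERY_RATIOS.items()
}
print(heights)
```

Both capture formats come out at roughly 1.90:1, which is why the IMAX version can use nearly the full height of the frame (about 2,156 rows under these assumptions) while the 2.39:1 version uses about 1,714.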

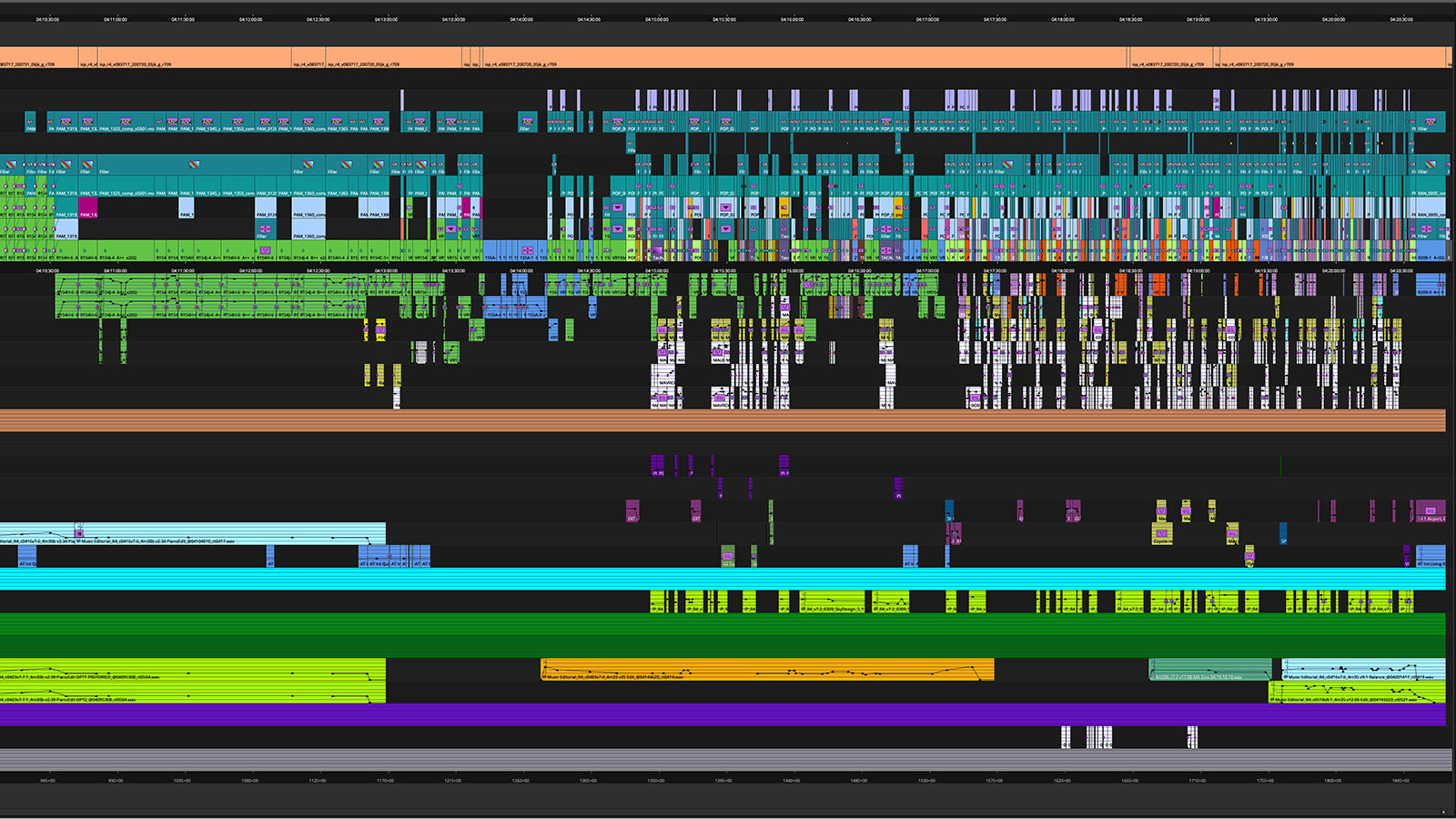

The team worked in AVID Media Composer in DNxHR LB (UHD), with media stored on an 80TB Nexis. Matt explains that at their maximum crew size, they had 13 AVIDs connected to the Nexis, as well as another seven AVIDs connected for the editors who were cutting all the trailers and BTS content.

During the first year in production they had one cutting room at Jerry Bruckheimer Films in Santa Monica, as well as a remote edit trailer equipped with 80TB of portable drives. There was one editor with two assistant editors on set, while two or three assistant editors worked at the cutting room.

As for the dailies process, there were two distinctly different workflows for principal photography versus aerial photography. “For principal photography, AVID dailies were automatically downloaded each morning via Aspera. Once ingested into the AVID, a roll of that day’s dailies was quickly created for Eddie to view. Dailies were synced to production audio by assistant editors. Multicam dailies would be grouped and scene bins would then be created,” Matt says. “Once scene bins were created, line breakdowns in script order were created for each scene, which allowed Eddie to quickly scrub through all takes of a specific line reading.”

For the aerial dailies, having the editing trailer on location was clutch. Once the planes had landed and the debriefing was over (when the director and Miranda were first able to view what had been shot, since neither was in the air), the DIT immediately transcoded low-resolution footage and sent it directly to the editorial trailer. Eddie and the assistant editors were able to view and mark up dailies within one to two hours. The low-res clips were then relinked to the higher-resolution media when the team received their hero dailies 24-48 hours later.
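Conceptually, that relink step is a lookup from each low-res clip to its hero counterpart. Here is a minimal Python sketch of the idea, assuming (our assumption, not the team’s documented method) that proxy and hero files share a clip name and differ only in folder and extension. Real NLE relinks typically also check timecode, and the file names below are invented.

```python
from pathlib import PurePath


def clip_key(path):
    """Clip identity used for matching: the file name without its
    extension, lower-cased."""
    return PurePath(path).stem.lower()


def relink(proxy_paths, hero_paths):
    """Return (proxy -> hero mapping, proxies still waiting for hero media)."""
    heroes = {clip_key(p): p for p in hero_paths}
    linked, waiting = {}, []
    for proxy in proxy_paths:
        hero = heroes.get(clip_key(proxy))
        if hero is None:
            waiting.append(proxy)
        else:
            linked[proxy] = hero
    return linked, waiting


# Invented example: one hero clip has arrived, one has not.
proxies = ["proxy/A001C003.mov", "proxy/A001C004.mov"]
heroes = ["hero/A001C003.mxf"]

linked, waiting = relink(proxies, heroes)
print(linked)
print(waiting)
```

Keeping a `waiting` list matters in a workflow like this one, where editors start cutting on proxies a day or two before the hero media shows up.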

Additional editor Laura Creecy traveled with the aerial unit to organize the hundreds of hours of air-to-air and ground-to-air footage. Her role was to break down all the aerial footage and assemble the flying scenes. Footage was organized by scenes and story beats, character, and footage type for ease of use. According to Matt, this was somewhat atypical compared with most dailies workflows, but was extremely useful for the needs of this film.

Post-production commenced in July of 2019 and went until September of 2020. At first, they were all in LA, including editors Stephen Mirrione, ACE (who won his Oscar for Traffic) and Chris Lebenzon, ACE, who edited both the original Top Gun and Days of Thunder. In September of 2019, Eddie traveled back to London for the remainder of the film. The team created a second Nexis to mirror the LA Nexis, and passed new media back and forth via Aspera at the end of each work day—which required meticulous media management.

Having this workflow already in place made the shift to working from home less painful when lockdown occurred in March of 2020. At that point, they gave another set of media to VFX editor Latham Robertson, while the rest of the LA crew remoted into the AVIDs that were housed in the cutting rooms.

About those VFX: just because the actors were actually in the air rather than shot against green screens doesn’t mean that there wasn’t a significant VFX load. Unlike Avatar 2, where the VFX are on full display in every frame, the trick for Maverick was to make the approximately 2,400 shots that had to be integrated with practical aerial footage look invisibly seamless. Whether they were creating the Darkstar or making the too-dangerous-to-execute possible, it took a lot of work and coordination to achieve the Oscar-nominated outcome.

The visual effects team, led by VFX supervisor Ryan Tudhope, worked closely with the camera department, aerial coordinators, and the United States Navy to film extensive air-to-air and ground-to-air footage of real jets. Plates always had a real aircraft in them even if it wasn’t the specific type of military jet for the story. With that as the guiding principle, those 814 hours of footage included aerial stunts, mounts, and plates to provide the editorial team with a practical foundation for visual effects the story required.

The team’s attention to detail was unsparing. “When it came to shot work, we immersed ourselves in maneuvering tactics, control surfaces, instruments and HUDs, weapons characteristics, and hypersonic flight,” Matt says. “Many shots entailed replacing real military aircraft with completely different models and designs. Several sequences pushed the limits of performance or safety and required shots to be created entirely digitally from photographic source material.”

The fact that most of the team was in LA but Eddie was in London meant that Latham could work with the reels for VFX during his work day, and would then pass them to Eddie at the end of his work day so Eddie could begin work at the start of his day. There was even one occasion—Matt cites a last-minute reshoot in London that was only a few weeks prior to the first audience preview—that required the LA crew to process dailies during the night so that the UK editorial team would have footage the next morning.

Obviously, a show this huge and technically complex is going to have its share of challenges. Even with a crew of 11 (three editors, one additional editor, three first assistant editors, one VFX Editor, one second assistant editor, one apprentice editor, and one editorial PA), dealing with the mountain of footage and making sure they had adequate storage and sufficient redundant backups was just the first and most obvious.

But what’s most challenging about a project can also become what’s most rewarding. According to Matt, “Probably the most rewarding part was creating new workflows to counter the challenges. As we were often spread thin, it necessitated that our entire crew help each other and be proficient in tasks that we didn’t normally manage. This was a film where editorial was usually present on set. Consequently, we interacted more with the cast, production crew, and U.S. Navy personnel, leaning on each other for specific tasks. Like so many films, we needed to be nimble in adapting to specific needs. This particular film just seemed to require it more than usual.”

Even beyond the aerial on-set needs, many of the shoot days required either the editor or an assistant editor there to show the edit reference or dailies for shot-specific composition. Matt cites one example of a pickup shoot at Paramount, where Eddie and two assistant editors were on set. Eddie would immediately implement the video tap files into the edit in order to confirm that the footage worked before the director would move on. Additionally, they were able to provide advice and valuable input on specific insert shoots.

Another fun contribution: because the pilots had masks on, the team could try out new dialogue. “Picture editorial would record scratch ADR with the actors on set, setting up a recording station nearby. We worked with the ADs to find actors’ availability, as well as with the production audio crew for recording equipment,” Matt says. It’s no wonder that the team won for Best Sound Editing.

In the end, the hard work paid off for everyone. “It’s Top Gun: Maverick,” Matt says. “This felt like a once-in-a-lifetime experience. We are all extremely proud to have worked on a film that so many people loved, as well as one that was a technical feat.” Fittingly, it also earned Eddie Hamilton an ACE Eddie.

We thank Matt and the entire team for giving us a glimpse into the rigors of creating a movie that thrills both audiences and industry insiders.

- Eddie Hamilton, ACE – editor

- Chris Lebenzon, ACE – editor

- Stephen Mirrione, ACE – editor

- Laura Creecy – additional editor

- Matt Sweat – first assistant editor (LA)

- Tom Coope – first assistant editor (UK)

- Joseph Kirkland – first assistant editor to Chris Lebenzon

- Patrick Smith – first assistant editor to Stephen Mirrione

- Latham Robertson – VFX editor

- Travis Cantey – second assistant editor

- Tom Pilla – second assistant editor

- Emily Rayl Russell – apprentice editor

- Dawn Marquette – assistant VFX editor

- Wilson Virkler – editorial PA

- Sean Carlsen – editorial PA

- David Hall – post-production supervisor

- Tien Nguyen – post-production coordinator

The indie dramedies

Who doesn’t love a movie that makes you laugh and cry? The Banshees of Inisherin, with its themes of senseless civil war and the significance of legacy, is set against the bucolic backdrop of rural 1920s Ireland. The church and the pub sit at the center of the quirky town, and miniature donkeys are much-loved pets who graze at the dining table.

Meanwhile, Everything Everywhere All at Once, despite its hilarious genre-spanning action and over-the-top performances, has caused many a viewer to sob uncontrollably over its themes of familial relationships and acceptance. Hot dog fingers and googly eyes aside, this indisputably original film is a thrill ride with a heart of gold.

And what do you get when you combine Woody Harrelson, a luxury cruise, and a shipwreck on a desert island? Triangle of Sadness is this generation’s Swept Away—with less romance and a whole lot more vomit—but the chasm between the haves and the have-nots is a story as long as human history itself.

The Banshees of Inisherin

Of all the films we’ve covered, this one somehow feels the most intimate. The story, which chronicles the unraveling of a lengthy friendship, is filled with the kind of performances that contain multitudes—and have earned all the main actors Oscar nominations. From the closeups of the actors’ eyes to the subtlest facial tics, they convey a range of emotions that goes from nice to…decidedly not nice.

What’s most remarkable about The Banshees of Inisherin is the delicacy with which the entire production is handled—perhaps especially the editing. At a time when so many movies feel overly cutty, editor Mikkel E.G. Nielsen (who earned an Oscar for Sound of Metal and was nominated for this movie) allows us to linger with the characters as they process their emotions, to absorb the majesty of the locations, to anticipate where the story will take us, and to savor the journey.

Writer-director Martin McDonagh (who took home the BAFTA for Best Original Screenplay as well as the BAFTA for Outstanding British Film of the Year) once again cast Brendan Gleeson and Colin Farrell, getting them back together 14 years after In Bruges. Let’s just say it’s been worth the wait.

Sadly, McDonagh’s longtime editor, Jon Gregory, was unable to rejoin the team, as he tragically passed away at the beginning of the shoot. It was a blow personally and professionally for much of the team. As assembly/first assistant editor Nicola Matiwone has shared, when Nielsen took over as editor he very intentionally committed to honoring Gregory throughout the edit.

To capture the beauty of the Irish locations of Inis Mór and Achill Island, cinematographer Ben Davis, BSC (who previously collaborated with McDonagh on Three Billboards Outside Ebbing, Missouri) chose ALEXA Mini LFs shooting 4.5K OG ARRIRAW for his main cameras. He used two cameras for the main unit with an additional body for the DJI Ronin gimbal work.

He also chose a Blackmagic Ursa Mini 12K to shoot additional B-roll in 8K BRAW, a RED EPIC DRAGON drone shooting in 6K RAW, and a DJI X7 shooting 4K cinemaDNG. Principal photography took 40 days and judging from the way the cast and crew have spoken about it, it was one of those memorable shoots that everyone felt privileged to be part of—even if Barry Keoghan did eat Colin Farrell’s crunchy-nut corn flakes while they were rooming together.

Notably not on location, however, were Nielsen and Matiwone, who were in Copenhagen and Kent, England, respectively. Second assistant Calum Abraham was located in Wales.

“Dailies were created at a near-set digital lab, which ingested from the camera media, archived to LTO, created editorial media, and performed metadata entry and audio syncing,” Matiwone says. “Dailies grading was either performed locally at the lab, or remotely from London when internet allowed.”

Shooting in such remote locations—with a remote editorial team—definitely presented challenges. “Because the internet was very sketchy at times it sometimes caused delays, particularly on the odd day that we’d get five hours of rushes,” Matiwone says.

The team worked on AVID at DNxHD 36 to help minimize download time, although for certain scenes the compression was too much and they worked at DNxHD 115. During principal photography they used the Hireworks Connect sync box and then AVID Nexis during post-production.
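To put the download trade-off in rough numbers, here is a minimal back-of-the-envelope sketch. It assumes the number in each DNxHD name approximates the video bitrate in Mbit/s, ignores audio and container overhead, and uses a hypothetical 50 Mbit/s sustained connection (the actual link speeds on the production aren't stated). The five-hour figure is the heavy rushes day Matiwone mentions.

```python
def rushes_size_gb(hours, video_mbps):
    """Approximate video payload in gigabytes (10^9 bytes) for `hours` of footage."""
    seconds = hours * 3600
    bits = video_mbps * 1_000_000 * seconds
    return bits / 8 / 1_000_000_000


def download_hours(size_gb, link_mbps):
    """Hours needed to move `size_gb` over a link that sustains `link_mbps`."""
    bits = size_gb * 8 * 1_000_000_000
    return bits / (link_mbps * 1_000_000) / 3600


HOURS_OF_RUSHES = 5        # the "odd day" of five hours of rushes
ASSUMED_LINK_MBPS = 50     # hypothetical sustained download speed

for name, mbps in (("DNxHD 36", 36), ("DNxHD 115", 115)):
    size = rushes_size_gb(HOURS_OF_RUSHES, mbps)
    hours = download_hours(size, ASSUMED_LINK_MBPS)
    print(f"{name}: ~{size:.1f} GB, ~{hours:.1f} h at {ASSUMED_LINK_MBPS} Mbit/s")
```

Under those assumptions, the higher-bitrate flavor takes roughly three times as long to pull down, which is consistent with reserving it for the scenes that needed it.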

Matiwone was largely responsible for putting together the assemblies during production, which they would upload for McDonagh each day. Again, if the internet connectivity was unstable, they sometimes sent bins directly to the lab to play back for him. Meanwhile, Nielsen’s preferred process is to take what’s been shot and string the takes together, one after the other, so he can thoroughly watch through all the coverage before he begins cutting. He feels that his time is better spent being away from set and not giving opinions about what’s been shot until he’s had the chance to really absorb the material.

After production wrapped, the team worked together in London, where McDonagh was based, from November 2021 to August 2022. Nielsen talks about the experience of working closely with McDonagh in his interview on The Rough Cut.

He describes McDonagh as being “very prepared,” coming in with detailed notes about certain takes or performances he especially liked. The two found their way through the rhythm of the film together, which required balancing the comedy and the darker aspects of the story and letting the unexpected twists play out as if the characters and the audience are discovering them simultaneously.

Of course, much of that is already baked into McDonagh’s script, which Nielsen says didn’t change structurally—although they were always mindful of what could be removed in a “sometimes less is more” way. At only 114 minutes, the action clips along despite the fact that the cutting feels almost leisurely.

The combination of the vast, mystical environment, the shrouded crone who functions as a harbinger of doom, and the many animals who are characters as important as the humans makes the movie feel like what Nielsen calls a fable or fairy tale. It’s a feeling the team didn’t rely heavily on VFX to enhance. There were approximately 240 shots, mostly to paint out present-day objects that would disrupt the 1920s setting. And, yes, for Brendan Gleeson’s character (no spoilers here…you’ll have to watch).

But that rainbow at the beginning? As real as Jenny the donkey who, by many accounts, was the diva of the production, requiring her own emotional-support donkey and substantial bribery to hit her marks.

Certainly Carter Burwell’s unconventional score creates another layer of magical beauty. As a frequent collaborator of McDonagh’s, dating back to In Bruges, he initially proposed using traditional Irish music—which McDonagh rejected. Instead, McDonagh came back to him with a variety of distinctly non-Irish music: a Bulgarian choir, an Indonesian gamelan, a Brahms piece. Burwell describes his epiphanic moment while reading a Grimms’ version of Cinderella to his daughter, in which the stepmother has the daughters cut off bits of their feet to fit into the glass slipper.

“I began to look at Martin’s story…as a fairy tale, and that perspective informed a lot of the writing and instrumentation: celesta, harp, flute, marimba, and gamelan. Sparkly, dreamy music that matched the beauty of the island but also distanced one from the brutality of the physical action. As the score neared completion we finally got to the central montage, which had always been the Indonesian piece.”

“We jettisoned that piece and used a theme I’d written for the relationship between Farrell and Gleeson’s characters. So the gamelan piece is no longer in the final film, replaced by my composition ‘My Life Is On Inisherin.’ But the gamelan’s influence is a ghost living on in the score,” Burwell says.

In the end, the small team produced a movie that beautifully haunts the viewer, and has seemingly had a profound impact on the filmmakers, as well. Matiwone describes the loss of Gregory as the team’s biggest challenge, but acknowledges the support provided by Searchlight (particularly producer Graham Broadbent and the wider Banshees family) throughout the production. She also recounts how honorable Nielsen was in his approach to the work—especially since he stepped in so quickly after Gregory’s passing. Apparently they worked together so well that they’re now collaborating on their next project together.

Which is to say that working with a great team can produce a kind of magic all its own.

Everything Everywhere All at Once

Who doesn’t love seeing a mid-budget indie blast off like a rocket ship? We sure do, especially because Adobe and Frame.io had a hand in helping them craft the film that has captured audiences and awards alike. Directors Daniel Kwan and Daniel Scheinert (aka Daniels) have taken home the Oscar and the DGA Award; editor Paul Rogers won the Oscar, BAFTA, ACE Eddie, and BFE Cut Above awards for his work; the AFI awarded it Movie of the Year; it was awarded an Oscar for Best Original Screenplay; and it took Best Picture at the 95th Academy Awards. Clean up on aisle nine!

So how did a small group of longtime friends and collaborators turn a “family drama that gets interrupted by a sci-fi film that gets sidetracked by a romance and in the end it’s really just about a family trying to find each other” into the year’s surprise hit? Paul Rogers, along with assistant editors Aashish D’Mello and Zekun Mao, was happy to share.

First, it’s worth noting that the core editorial team was “just” Paul Rogers along with the two assistants, although the Daniels themselves also worked on the edit. Next, the more than 500 VFX shots were mostly handled by a group of five people who’d crossed paths with Daniels throughout the years as filmmakers, working on short films and music videos together. And, of course, most of the post-production on the film took place during COVID, so instead of being in the same building they were all working together—from home.

A combination of flexible technology, a hands-on approach, a deep appreciation for absurdity, and a lot of heart all contributed to the success of this one-of-a-kind movie.

According to Aashish and Zekun, they shot on ARRI ALEXA minis, with the raw footage captured in ProRes 4444 format at a variety of frame rates including 23.98, 47.96, 59.94, 96, and 192 fps. There were also multiple aspect ratios used, such as 1.33:1, 1.85:1, 2:1 and 2.39:1. Fun fact: cinematographer Larkin Seiple had wanted to capture the rock scenes in IMAX but reason prevailed. Still, you have to love that he wanted to do it because it would have been “the silliest use of IMAX in the history of IMAX.” And that would be saying a lot, since Jordan Peele captured some pretty silly stuff on NOPE.
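For a sense of what those capture rates could mean on screen, here is a small sketch of the slow-motion factor each one yields if conformed to a 23.98 fps timeline. That conform is an assumption for illustration (the source doesn't say how each rate was used), as is treating 96 and 192 as exact integer rates; "23.98," "47.96," and "59.94" are taken as the usual 24000/1001-style fractions.

```python
from fractions import Fraction

# Assumed playback rate: "23.98" fps is exactly 24000/1001.
PLAYBACK = Fraction(24000, 1001)

# Capture rates listed by the assistants; 96 and 192 are assumed to be exact.
CAPTURE_RATES = {
    "23.98": Fraction(24000, 1001),
    "47.96": Fraction(48000, 1001),
    "59.94": Fraction(60000, 1001),
    "96": Fraction(96),
    "192": Fraction(192),
}


def slowdown(capture, playback=PLAYBACK):
    """How many times slower the action plays when every captured frame is shown."""
    return capture / playback


for label, rate in CAPTURE_RATES.items():
    factor = slowdown(rate)
    speed_pct = float(100 / factor)
    print(f"{label} fps -> {float(factor):.3f}x slower ({speed_pct:.1f}% speed)")
```

Using exact fractions avoids rounding drift: 47.96 comes out at exactly 2x and 59.94 at exactly 2.5x, while the integer rates land just over 4x and 8x.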

The team had a very brisk 38 days of principal photography in and around the LA area, followed by seven days of pickups, which were a combination of green screen and on-location days, some from as far away as Paris, where Michelle Yeoh was after principal photography had wrapped.

The on-set DIT was responsible for transcoding the footage to ProRes 422 LT 1998×1080 for editorial in Premiere Pro, and the team received dailies twice a day. Working from Parallax Post-Production in Hollywood during production, editorial would sync sound with the proxies, creating multi-camera clips where necessary and applying speed or aspect ratio changes. They organized the dailies by scenes, highlighting circle-takes and adding English subtitles if required.

Post-production began toward the end of January 2020 with the directors, editorial team, and VFX team working from home beginning in March 2020. As they approached the picture-lock stage, the directors and editorial team moved back into the Parallax Post-Production office and the movie delivered in mid-August 2021.

According to Aashish and Zekun, at the beginning of editorial, Adobe provided them with an early version of Productions in Premiere Pro. “This greatly helped our remote workflow as it reduced the size of each Premiere project file, and made it much easier for us to share sequences and incorporate them seamlessly into everyone’s individual project files,” they state. Note that the Daniels were hands-on throughout the process, whether in editorial or in the VFX workflow (which relied heavily on After Effects), so having easy access, especially while working remotely, was vital.

Paul describes the process in a previous interview. “Productions gives you a project with folders, and each folder is its own project that can be shared and opened by anybody as long as they are keyed into that production. You don’t store your media in your main project, which means that your project isn’t bloated. It’s quick to save. It’s quick to open,” he says.

“The way that we work and the way that our company Parallax has always worked, and the way that I’ve always worked with the Daniels is incredibly collaborative, passing sequences back and forth. Whatever it takes to make the film better, whoever wants to take a crack, go for it. Productions is the first time that we’ve been able to do that without having to email a project file or make a whole new project for somebody. So basically it just allowed Daniel to jump into my sequence and see live what I was doing. I could just hit save and he could jump in and he could see exactly what I’d just done 10 seconds before.”

Paul also describes the value of working in Premiere Pro to achieve the many “hidden” effects he enjoys doing. “I love to split the screen and combine performances or just change the timings between actors, make someone react or speak over a line versus waiting their turn. And I love to be able to do that in a two shot or a wide shot, but that’s just not how the scene plays out,” he says.

In some cases he didn’t even need to alert VFX supervisor Zak Stoltz to the fact that he had done that. “When they got into finishing there were about 30 VFX shots that he wasn’t aware of. There are all these little split-screen things or changing an extra out in the background, or even a prop that was placed better in one shot than another, so I would throw that in. I can temp together VFX in Premiere in a heartbeat.”

Paul also did numerous speed ramps and changes in Premiere Pro. “We were doing so many fight scenes and a lot of crazy multiverse transitions, having something go from 100 percent speed to 136 percent back down to 115 percent for a split second and then up to 200 percent and back down to 100 percent without it feeling jumpy with a push-in and the aspect ratio changing. We were doing that on the timeline. I got really hooked on time-remapping keyframes as opposed to a universal change.”
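To illustrate why keyframed time remapping differs from a single universal speed change, here is a minimal sketch that treats speed as a piecewise-linear curve between keyframes and integrates it to find how much source footage a stretch of timeline consumes. The speed percentages come from Paul's description; the keyframe timings and the linear interpolation are assumptions for illustration, not a description of how Premiere Pro computes it internally.

```python
def source_time(keyframes, t):
    """Source seconds consumed after `t` timeline seconds.

    `keyframes` is a list of (timeline_seconds, speed_percent) pairs with
    strictly increasing times. Speed is linearly interpolated between
    keyframes and held constant before the first and after the last one.
    """
    pts = sorted(keyframes)
    first_t, first_speed = pts[0]
    if t <= first_t:
        return t * first_speed / 100.0

    total = first_t * first_speed / 100.0
    for (t0, s0), (t1, s1) in zip(pts, pts[1:]):
        if t <= t0:
            break
        end = min(t, t1)
        # Speed at the end of this (possibly partial) segment.
        s_end = s0 + (s1 - s0) * (end - t0) / (t1 - t0)
        # Trapezoid area is exact for a linear ramp.
        total += (s0 + s_end) / 2.0 / 100.0 * (end - t0)

    last_t, last_speed = pts[-1]
    if t > last_t:
        total += (t - last_t) * last_speed / 100.0
    return total


# Hypothetical timings for the ramp Paul describes: 100 -> 136 -> 115 -> 200 -> 100.
ramp = [(0.0, 100), (0.5, 136), (0.75, 115), (1.0, 200), (1.5, 100)]

for t in (0.5, 1.0, 1.5, 2.0):
    print(f"timeline {t:.2f}s -> source {source_time(ramp, t):.4f}s")
```

Because the speed varies continuously between keyframes, the shot eases in and out of each change instead of jumping, which is the "without it feeling jumpy" quality Paul is after.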

How did the assistants deal with that for finishing? “In order to prep the edit for coloring in the most accurate and precise way, we created a specific workflow for the online process—relinking to all the raw media within Premiere and leaving all the resizes and speed changes untouched,” Aashish and Zekun recount. “The full film was rendered to 4K DPX files, which were directly sent to the color house.”

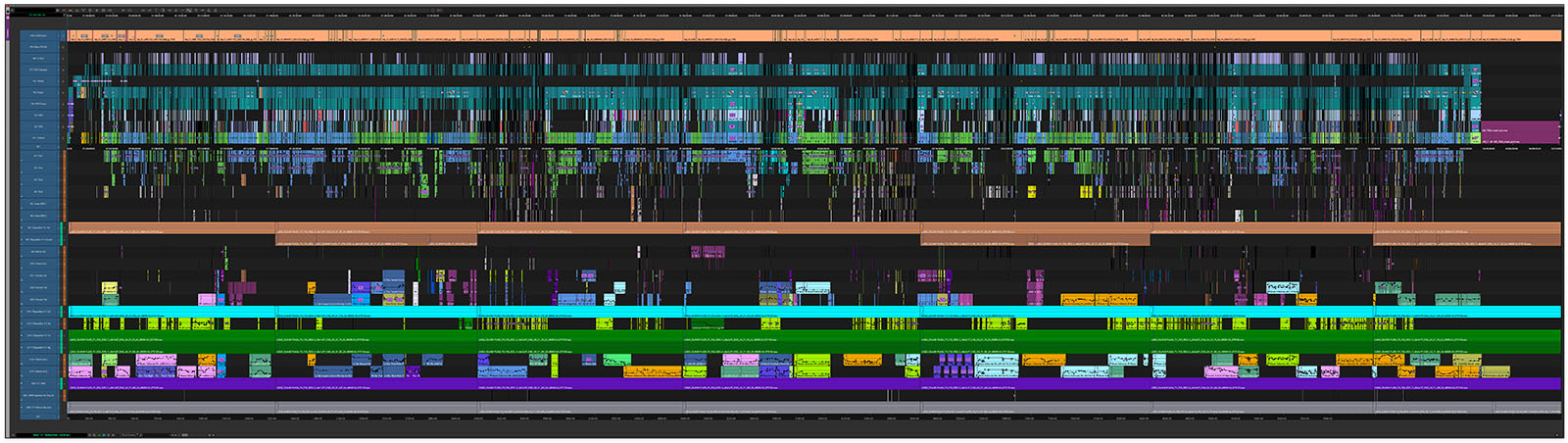

Premiere Pro was also invaluable for sound design, which Paul did rather extensively, given that the sound was so integral to helping the audience experience Evelyn’s shifts to different realities. In some cases Paul had as many as “60-track monstrosities of a timeline, layering stuff, and doing this insane color work,” as well.

In addition to the effects that Paul did himself in Premiere Pro, there were plenty of practical effects—even beyond those famous hot dog finger gloves. The crafty process they devised for Michelle’s transitions through the multiverse was a combination of live-action timelapse footage that Daniel Kwan captured walking down streets, projected onto a couple of low-budget LED screens, with Michelle sitting against a green screen.

Other practical effects included such high-tech gear as leaf blowers and wheelbarrows. It’s all admirably clever and, as the directors tell it, cost effective. (DIYers take note: there’s lots of great stuff in this video, and the Daniels want you to be inspired by what they’ve done.)

What about those 500 computer-based VFX shots? Well, as mentioned, they were handled by a handful of self-taught filmmakers rather than by a big VFX house (which was the route Daniels took on Swiss Army Man). Along with Zak Stoltz, other friends and collaborators included Ethan Feldbau, Jeff Desom, Ben Brewer, Evan Halleck, Kirsten Lepore, and Matthew Wauhkonen. Armed with After Effects and a variety of overlapping but differing skill sets, this small team completed what Zak calculates to be 80 percent of the shots themselves.

As the assistants explain, Zak was on set during production and made an initial list of potential VFX shots (there was no pre-vis). During the edit, Paul and the Daniels flagged any additional shots for VFX. Aashish and Zak worked on creating an editorial-to-VFX workflow in which Aashish would re-link to the raw footage in Premiere Pro and turn those shots over to the artists in the form of prepped After Effects project files. The team used Resilio Sync to share assets.

Given the VFX load and small size of the team, they adopted a philosophy that has served many productions well: CBB (Could Be Better). Rather than tweaking each shot until it was “perfect,” they got every shot to the point where it could go into the movie in its current state. Then, if they had extra time, they could go back and fine tune.

Because the team was working remotely instead of under one roof as they’d planned, they needed an easy way to share accurate and timely feedback. That’s where Frame.io came in.

Paul explains, “We used Frame.io to post our cuts and do our screenings. We would all get on Zoom. I’d send out a Frame.io link, and then we would say ‘three, two, one,’ and everybody hits play, and I could watch everybody’s face. It’s such an important part of it, which is nice actually about the Zoom screenings. When you’re in a room for a screening, it’s awkward if you’re sitting directly in front of someone and staring at them, but that’s what you get to do on a Zoom screening. And if you like someone’s reactions, you fill the screen and you just watch them.”

During the Zoom screenings the viewers would give Paul verbal notes. “But what Frame.io allowed us to do was to sit and watch a scene over and over and over again, frame by frame, draw an idea, draw a thought, then leave me a well-thought-out note or idea versus the pressure that’s in the room.”

Paul made good use of the Frame.io integration with Premiere Pro. “They could give 70 notes or ideas, and then I could import them directly into my timeline,” he says. “I think our first cut was two hours and fifty minutes and I think we got it down to two hours and ten minutes, which was a lot.”

“Even if they are not consciously doing that, they might be pulling their punches a little bit and occasionally it was nice to let them go away, watch something on Frame.io and come back and be like, ‘You know what? We thought it worked in the room, but we were caught up in the moment. Can we look at that moment on its own? I’ve replayed it 50 times and we’ve got to keep working on it.’”

“That’s really valuable because we’re all human beings, and sometimes it’s late at night or early in the morning, you haven’t slept and you need time to just sit with it.”

Paul is an ardent Premiere Pro user, and he credits its flexibility as an essential part of being able to try all the many unconventional techniques this movie incorporates. As he described it during Adobe’s NAB session, “People talk a lot about the democratization of filmmaking, and that’s been kind of a catchword for a long time. We can bring these kinds of strange ideas and sensibilities and bring in people who know nothing about the rules of editing—what you’re supposed to do, how you’re supposed to use a project file or an audio file—and we can just let them break it.”

“We can let them do something that has never been done before. And Premiere can keep up. Art is messy. Creativity is messy.”

But messy can also be beautiful. As this team proved.

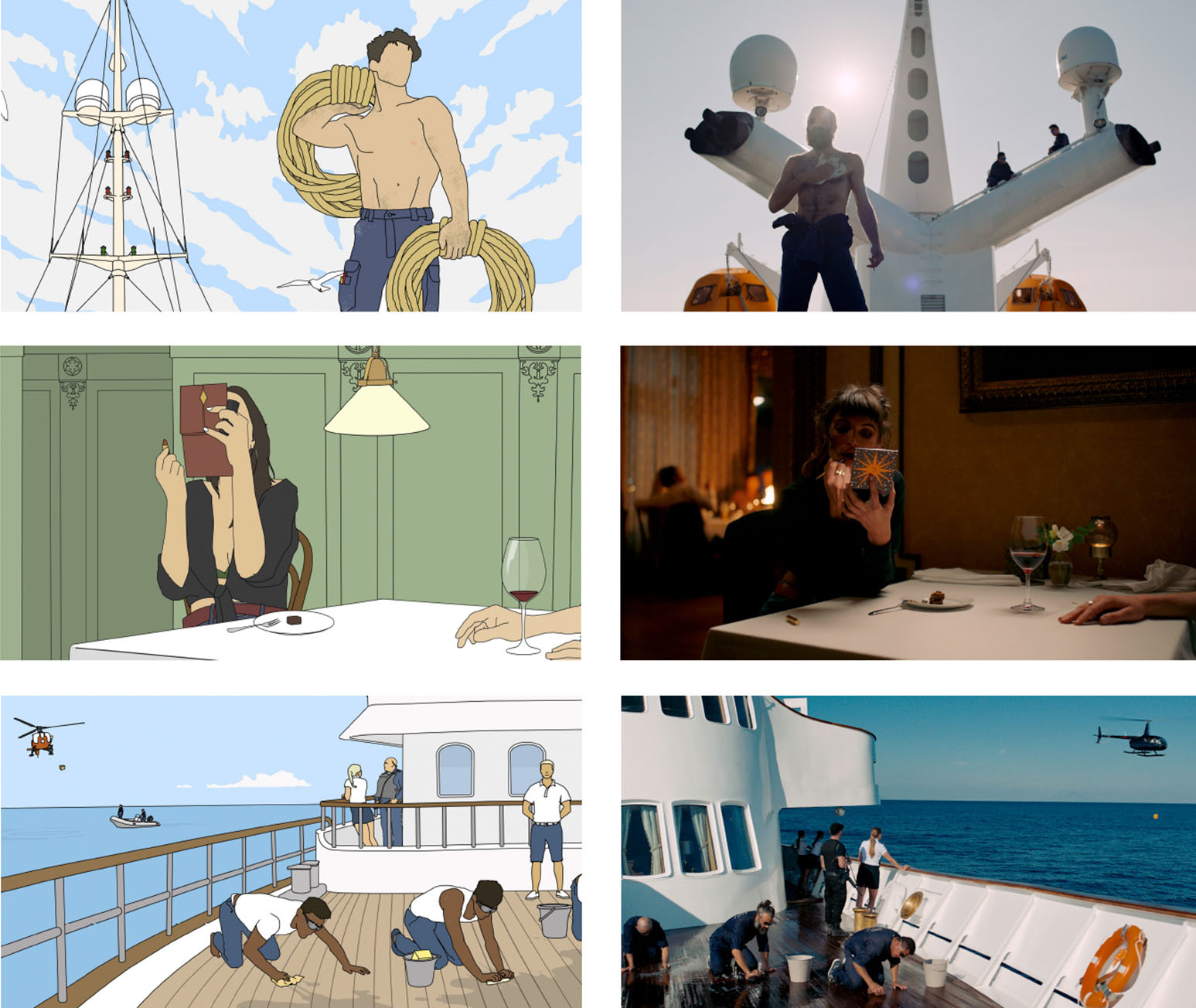

Triangle of Sadness

Only an elite group of directors have twice been awarded the Cannes Film Festival’s Palme d’Or. Among the nine who can claim that honor are Francis Ford Coppola, Ken Loach, Bille August, and now Ruben Östlund.

The followup to The Square (2017), Triangle of Sadness won him inclusion in that group. Along with Force Majeure, the films make up his “anti-capitalist trilogy.” Yes, Triangle of Sadness features a Monty Python-inspired 18-minute emetic extravaganza and a bravura performance by Woody Harrelson as an alcoholic Marxist captain, but it’s social satire at its sharpest.

With a reported $15.6 million budget, Östlund drew his cast from eight countries and spent 72 days during peak pandemic in principal photography. Sweden, as you might recall, declined to lock down during COVID, so while many people in the rest of the world were spending their days in pajamas, Triangle of Sadness was shooting—albeit in three separate chunks from mid-February to late March in Sweden, late June to early July in Sweden, and mid-September to mid-November in Greece.

Although one of the locations was the former Onassis-owned yacht, the Christina O, the team spent nearly two years designing the set that would serve as the centerpiece of the movie. The dining room was faithfully recreated with a twist: it was set on an elaborately constructed gimbal that moved cast and crew alike. Production designer Josefin Åsberg also created a corridor and the command bridge. And yes, some of the team experienced seasickness during the shoot.

In some ways, the challenges Östlund faced were not unlike those of the filmmakers on the Avatar and Top Gun sequels. When you introduce variables into the process, you don’t quite know what you’ll get. But like James Cameron or Joseph Kosinski he powered through, often pushing his actors to do 20 takes.

Cinematographer Fredrik Wenzel’s team wanted to “build a crescendo including exploding toilets, flooded staterooms, and people sliding in vomit along corridors.” Achieving the realistic look of the actors spewing took a team of special effects experts, who created a tube apparatus with a special mouthpiece. Östlund says that during pre-production they did a lot of “scientific research” to determine the direction the vomit would flow and how much would come out of different parts of the mouth.

Åsberg describes having detailed conversations with Östlund about the consistency of the vomit—which included chunks of octopus for one passenger and bits of shrimp for another—as a redux of their elaborate gourmet dinners. They also discussed the color palette for the raw sewage sloshing out of the toilets.

The mouthpieces were small enough to allow the actors to speak while an off-screen technician pressed a button on an air-pressure machine that would release the vomit at the right moment. Finally, they digitally removed the tube rigs from the actors’ mouths in post. And that’s after editor Mikel Cee Karlsson and Östlund spent six of the 22 months of post-production editing that one sequence, which obviously was time well spent—during one of the test screenings an audience member actually threw up. But Östlund doesn’t want us to only talk about the vomit. There’s so much more to this film to discuss.

Like, for example, the gorgeous cinematography and color grading that enhance the beauty of the locations and sets. Speaking to Zeiss, Wenzel says that he chose 29mm lenses for the ARRI ALEXA Mini LFs, capturing in widescreen using a large sensor because Östlund likes to have the freedom to manipulate the images by stitching takes together or moving props in the frame—which is part of why he spends so much time in post-production. It’s a technique that was also used extensively, and to great effect, in Parasite, another satirical look at the class struggle.

Wenzel, who collaborated with Östlund on the previous two films in the trilogy, is well acquainted with what his director likes—and doesn’t like—including lenses with “too much character” that might distract from the action. It’s why he also took great pains to work with colorist Oskar Larsson to develop a single LUT that they applied to all the footage, giving his director as consistent a look as possible especially because he would be looking at the footage in post for a long time.

Larsson’s approach was to modify the Alexa Rec 709 in terms of contrast and color temperature. He tells Zeiss that they only “added a bit of coolness to the shadows.” He also began working on developing the final look for the film, creating what they termed the “digital negative,” as early as during the dailies process. “I tried to apply what I had learned, creating a toolbox of grain, texture, film print curves, and LUTs and showed them to Fredrik,” he said.

“He liked a few of them and quickly discarded the others, often because it was simply too much. He was very allergic to texture, clarity tools, and things like acutance, which you can see on some film stocks, but which, when brought into the digital world, quickly become artificial. It was all about finding that balance.”

Wenzel supervised the visual effects, collaborating with post supervisor and co-founder of post house Tint, Vincent Larsson, and told Post Perspective that in addition to directing and writing, Östlund is hands-on with VFX. Wenzel explained that the planned VFX, such as bluescreens, explosions, and 3D assets, went to Copenhagen Visual where they worked with VFX supervisor Peter Hjorth, to complete 129 shots. The “offline” effects, which evolved throughout the edit, were done in-house at Plattform (the company co-owned by Östlund and Karlsson) by Ludwig Kallén, or by Östlund himself.

The painstaking work paid off. Not only did Triangle of Sadness earn the Palme d’Or and three Oscar nominations, it also did well at the box office, earning $24 million worldwide.

Isn’t it just a little ironic that a movie focusing on the failures of capitalism should so successfully resonate with critics and audiences—in the best possible way?

The darker dramas

Whether it’s violence imposed on a country, a community, or an individual, the three films in this category take us into the experience of oppressors and oppressed. From the trenches of WWI to the rarified air of classical music to a pastoral community whose beauty hides dark secrets, the teams behind these three films drew us into worlds that are deeply unsettling.

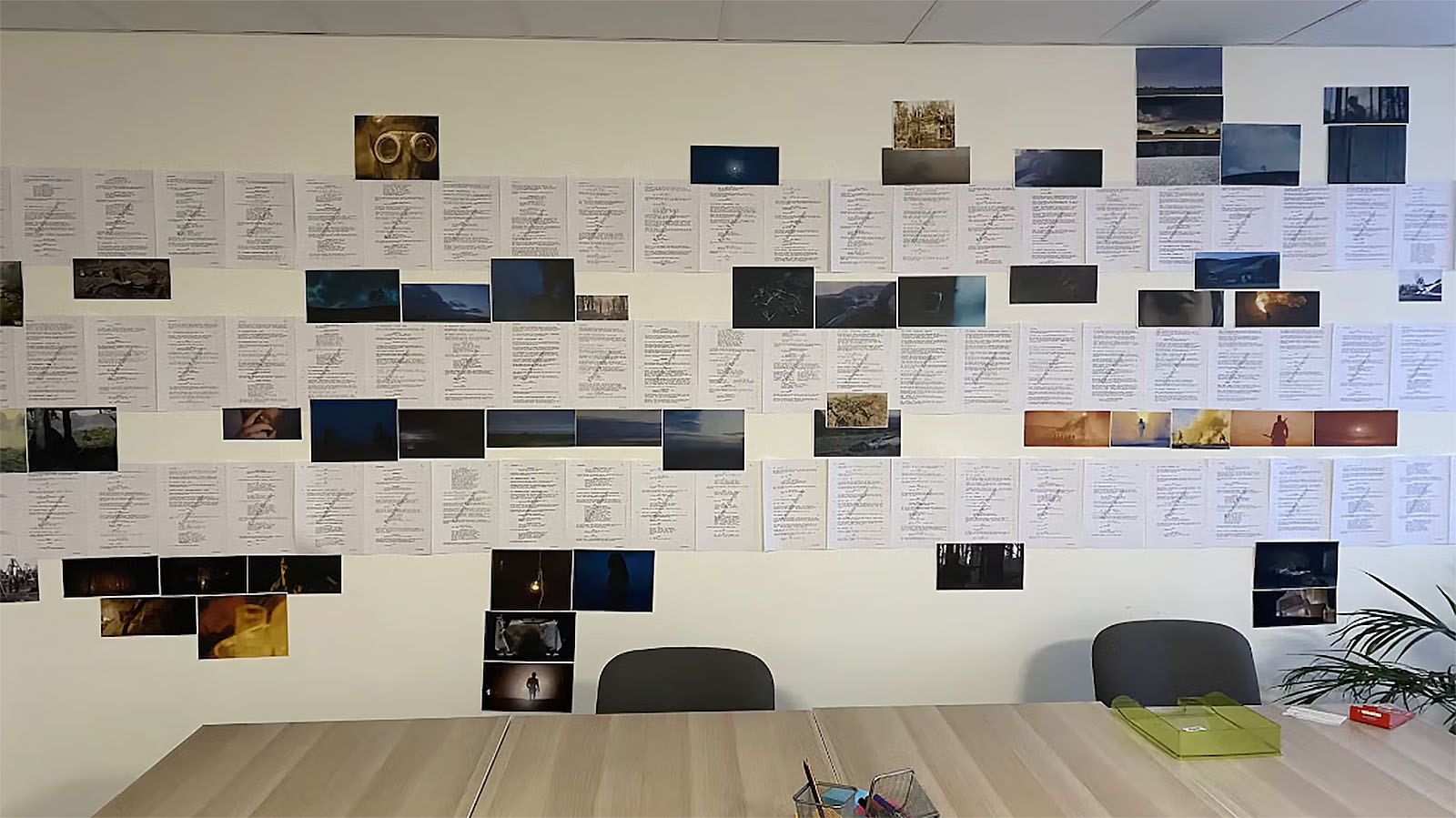

All Quiet on the Western Front

Making a war movie is daunting at best. Making a war movie that accurately represents the hell that is trench warfare compounds the level of difficulty. Its release while actual trench warfare is ongoing in Ukraine, a century after the World War I setting of Erich Maria Remarque’s novel, makes it eerily timely and essential viewing.

Netflix’s 2022 reimagining of All Quiet on the Western Front, with nine Oscar nominations (and four wins) along with seven BAFTA wins, is also a considerable feat of filmmaking. According to an article published in Die Welt, director Edward Berger’s budget of an estimated $20 million looks at least twice that onscreen. From the elaborate trench sets and the meticulous costuming and makeup to the invisibly realistic visual effects, it’s an uncomfortably immersive experience for the viewer in all the right ways.

But first, let’s look at one of the more remarkable backstories surrounding this movie. Of the many BAFTAs the film received, the one for Best Adapted Screenplay has perhaps captured the hearts of industry insiders the most. The script, originally co-written by Scottish triathlete Lesley Paterson and journalist Ian Stokell, took 16 years to come to life.

Paterson’s familiarity with endurance and adversity as an athlete helped her succeed in her filmmaking career—because every film is a sort of marathon. Although Paterson’s acceptance speech was cut from the BAFTA broadcast, her message of tenacity and gratitude resonates with the many unsung heroes of film production whose hard work often goes unrecognized by awards but whose passion drives them to do the thing they love most.

When director Edward Berger read the script that Paterson and Stokell had written, he decided that, rather than remake the 1930 American film, the new version should hew to the story’s original German roots. He took the screenplay in that direction, returning to the source material and adding to it with his own research. As a German, he felt responsible for sharing this story in a way that would reveal the war in all its brutality.

Much as the Top Gun: Maverick team did, Berger had a military adviser create a boot camp for the young actors. They learned how to run through the trenches and across the fields, shoot rifles, throw grenades, and get comfortable in the very realistic uniforms and gear. Like the young soldiers they play, the actors started off enthusiastically but soon came to realize that the experience they were portraying was brutal and difficult in itself. According to Berger, the shoot (55 days of principal photography outside of and around Prague) was cold, muddy, and miserable, conditions that actors and crew alike endured.

Berger and Oscar-winning cinematographer James Friend, ASC, BSC (who also won the BAFTA and BSC awards) chose to shoot with the Alexa 65. Friend says, “We wanted the audience to feel like they were with the characters in the trenches, which informed what cameras and lenses we shot on and how we would approach the visual storytelling. It’s an IMAX-certified camera, so it’s got the digital sense of size of the 65mm gauge of film.”

“That kind of immersiveness really worked. Our other camera was the Alexa Mini LF, which also has a very large sense of size. People always associate that sort of photography with landscape photography, but we found it very appealing to put those large-format cameras in tight spaces, like trenches and smaller rooms. I found it a more pleasing use of the format.”

Which is not to say that they didn’t have larger landscapes to cover, as well. Oscar-winning production designer Christian Goldbeck’s battlefield location was the size of two soccer pitches so the actors would have room to run without the need for a cut. The trenches, built only slightly larger than they would have been in reality to accommodate the cameras, equaled the length of ten football fields.

The location Goldbeck chose was a former Second World War airfield in Milovice, in the Czech Republic, and the team spent two months creating a detailed plan. “In order to determine exactly how many meters [were] actually needed in order to do justice to the plot and action, we measured it using a stopwatch. What does the maze of trenches look like? It was like a puzzle that we had been trying to put together at the drawing board, circling it scene by scene to find out what was absolutely essential,” he says.

“From this, we developed the floor plan for the trenches. In addition, the aim was to design the distance and topography of the battlefield so that James had the best conditions for his camera work.”

The team began digging in January 2021, with filming taking place from February through April. Before principal photography began, Friend also captured footage of a Czech forest, which would be intercut as a break from the extreme violence throughout the movie.

Although the cold and mud that the actors experienced were real, Heike Merker’s hair and makeup team enhanced their apparent suffering by applying quite realistic makeup and splints on their teeth. The closeups are especially haunting as we see the effects of war on the characters’ faces, from fresh-faced students to weary soldiers who have experienced extreme conditions, deep hunger, and exposure to mustard gas.

And then there’s the editing and score, which were intricately intertwined to propel the characters through their ordeal. Berger chose previous collaborators Sven Budelmann (editor) and BAFTA- and Oscar-winning composer Volker Bertelmann, who brilliantly created a visual and sonic experience unique to the war film genre. As Budelmann explained on The Rough Cut podcast, he and Berger began in the edit suite by experimenting with individual snare drum hits, to which Budelmann added the sound of a door closing.

The effect was akin to a gunshot, which created a percussive transition. Berger also had many hours of music cues that Bertelmann had created for a previous project they’d collaborated on, which Budelmann used in the initial cut of the film.

They then sent that back to Bertelmann with Berger’s instructions to make something unique—and when Berger and Budelmann got Bertelmann’s score back with the menacing three-chord motif, they were “knocked out of their shoes.”

Berger and Bertelmann were clear that the score should not manipulate the audience’s emotions, but rather work in service of the protagonist’s mindset, sticking as closely as possible to his story and highlighting its desperation and futility. Bertelmann was also conscientious about where he placed the music, always mindful of its purpose in any given scene. Then there was the choice to roll the final credits without music at all, letting the audience experience the intensity of their feelings without any kind of coaching.

Budelmann typically likes to be on set with the production, but due to COVID restrictions he instead worked from Berlin while the team was on location. He would send his cuts as QuickTimes to Berger, who would review them during his commute from the set each day. They started the fine cut in June 2021, and although they hadn’t been able to get the vaccine yet, they were very careful to see only their wives, children, and each other during that period.

According to an article in Post, Budelmann’s setup is a Mac Studio with the newest Avid Media Composer version installed, a classic three-monitor setup, and a three-speaker LCR system set up to work in Dolby Pro Logic. The media is stored on a Promise RAID. The team also set up a remote system, which mirrors either the desktop monitors or the output from the Avid DNxID box.

He estimates that they had approximately two to three hours of footage per shoot day—although for some of the larger battle scenes with more coverage it could be as much as five or six hours. Remarkably, his first cut was only five minutes longer than the final cut.

Budelmann (like Top Gun: Maverick editor Eddie Hamilton) never attended film school. Instead, he edited music videos and commercials—many of which he collaborated on with Berger. He says that cutting commercials, with their typically high shooting ratio, helped him learn how to quickly get through large volumes of material and find the best bits.

His music video chops are on full display as he elegantly cuts so many scenes that have no dialogue but are filled with sound effects and the austere score. He claims to have approached this movie with a more documentary style, using the juxtaposition of nature shots with the “destructive” score to highlight the idea that even with the violence that humans commit, the natural world somehow continues.

Keeping the documentary aesthetic in mind, the filmmakers also sought to make the VFX as authentic as possible. Visual effects supervisor Frank Petzold, who has worked on numerous CG-laden productions (The Golden Compass, Hollow Man, The Ring), aimed to do as much as possible practically and photographically. In an interview with AWN, Petzold describes the team’s process.

They began by storyboarding everything in pre-production, and Petzold collaborated closely with Friend in the planning of camera movement and lens choice. He also worked with the special effects team to see how much they could achieve with real explosions, choosing only to augment them with CG rather than creating them entirely synthetically. The team was also careful about how the wounds on the victims were depicted, so that the film would not be so gory that it is either unwatchable or numbing to the audience.

The film contains about 500 VFX shots, created with UPP in Prague. According to Petzold, the opening sequence is almost four minutes long with only two cuts, which required extensive rotoscoping that was shared between UPP and Cine Chromatix in Berlin. Although it was laborious, the result is a film in which the practicals and the CG are beautifully and seamlessly integrated.

If at the end of All Quiet on the Western Front there are no heroes and there is no glory, what the filmmakers have accomplished is itself heroic given the budget, filming conditions, COVID challenges, and emotional nature of the story. Taking on the kinds of films that are deeply difficult to watch reminds us that art can serve many purposes—and if one of them is to cast a light on our worst moments as humans, maybe it can help us be better.

TÁR

Unfortunately, the team behind TÁR did not participate in this article—and unlike most of the nominated movies, the technical details about the production and post-production process are a little sparse. But we gleaned what’s publicly available with the hope that maybe we’ve saved you a little Googling.

In a clear turnabout from Women Talking, in which the women are the victims of the male predators, TÁR presents the woman at the center of the film as a capable predator who is emboldened by her power. Cate Blanchett plays the role as both protagonist and antagonist, orchestrating her own fall from grace by the hubris of her actions.

But, as with Women Talking, women are key to the making of the film. In addition to Cate Blanchett’s multi-award-winning performance, Monika Willi was Oscar-nominated for her editing, and Icelandic composer Hildur Guðnadóttir (nominated for her score for Women Talking) wrote this score, as well. Did director Todd Field select Willi for her work with Michael Haneke on 2001’s The Piano Teacher, a story of a pianist whose desires and misuse of her power lead to her downfall?

From the movie’s opening, which contains the full cast and crew credits (not even set atop an establishing sequence or motion graphics), you already know that TÁR is a one-of-a-kind movie.

For example, cinematographer Florian Hoffmeister, BSC (also Oscar-nominated for his work), discusses how he and director Todd Field spent weeks doing camera tests to capture something that’s at once classically cinematic but also…different. In that interview he recounts how he worked with ARRI in Berlin to detune the glass of their Signature Primes, altering the optics for use on the ARRI ALEXA 65.

They also developed a digital film emulsion system to mimic the grain and color science of celluloid in camera. “ARRI did extensive tests [during the transition from analog to digital exhibition] to create a digital template so that they could be sure that a film graded in the digital suite and then created as a film-out on the ARRILASER would match [in both celluloid and digital presentations],” Hoffmeister said.

Principal photography on TÁR ran 65 days, with most of the shoot on location in Berlin, as well as Dresden, New York City and Staten Island, and Southeast Asia.

If the editing has been described as “economical,” it’s perhaps because Field, Hoffmeister, and Willi allow extended scenes to play out, as in the interview with Adam Gopnik early in the film. But Willi describes the “set piece” of the film as the 15-minute scene (shot as a oner) in which Tár leads a conducting class at Juilliard. That scene is then recut as an unflattering piece that’s intended to be posted on social media, which Willi calls a “hatchet job.”

According to IMDb Pro, principal photography wrapped on December 11, 2021, and post-production commenced in January 2022. Because there was a resurgence in COVID, rather than cutting in Vienna as they originally intended, the team ended up isolating in a 15th-century nunnery outside of Edinburgh, Scotland.

Unsurprisingly, Willi felt the isolation helped her zero in on the difficulties of sustaining the rhythms and structure of Field’s script. The two worked together seven days a week for a very intense first three months to get through the first cut, and she describes Field as a director who is in the cutting room from morning through evening. Field also brought Willi together with the sound designers during the editing process and wanted to have elements such as sound and visual effects already in the room so there would be no surprises later.

The original rough cut was almost three-and-a-half hours long, and they spent the rest of post-production (which ended in August 2022) “getting rid of everything that was unnecessary or that was not important for the ride that Lydia Tár was taking,” focusing on “when to enter and when to leave a shot, which was a rhythmical decision that gets tighter as the film progresses.” The final running time is a far leaner 158 minutes.

The entire editing team, including editorial assistants and the VFX editor, was also there in the nunnery. Field says that it was quiet enough to do sound and Foley work there, and they even had actors come in to do ADR. They sent that off to London-based sound designer Stephen Griffiths during the first part of post, so by the time they went to Abbey Road for the first temp mix they were “in good shape.” There were also 300 VFX shots, mostly consisting of cleanup and compositing, with the exception of an interior car set that they shot on an LED stage in Berlin. The VFX companies involved included Residence, Framestore, Alchemy 24, and Syndicate.

Company 3 colorist Tim Masick handled the final grading, resulting in what Phil Rhodes describes as cinematography that is unshowy and spectacular.

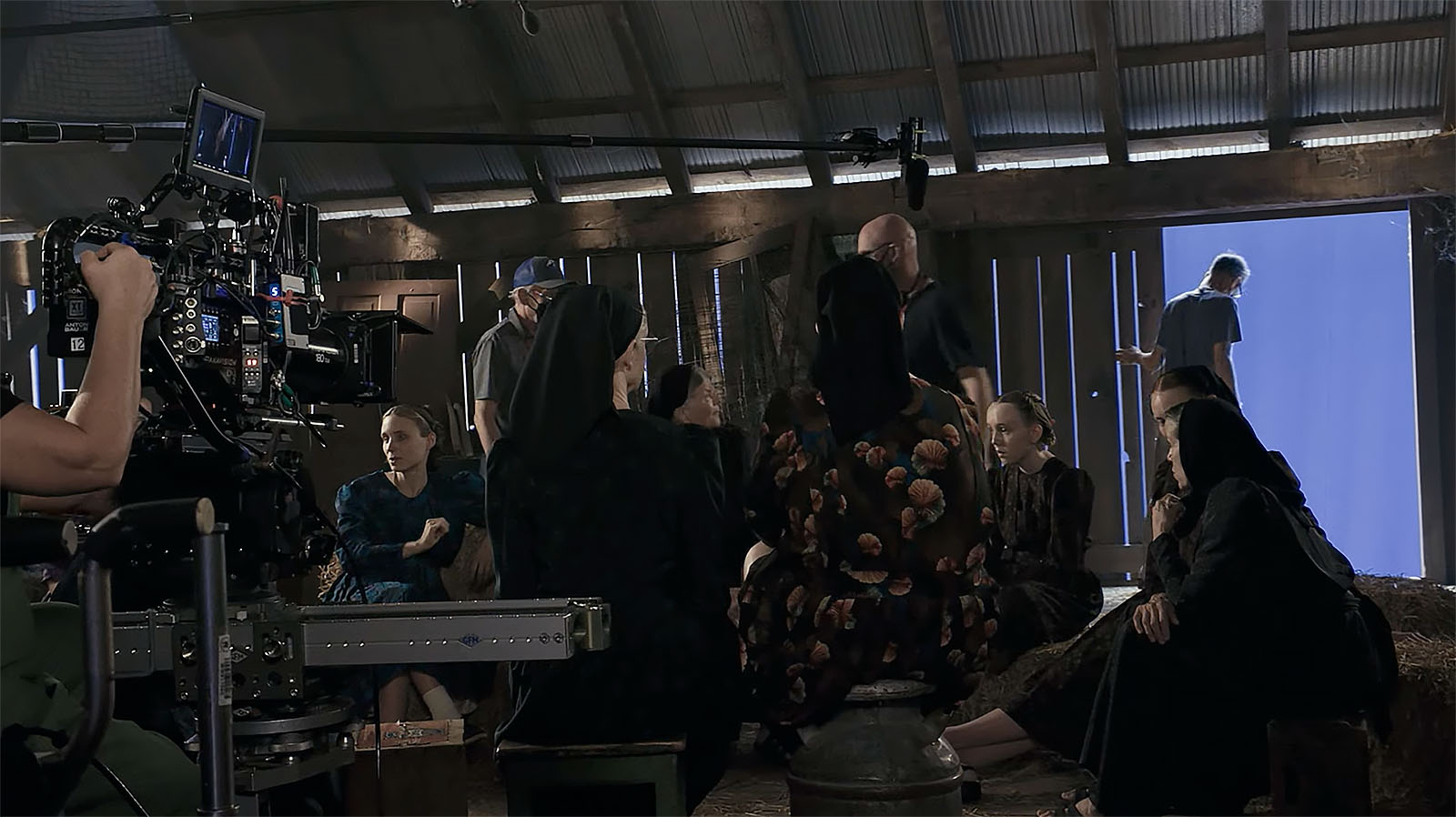

Women Talking

In this film, the women aren’t just talking. They’re writing, directing, producing, editing, composing, acting, and more. They’re finding new ways to structure a production, acknowledging that filmmakers have responsibilities beyond the set that include caring for families or for themselves.

Working from Miriam Toews’ book, Oscar-winning writer-director Sarah Polley (who won for Best Adapted Screenplay) has taken a story inspired by true events and made it into a rich big-screen experience. Editor Roslyn Kalloo’s first assistant and VFX editor, Craig Scorgie, generously gave us some insights into what went into this sweeping production. Let’s just say that it took a close-knit village.

Polley first became widely known for her work in Terry Gilliam’s The Adventures of Baron Munchausen as a child actor (and has spoken out about her experience on that film). Since then, she’s crafted a career as a writer-director, gaining worldwide critical acclaim along the way. But more than that, her experience with Gilliam has informed how she conducts her productions—many of her cast and crew credit her as one of the most collaborative, sensitive, and reasonable directors they’ve worked with.