If there’s anything we’ve learned about our readers over the years, it’s that you love content about all things color related. So we got inspired: what if we started a series of live demonstrations covering all the major color grading applications that have integrations with Frame.io?

Last month we launched the first in our series of Frame.io Fundamentals live events, beginning with Lumetri, Premiere Pro’s powerful color grading tool. We were thrilled to see so many of you attend, and excited by your engagement with us and the great questions you asked. You can watch the full session below, but we thought that for those of you who learn better by reading, we’d provide a summary of the session that highlights the key points we covered.

And by we, I mean our host Shawn McDaniel and me, the in-house colorist at Frame.io. I’ve been privileged to work with some of the world’s best color scientists on some amazing projects throughout my career (like remastering some of the Marvel titles to HDR), and I have a passion for both the creative and technical side of the finishing process.

Fundamentals of additive color theory

If you’d like to watch as you read, we begin this section of the discussion at approximately ten minutes into the video.

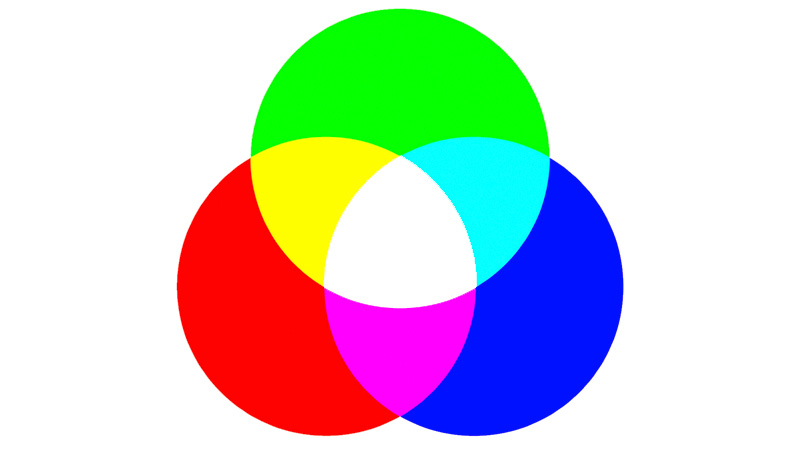

Additive color theory is based on how the human eye perceives the visible spectrum of light. The eye has three types of cone receptors that are, respectively, most sensitive to wavelengths of visible light in the red, green, and blue regions (the three primary colors). It’s called additive color theory because by adding varying amounts of the primary colors, you can achieve all perceivable colors.

Mixing them together at full intensity yields white light; removing them equally yields black (the absence of color); and mixing the primary colors in various combinations yields the secondary colors—yellow, magenta, and cyan. The difference between black and white is known as your luminance range (also known as contrast ratio or dynamic range).
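As a rough illustration of the mixing described above, here is a minimal Python sketch. It assumes each color is a normalized (red, green, blue) triple between 0.0 and 1.0; the `mix` helper is a hypothetical name for this example, not something from the session.

```python
def mix(*colors):
    """Add light sources channel by channel, clipping at full intensity (1.0)."""
    return tuple(min(1.0, sum(channel)) for channel in zip(*colors))


# The three additive primaries at full intensity.
RED = (1.0, 0.0, 0.0)
GREEN = (0.0, 1.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

# Pairs of primaries give the secondaries.
yellow = mix(RED, GREEN)
magenta = mix(RED, BLUE)
cyan = mix(GREEN, BLUE)

# All three primaries at full intensity give white.
white = mix(RED, GREEN, BLUE)

print(yellow, magenta, cyan, white)
```

Scaling all three channels down equally moves white toward black, which is the luminance range mentioned above.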

[At 12:10] If you look at these familiar color wheels, you can see the red, green, and blue colors, but you also see the secondaries and all the ranges in between. The center is white (because it’s the most neutral part) and as you move away from the center you’re introducing more saturation in the direction that you’re moving your corrector.

The color harmonies wheels show the relationship between the colors. If you look at the colors that are next to each other on the first wheel, they are said to be analogous colors that are harmonious because there is a natural falloff from one hue to the next.

The colors that are opposite each other on the color wheel are said to be the complementary colors (also called contrasting colors) because when you put them next to each other in a scene they complement each other to make the colors pop—which is how we create color contrast in a scene. The next one is the monochromatic wheel, in which you stay in a single hue but change the intensity of it.

Hue refers to different colors or different shades of colors, saturation refers to the intensity of the color and brightness refers to the luminance of an image. If you remove the chroma (or color) of an image, you’re left with the luminance information, as you can see on the grayscale chart.
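To make these terms concrete, here is a small sketch using Python's standard `colorsys` module. It assumes normalized RGB values and uses the Rec. 709 luma weights as a simple stand-in for the luminance of a pixel; the function names are hypothetical.

```python
import colorsys

# Rec. 709 luma weights for red, green, and blue.
REC709_LUMA = (0.2126, 0.7152, 0.0722)


def luma(rgb):
    """Weighted sum of the channels: what remains when the chroma is removed."""
    return sum(weight * channel for weight, channel in zip(REC709_LUMA, rgb))


def desaturate(rgb):
    """Replace a color with the gray of matching luma (the grayscale chart)."""
    y = luma(rgb)
    return (y, y, y)


def complementary(rgb):
    """Rotate the hue halfway around the wheel, keeping saturation and value."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return colorsys.hsv_to_rgb((h + 0.5) % 1.0, s, v)


red = (1.0, 0.0, 0.0)
print(complementary(red))  # the color opposite red on the wheel (cyan)
print(desaturate(red))     # red with its chroma removed
```

Note how dark pure red becomes once its chroma is gone: green carries most of the luma weight, so red and blue contribute far less to perceived brightness.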

Display technologies

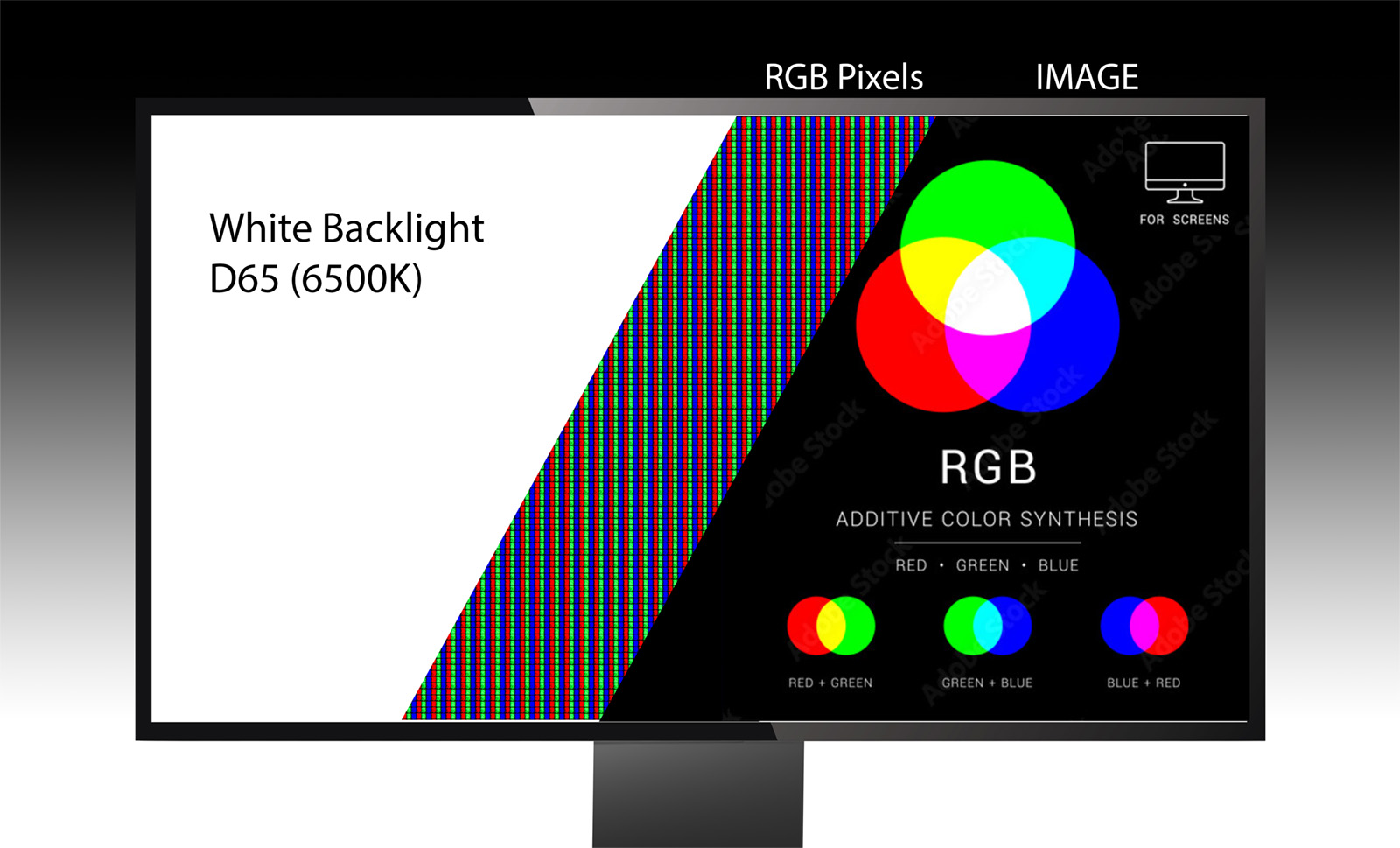

[14:10] We next covered display technologies, using an LCD monitor. You can think of an LCD monitor as a kind of “glass sandwich” that consists of numerous layers: a backlight that emits a white light calibrated at 6500 Kelvin, plus layers that contain liquid crystal material, polarizing filters, and tiny transistors that tell the pixels to what degree they should be turned on or off.

On the monitor there is a direct relationship between your luminance and your chrominance or your saturation. If you’re increasing saturation you’re basically intensifying those RGB pixels and less backlight can get through. Similarly, when you’re increasing the luminance channel you lose some saturation because more light gets through those pixels and dilutes those colors—so you want to be mindful when you’re making one adjustment to compensate for the other.

SDR and HDR

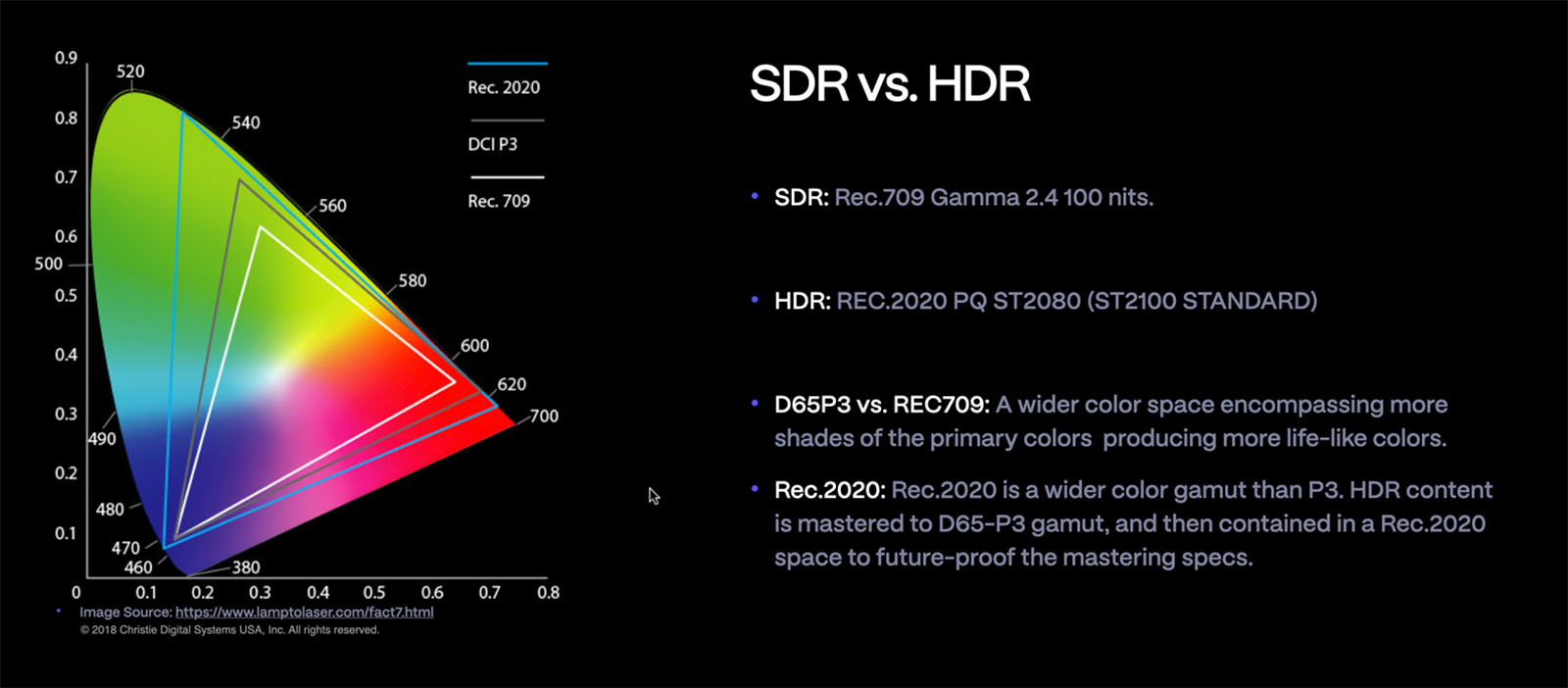

[15:45] What we do as colorists doesn’t really change in terms of the process whether we’re working in SDR (Standard Dynamic Range) or HDR (High Dynamic Range). HDR gives us a bigger palette or canvas in which to work with a wider range of perceivable colors and contrast.

In SDR, the content is mastered to the Rec. 709 color gamut on a 100-nit mastering display (nits are the unit of screen luminance) and is then encoded in Gamma 2.4. One of the fundamental issues with SDR is the lack of control over the white luminance on screen. For example, in the case where you have a lower third on your screen that’s white, that lower third has now become the brightest thing on the screen, because white is defined by the maximum luminance on that gamma-encoded content.
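The lower-third problem is easier to see with numbers. The sketch below assumes an idealized display with a pure 2.4 power curve, a perfect black level, and a 100-nit peak; real displays and the full broadcast specification are more involved, and the function names are hypothetical.

```python
PEAK_NITS = 100.0  # assumed SDR mastering peak
GAMMA = 2.4


def signal_to_nits(signal):
    """Light emitted by the idealized display for a normalized signal (0.0 to 1.0)."""
    return PEAK_NITS * (signal ** GAMMA)


def nits_to_signal(nits):
    """Inverse mapping: the signal level needed to emit a given luminance."""
    return (nits / PEAK_NITS) ** (1.0 / GAMMA)


# A pure white graphic sits at signal 1.0, so it always lands on the display peak.
print(signal_to_nits(1.0))

# A mid-level signal emits far less than half the peak because of the power curve.
print(signal_to_nits(0.5))
```

Because the signal is relative to the display peak, there is no way in this model to say "white, but only this bright" without also dimming everything below it.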

As we move from SDR to HDR, we are moving from the Rec. 709 gamut to the D65-P3 gamut and mastering that in a Rec. 2020 container, which is even larger than P3. This future-proofs the HDR mastering standard for when monitors become fully capable of showing the Rec. 2020 gamut.

Additionally, HDR content is mastered at 1000 nits of luminance and encoded in ST.2084 (also known as Perceptual Quantizer, or PQ for short) instead of Gamma 2.4. This combination allows for assigning code values to the luminance levels, which not only enables increased contrast and a larger color palette, but also provides control over how bright whites can be on screen. In HDR, with a higher dynamic range and D65-P3 color gamut, you’re able to create more depth in your image that gives you a more realistic look with more creative control.

Now, if you apply the same lower third in an HDR space, you’re actually able to assign a code value for how bright you want that lower third to be (say, 200 nits). That gets encoded into your final render so when the signal reaches a television that’s capable of displaying at 1000 nits it can read that value and knows how bright that white is supposed to be. Eventually we’ll reach the point where all displays are capable of showing Rec. 2020 and we’ll grade all our content in the Rec. 2020 gamut.
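For the curious, here is a sketch of how a luminance in nits maps to a PQ signal value. The constants are the ones published in the ST 2084 specification; the function names and the full-range 10-bit quantization at the end are simplifying assumptions for illustration only.

```python
# ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_MAX_NITS = 10000.0  # PQ describes luminance up to 10,000 nits


def nits_to_pq(nits):
    """Encode an absolute luminance as a normalized PQ signal (0.0 to 1.0)."""
    y = max(nits, 0.0) / PQ_MAX_NITS
    y_pow = y ** M1
    return ((C1 + C2 * y_pow) / (1.0 + C3 * y_pow)) ** M2


def pq_to_nits(signal):
    """Decode a normalized PQ signal back to absolute luminance in nits."""
    v_pow = signal ** (1.0 / M2)
    y = (max(v_pow - C1, 0.0) / (C2 - C3 * v_pow)) ** (1.0 / M1)
    return PQ_MAX_NITS * y


# The 200-nit lower third from the example above.
lower_third_signal = nits_to_pq(200.0)

# Assumption: simple full-range 10-bit quantization; real deliverables
# often use narrow (video) range instead.
lower_third_code = round(lower_third_signal * 1023)

print(lower_third_signal, lower_third_code)
```

The key difference from the gamma case is that the signal stands for a specific luminance rather than a fraction of whatever the display's peak happens to be.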

Beginning the grade

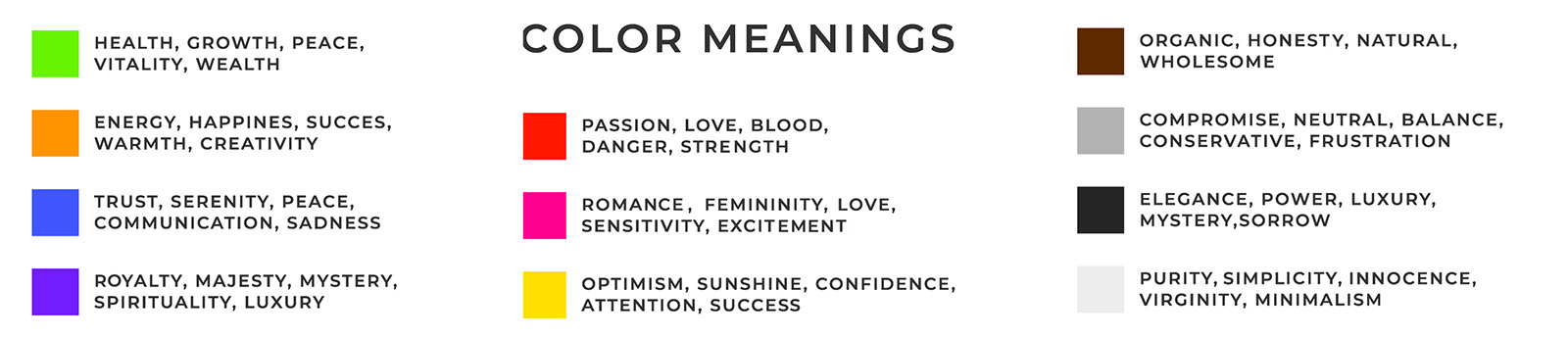

[20:23] Since we’re talking about the fundamentals of color, one of the topics that it seems isn’t addressed often enough is color psychology. We cover it briefly in this session, but I also encourage you to read more about it for yourselves because not only is it fascinating, it’s also essential knowledge for effectively capturing a director’s creative intent and conveying it to the viewer.

Colors can evoke different emotions in us. For example, if you want to convey a sense of tranquility, you wouldn’t choose the color red, which is associated with danger. Instead, you might choose shades of blue, which suggest water.

[22:36] The point is that color grading is an extension of cinematography, and the colorist is responsible for understanding the story the director and cinematographer have attempted to capture. Every video, whether it’s a feature film or a corporate video, has a story or a feeling that needs to be conveyed, and that’s when I apply my knowledge of color psychology to enhance the creative intent.

When I start grading a project, I begin by looking at the offline dailies. A basic color correction will have been applied to them and that’s what the team has been working with throughout the edit. I ask for the LUT they used, and pay attention to the production design and lighting. I talk to the filmmakers and ask questions to better understand what they’re hoping to achieve.


Maybe the story is about someone who’s running away from darkness and at the top of the mountain is freedom. I might want to have two color palettes—one for the darkness, which has cooler undertones—and then one for when they reach freedom. I adjust my overall corrections to adhere to the story and then suddenly, even though we started with the same LUT as the production, we’re already at a much better place.

I start my process that way and also have some other LUTs and presets in my toolbox, and I encourage you to save different presets and different looks that you’re creating. It’s also good to make friends with color scientists and other experts and colorists in the industry.

Once you dial in that initial look, you can audition it with the filmmaker and gauge their reaction to see if you’re headed in the right direction. There are, of course, cases where the filmmaker wants to change the look of what’s been captured for whatever reason—it may be that they shot in less than ideal conditions or maybe the story has evolved in a different direction. When that happens, it’s better to not give them a look that is too extreme initially, because that can lead to them losing trust in the process.

What’s a LUT?

[28:46] If you’ve come this far and want an explanation of what a LUT is, it stands for Look Up Table. We could do an entire session just on LUTs, but briefly:

Modern cameras that shoot in Log format are designed for the sensor to capture as much information as possible, but that capture format needs to be processed in order to be displayed correctly. So when you look at those Log files directly on your monitor, the video looks washed out.

A LUT is a translator of sorts. It takes the input value from the original camera file and transforms it to an output or display value. If you’re looking at Rec. 709 Gamma 2.4, an appropriate SDR display LUT for your type of Log or Raw files converts your footage effectively from that Log format to something that your display is capable of showing so you’re monitoring it correctly.
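To show the "translator" idea in its simplest form, here is a sketch of a one-dimensional LUT with linear interpolation between entries. The table values are made up for illustration and do not correspond to any camera's Log format; production LUTs are usually much larger and often three-dimensional.

```python
def apply_lut_1d(value, lut):
    """Look up a normalized input (0.0 to 1.0) in a 1D table, interpolating linearly."""
    value = min(max(value, 0.0), 1.0)   # clamp to the table's input range
    position = value * (len(lut) - 1)   # fractional index into the table
    lower = int(position)
    upper = min(lower + 1, len(lut) - 1)
    fraction = position - lower
    return lut[lower] * (1.0 - fraction) + lut[upper] * fraction


# Hypothetical 5-entry table that darkens the low end and stretches the top,
# roughly the kind of contrast a flat, washed-out Log image needs.
toy_lut = [0.0, 0.05, 0.25, 0.6, 1.0]

for log_value in (0.0, 0.25, 0.5, 0.625, 1.0):
    print(log_value, apply_lut_1d(log_value, toy_lut))
```

Inputs that fall between table entries are blended from their two neighbors, which is why a LUT with too few entries can produce visible banding or inaccurate colors.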

Most camera manufacturers provide basic forms of LUTs for their Log formats that you can download as your starting point for viewing, which are called conversion LUTs. There are also creative LUTs that are designed to give you a particular look. For example, you can download a LUT that will give your film the look and feel of a Ridley Scott movie, or give you the very popular orange and teal look that’s used in so many movies and shows. Let’s just say that when you start getting into that territory, there are some very good LUTs—and some bad ones.

Lumetri demo

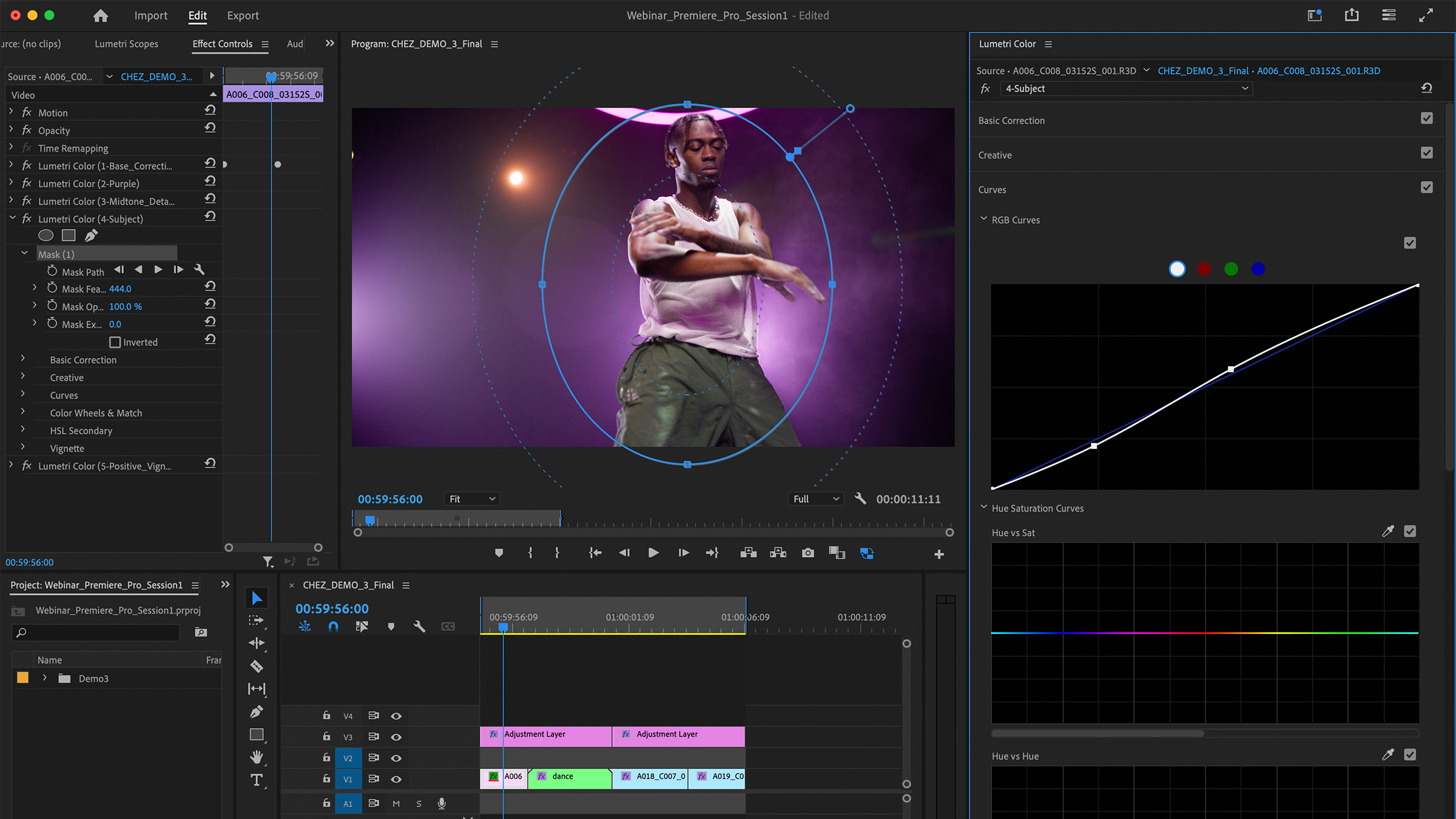

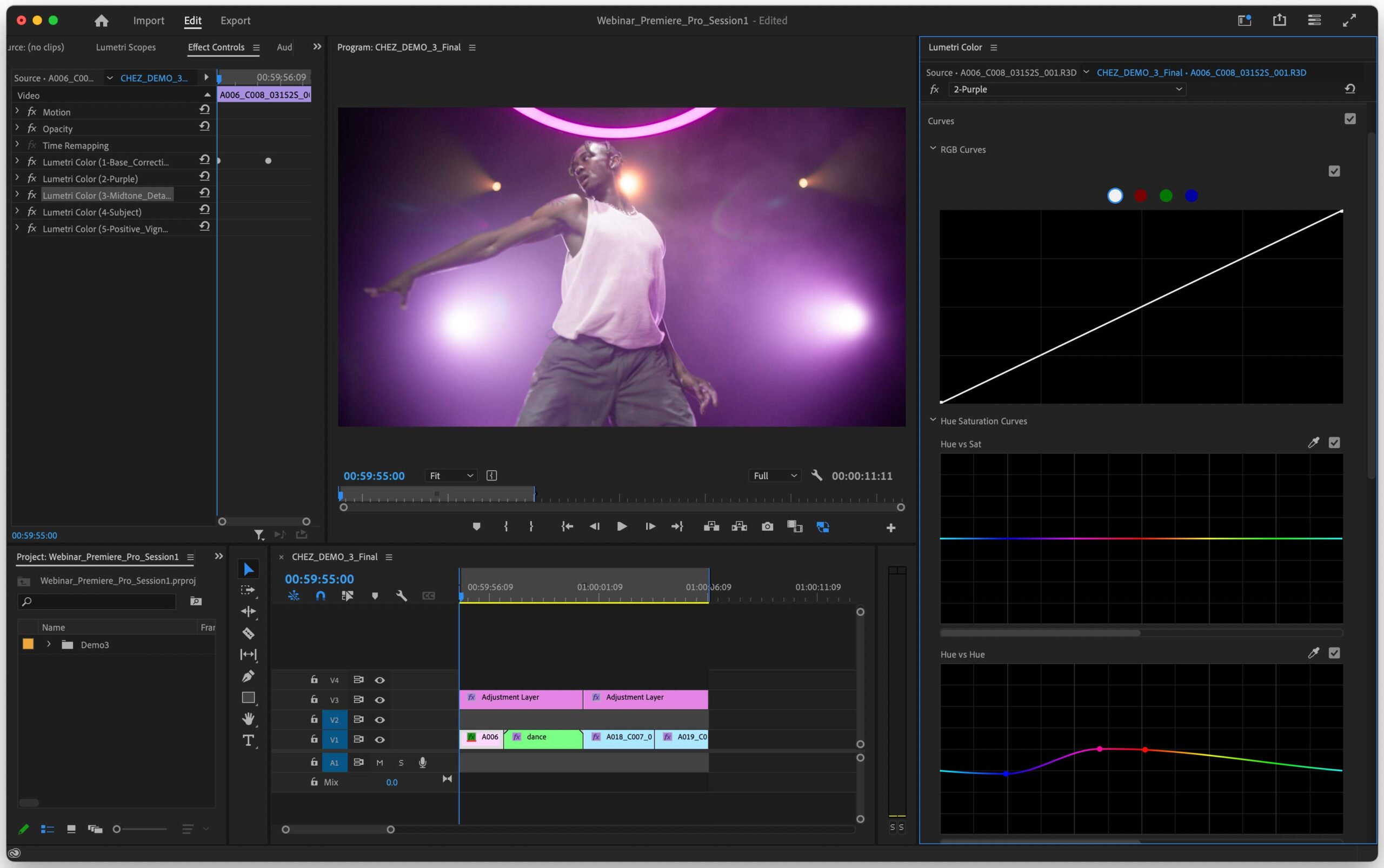

[32:05] Here we get into the demo of the Lumetri panel. First, I demonstrate how to access the panel and show you how I like to set up my workspace.

Some of you asked questions about what kind of monitoring is required, and if you’re serious about accurate color grading, you should invest in a broadcast-quality monitor. Adobe provides a list of recommended hardware here, but you can get an AJA or Blackmagic video card that will give you a correct broadcast signal out to your grading monitor, which is the one you rely on for judging your colors.

[35:15] If you’re on your GUI monitor (I was using my Mac in a P3 space but my broadcast monitor was set to Rec. 709) there are differences between the two and you want to make sure that both monitors are in the same range. You can do this by going into the Premiere Pro settings and enabling Display Color Management, which adds a small tone mapping so you can see a comparable image on both monitors.

There’s a lot to discuss about monitor calibration—so much, in fact, that we’ve decided to host an upcoming session that covers monitors and scopes.

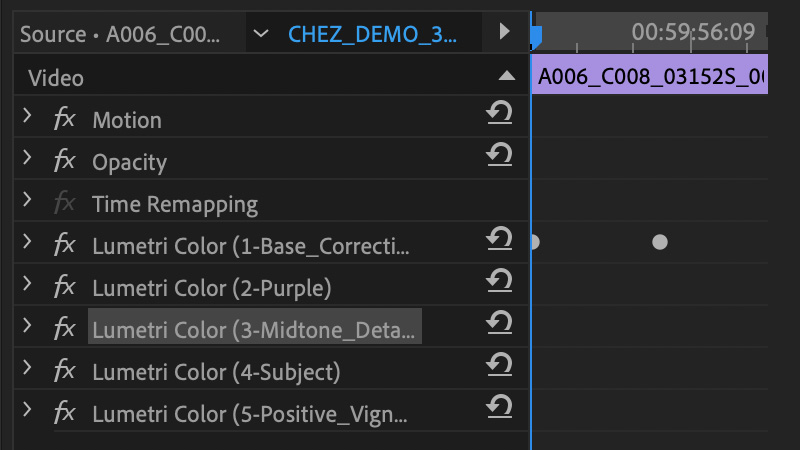

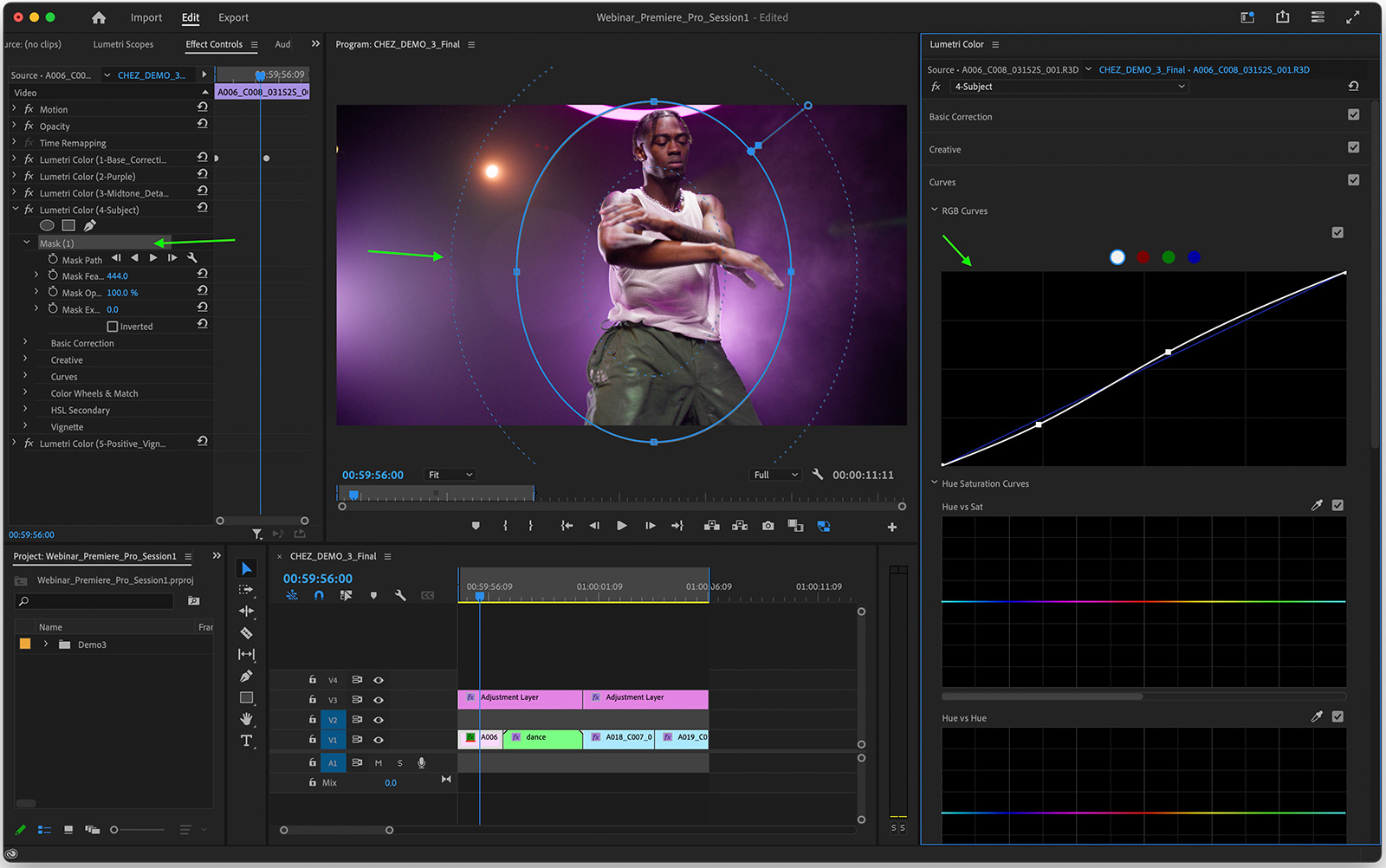

At 36:02 you can watch as I build adjustment layers and see how I keep my layers organized. I try to limit what I’m doing in each layer so I’m not doing too many corrections in one layer, and I label them so that when you have a more complex timeline and you’re copying and pasting grades you can easily know which layer is doing what.

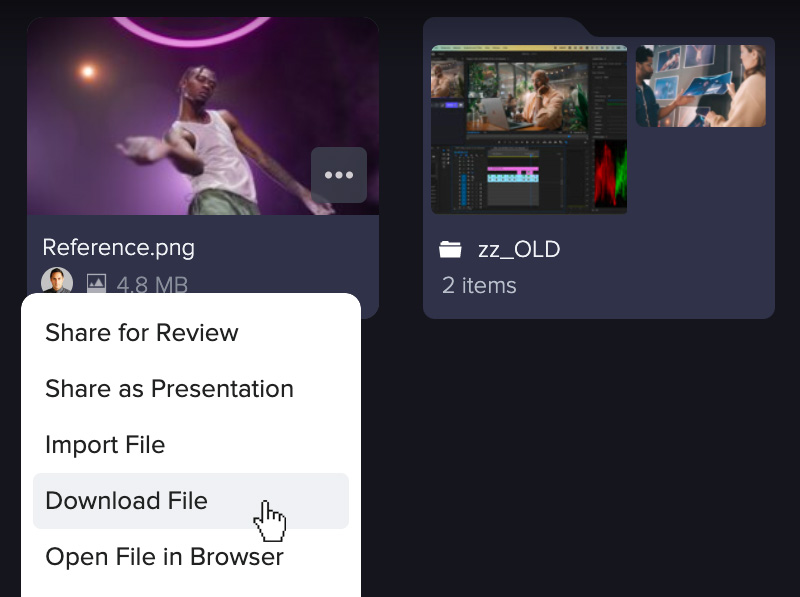

Beginning at 37:01 I demonstrate how I added LUTs, and then go on to do some basic adjustments for color temperature and exposure. At 39:33 you’ll see how I use the Frame.io panel to download a reference frame that shows how the director wants the background color changed, and how easy it is because I don’t even have to leave Premiere Pro to access it.

After that, I demonstrate how I compare the reference to the clip I’m color correcting in a side-by-side view. Note that I’m making my corrections on a Log clip and then sending that corrected signal to my adjustment layer, which converts it to Rec. 709. This is called scene-referred or input-referred color correction.

Then, at 42:45 we discuss scopes. I like to use the RGB parade, which breaks down the primary color channels and gives me a quick view of how to balance my image, and the vectorscope, which displays saturation. And at 44:14 you can see the line on the vectorscope that assists in balancing for Caucasian skin tones.
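If you’re wondering what a vectorscope is actually plotting, here is a rough sketch. It assumes normalized Rec. 709 RGB values and converts each pixel to its two color-difference components; the distance from the center stands in for saturation and the angle for hue. The function names are hypothetical, and this is not how Premiere Pro draws its scope.

```python
import math

# Rec. 709 luma weights.
KR, KG, KB = 0.2126, 0.7152, 0.0722


def rgb_to_ycbcr(rgb):
    """Split a pixel into luma (Y) and two color-difference components (Cb, Cr)."""
    r, g, b = rgb
    y = KR * r + KG * g + KB * b
    cb = (b - y) / (2.0 * (1.0 - KB))
    cr = (r - y) / (2.0 * (1.0 - KR))
    return y, cb, cr


def vectorscope_point(rgb):
    """Distance from the scope center (saturation) and angle in degrees (hue)."""
    _, cb, cr = rgb_to_ycbcr(rgb)
    saturation = math.hypot(cb, cr)
    angle = math.degrees(math.atan2(cr, cb)) % 360.0
    return saturation, angle


print(vectorscope_point((0.5, 0.5, 0.5)))  # neutral gray sits at the center
print(vectorscope_point((0.8, 0.4, 0.4)))  # a muted red
print(vectorscope_point((1.0, 0.0, 0.0)))  # a fully saturated red, farther out
```

A balanced neutral lands in the middle of the scope, so a cluster that drifts off center is a quick hint that the image carries a color cast.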

At 45:24 I show you how to achieve the director’s desired purple color by using curves. Another thing I like to be sure to do is to not just focus on a still frame, but to play the clip so I can see how the correction looks throughout. At 47:57 I show you how to work with curves in luminance and in the RGB channels.

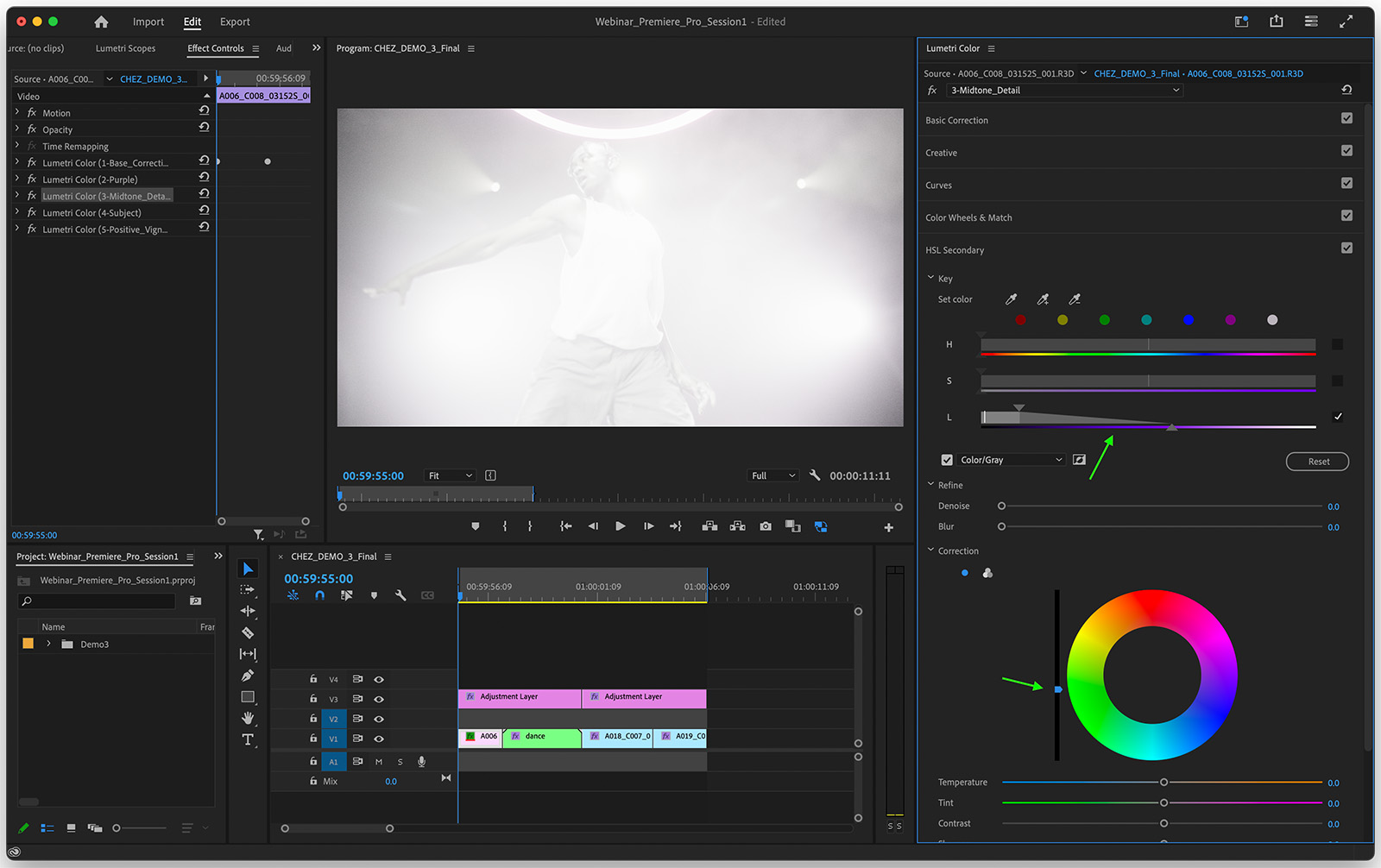

At 49:07 you’ll see how I add some mid-tone detail using the HSL (hue, saturation, luminance) secondaries with the eyedropper tool. Then, at 50:33 I use the luma channel corrector slider to add a little more contrast.

At 51:04 the vignetting is a little heavy so I’m able to take that down a bit using the vignette tab. And then, at 52:09 I show you how I copy the grades I’ve applied to my first clip and apply them to the second clip, which is quite similar. Finally, at 53:11 I have a new clip in a very different setup. The LUT they applied during editorial gives us a pleasing image, but the filmmakers wanted to bring more life into the image and create more contrast. So there I deconstruct the final look I’ve achieved and show you the steps I took to get there.

Q&A

At 57:05 we begin the Q&A part of the session. The first question was around the importance of having the right hardware. To recap, because it’s so important to be able to watch clips in motion, having hardware that supports watching the full-resolution clip as it’s going to be mastered is vital. Without that ability you’ll have to view a lower resolution image, which is less than ideal. That said, there are now many affordable systems that will be adequate for playing back high-quality, high-resolution video.

[58:19] The next question covered how to white balance in order to have the most control over your footage in post. The person asking the question has used auto-balance, which has yielded mixed results. It’s why, during the demo portion of this session, I covered the ways you can manually manipulate the image—because if you’re using auto settings, you end up having less control.

The rule of thumb is that you want to keep your lighting values as consistent as possible when shooting in similar conditions. That said, there’s an eyedropper tool in Lumetri for white balance. If you have a reference in your footage of the white that you’ve derived from an auto balance and it looks right to you, you can simply grab the eyedropper and select it, which then automatically adjusts your temperature and tint and gives you a good start toward a pleasing result.
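The idea behind a white balance eyedropper can be sketched with simple per-channel gains. To be clear, this is a generic illustration and not Lumetri's implementation, which adjusts temperature and tint controls; it assumes normalized RGB values and a sampled patch that should be neutral, and the function names are hypothetical.

```python
def white_balance_gains(sample):
    """Gains that make the sampled patch neutral, leaving green untouched."""
    r, g, b = sample
    return (g / r, 1.0, g / b)


def apply_gains(pixel, gains):
    """Scale each channel by its gain, clipping at full intensity."""
    return tuple(min(1.0, channel * gain) for channel, gain in zip(pixel, gains))


# A "white" wall that reads warm: too much red, not enough blue.
sampled_white = (0.9, 0.8, 0.6)
gains = white_balance_gains(sampled_white)

# The sampled patch becomes neutral, and every other pixel shifts with it.
print(apply_gains(sampled_white, gains))
print(apply_gains((0.45, 0.30, 0.20), gains))
```

This is also why the choice of sample matters: if the patch you click isn’t supposed to be neutral, the whole image gets pushed toward its complementary color.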

[1:00:13] We had a few questions about monitors, asking whether it’s worth getting a monitor with pre-built color profiles, such as an accurate Rec. 709, or whether calibrating is a better option.

It’s an important question. So, one of the reasons why we use these expensive monitors and reference monitors is because we want to see the imperfections of the image. A consumer monitor has built-in features like noise reduction and if you have noisy footage and you’re judging it on a monitor that masks those artifacts, you think your image looks good and then you render that out and... it doesn’t. So you need a proper monitor that’s showing you all the imperfections.

There are professional calibrators that you can hire, and you can also buy different devices like a C6 probe and put it on your display to see if it’s properly calibrated.

If you don’t have a broadcast monitor and you need to rely on a computer monitor I think the most important thing is to try to stick with one ecosystem. Apple, for example, has a level of consistency even in their mid-range devices, like iPads (which are capable of very high-quality HDR displays).

In closing, it’s important to properly calibrate your monitors if you’re doing professional grading. And, again, we’ll plan a separate session covering that topic!

Meanwhile, thank you for listening and reading. I hope you’ll join us for the next session on August 23 at 1pm ET for a demo with Brandon Heaslip of Colorfront. Stay up to date with our Frame.io Live events right here.